The 'Live but Dead' Diagnosis

There is a specific silence that falls over a boardroom three months after a major data platform 'go-live.' The migration is complete. The legacy Netezza or Hadoop appliances are decommissioned. The System Integrator (SI) has high-fived the CIO and left the building. Yet, the Databricks Usage Report shows a flatline.

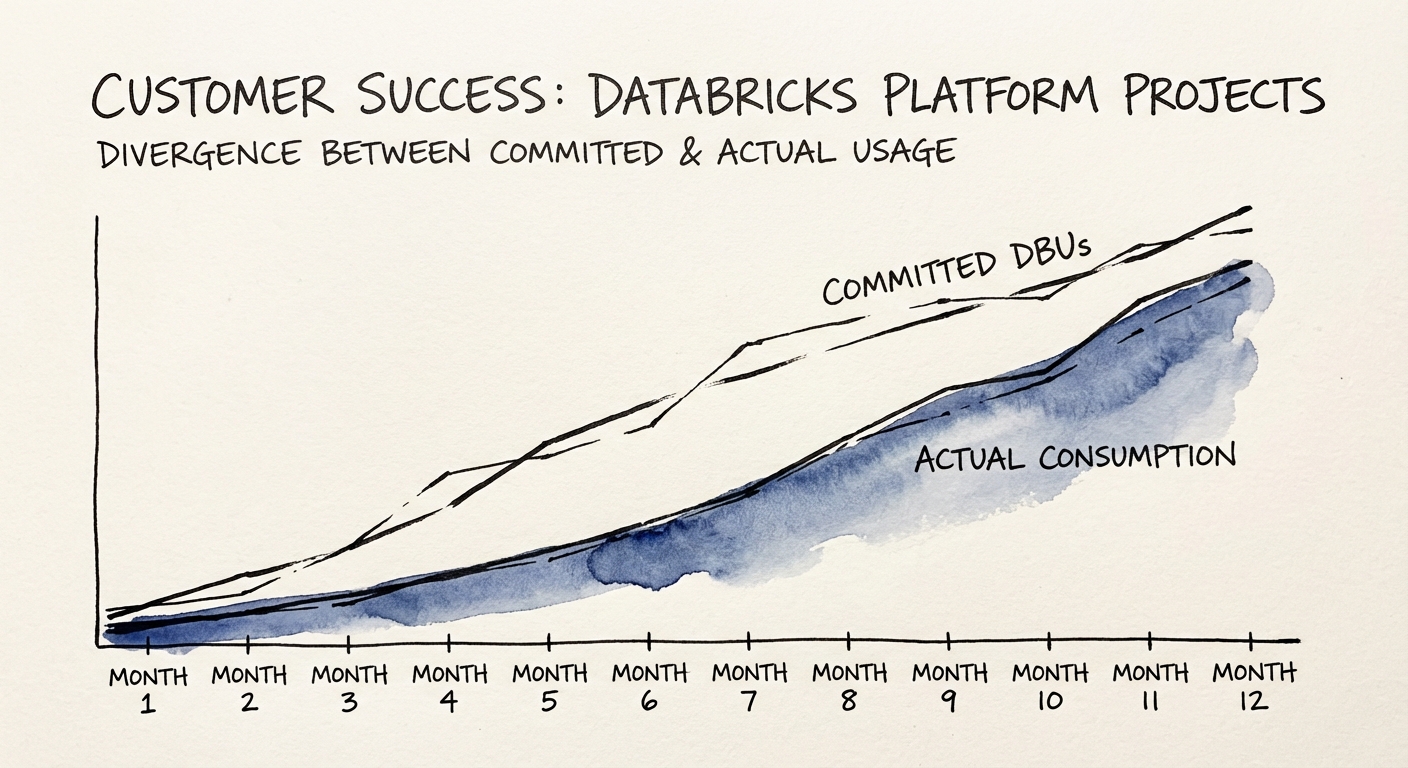

We call this the Databricks Consumption Gap. In our analysis of Series B and C data platform investments, we see an average 58% gap between committed Databricks Units (DBUs) and actual, value-generating consumption in the first year. The platform is technically live, but commercially dead.
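As a rough illustration of the arithmetic, the gap can be expressed as the unused share of the commit. This is a minimal sketch: the function name and the sample figures are hypothetical, and deciding which consumption counts as "value-generating" is left to the reader.

```python
def consumption_gap(committed_dbus: float, consumed_dbus: float) -> float:
    """Return the share of committed DBUs that went unused (0.0 to 1.0)."""
    if committed_dbus <= 0:
        raise ValueError("committed_dbus must be positive")
    # Consumption above the commit is treated as a zero gap, not a negative one.
    used = min(consumed_dbus, committed_dbus)
    return 1.0 - used / committed_dbus


# Illustrative numbers only: 1,000,000 DBUs committed, 420,000 consumed.
gap = consumption_gap(1_000_000, 420_000)
print(f"Consumption gap: {gap:.0%}")
```

Tracking this figure monthly rather than annually makes a flatline visible long before the renewal conversation.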

The root cause is rarely the technology. Databricks is the Ferrari of data platforms. The problem is that your organization is driving it like a 2005 Corolla. Most 'failures' stem from a Lift and Shift mentality where legacy technical debt and, more importantly, legacy processes are simply re-platformed into the cloud. You have moved your data silos from on-premises servers to the Lakehouse, but you haven't changed how the business consumes that data.

The 'Build It and They Will Come' Fallacy

Engineering teams often measure success by Migration Velocity (tables moved per week). The business measures success by Insight Velocity (time to answer a new question). When these two metrics diverge, you get the Consumption Gap. Your engineers are celebrating a 'successful migration' of 5,000 tables, while your marketing team is still exporting CSVs to Excel because they don't know how to query the 'Silver Layer' in the Lakehouse.

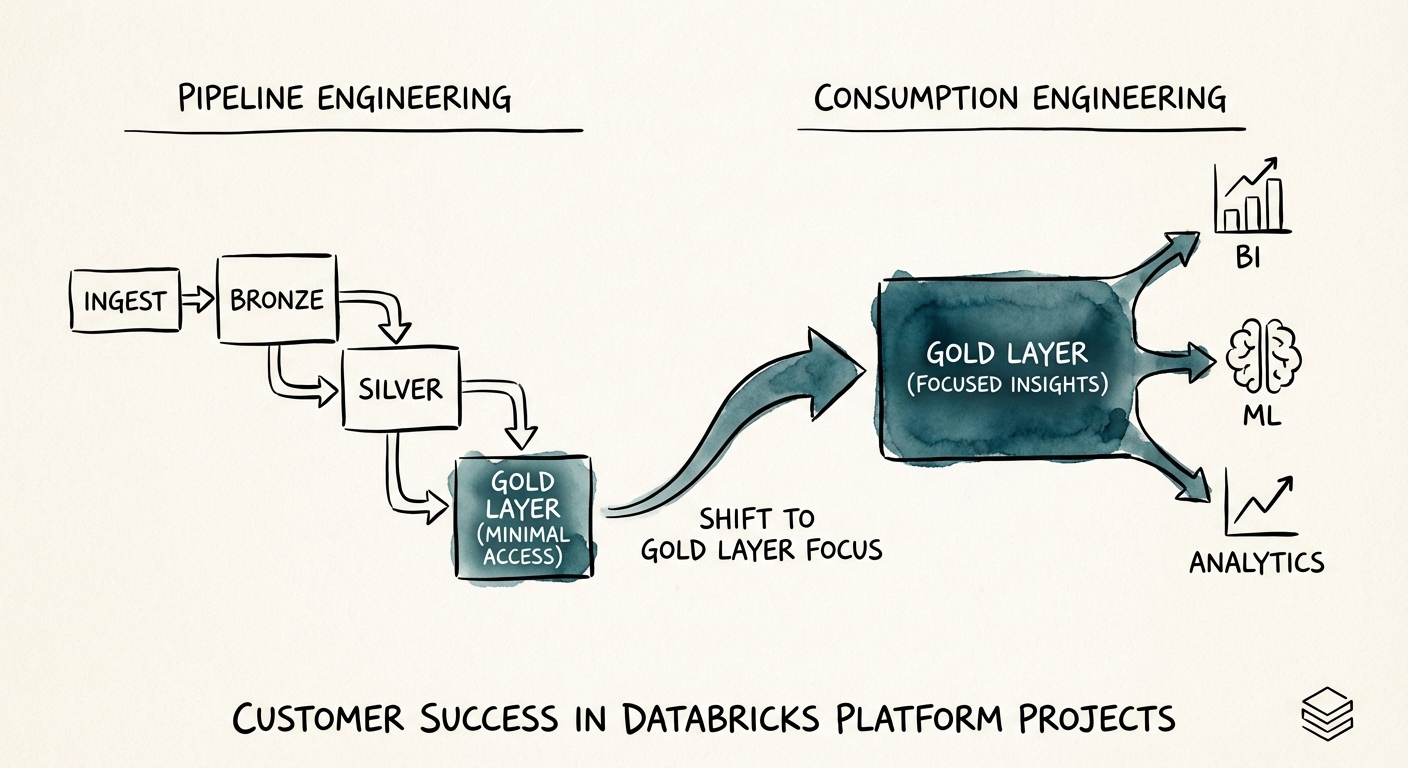

From Pipeline Engineering to Consumption Engineering

To close the gap, you must pivot your operational focus from 'delivering pipelines' to 'delivering consumption.' This requires a fundamental shift in your process documentation and success metrics. It starts with Unity Catalog.

Too many implementations treat Unity Catalog as a technical governance checklist item to be 'configured later.' This is a fatal error. Unity Catalog is not just a security tool; it is your internal Data Marketplace. Without a well-documented, business-friendly catalog, your data assets are invisible. In the Lakehouse era, discoverability is adoption.
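One way to make "discoverability" measurable is to track what share of tables carry a business-readable description. The sketch below assumes you have already exported table metadata as a list of dictionaries with hypothetical `name` and `comment` keys (for example, from the catalog's information schema); it does not call any Databricks API.

```python
def documentation_coverage(tables):
    """Return (coverage ratio, names of tables with no usable description)."""
    undocumented = [
        t["name"]
        for t in tables
        # A missing, empty, or whitespace-only comment counts as undocumented.
        if not (t.get("comment") or "").strip()
    ]
    coverage = 1.0 - len(undocumented) / len(tables) if tables else 0.0
    return coverage, undocumented


# Hypothetical metadata export; table names are illustrative.
tables = [
    {"name": "gold.revenue_daily", "comment": "Daily recognized revenue by region."},
    {"name": "gold.churn_scores", "comment": None},
    {"name": "silver.events_clean", "comment": "   "},
]
coverage, missing = documentation_coverage(tables)
print(f"Documented: {coverage:.0%}; missing descriptions: {missing}")
```

A coverage figure like this belongs on the same dashboard as pipeline health, since an undocumented table is effectively an unpublished one.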

The 80/20 Inversion Rule

Audit your engineering sprint tickets for the last quarter. You will likely find:

- 80% of effort: Bronze/Silver Layer ingestion (cleaning, moving, formatting data).

- 20% of effort: Gold Layer logic (building business-ready data products).

To drive consumption, you must invert this ratio. High-performing data teams automate the Bronze/Silver ingestion using declarative pipelines (such as Delta Live Tables) and spend their scarce mental energy on the Gold Layer: defining the metrics, semantic models, and 'Data Products' that the C-Suite actually cares about.
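The sprint audit described above can be approximated with a few lines of code, assuming each ticket has been tagged with a medallion layer and an effort estimate. The `layer` and `points` field names and the sample tickets are hypothetical; adapt them to whatever your ticketing export provides.

```python
from collections import defaultdict

INGESTION_LAYERS = {"bronze", "silver"}


def effort_split(tickets):
    """Return the share of effort spent on ingestion vs. Gold Layer work."""
    totals = defaultdict(float)
    for ticket in tickets:
        layer = ticket["layer"].lower()
        # Bronze and Silver are grouped together as ingestion effort.
        bucket = "ingestion" if layer in INGESTION_LAYERS else layer
        totals[bucket] += ticket["points"]
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {bucket: points / grand_total for bucket, points in totals.items()}


# Illustrative quarter of sprint tickets (story points are made up).
tickets = [
    {"layer": "Bronze", "points": 50},
    {"layer": "Silver", "points": 30},
    {"layer": "Gold", "points": 20},
]
for bucket, share in effort_split(tickets).items():
    print(f"{bucket}: {share:.0%}")
```

Running this per quarter gives a simple trend line for whether the ratio is actually inverting.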

The Process: Documenting for the Consumer

Your process documentation needs to graduate from technical runbooks to Data Product Specifications. Every dataset in the Gold Layer must have a documented 'Product Sheet' covering:

- The Business Question: What problem does this table solve?

- The Latency SLA: When is the data ready (e.g., 'Daily at 8:00 AM EST')?

- The Trust Score: A calculated metric of data quality and freshness.

- The Sample SQL: Copy-paste code snippets for common business queries.
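A Product Sheet can be kept as structured data rather than a free-form wiki page, so that completeness and the Trust Score can be checked automatically. The sketch below is one possible shape, not a standard: every field name is hypothetical, and the Trust Score formula (quality-check pass rate, zeroed when the data is staler than the allowed window) is an assumption chosen for simplicity.

```python
from dataclasses import dataclass


@dataclass
class ProductSheet:
    table: str
    business_question: str
    latency_sla: str
    sample_sql: list[str]
    checks_passed: int            # data quality checks that passed on last run
    checks_total: int             # data quality checks executed on last run
    hours_since_refresh: float
    max_staleness_hours: float = 24.0

    def trust_score(self) -> float:
        """Assumed formula: pass rate (0-100), or 0 if the data is stale."""
        if self.checks_total == 0:
            return 0.0
        quality = self.checks_passed / self.checks_total
        fresh = self.hours_since_refresh <= self.max_staleness_hours
        return round(100 * quality, 1) if fresh else 0.0


# Illustrative sheet; the table and query are made up.
sheet = ProductSheet(
    table="gold.revenue_daily",
    business_question="How much revenue did we recognize yesterday, by region?",
    latency_sla="Daily at 8:00 AM EST",
    sample_sql=["SELECT region, SUM(revenue) FROM gold.revenue_daily GROUP BY region"],
    checks_passed=19,
    checks_total=20,
    hours_since_refresh=6.0,
)
print(sheet.trust_score())
```

Publishing the score next to the table description lets consumers judge a dataset before they build on it.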

The Partner Selection Pivot

If you are relying on external partners to build your Databricks capability, you need to change how you vet them. The 'Body Shop' model, where partners bill by the hour for warm bodies to write PySpark code, rewards prolonging the build, not accelerating consumption.

When evaluating a System Integrator, ask for their Consumption Engineering Playbook. If they show you a Gantt chart of a migration, walk away. You are looking for a partner who talks about:

- Use Case Backlogs: Do they start with the business questions or the schema migration?

- Enablement Workshops: Do they train your analysts on Databricks SQL, or just hand over the keys?

- The 'Consumption Architect': A role that bridges the gap between Data Engineering and Product Management.

The Private Equity Angle

For PE sponsors, the Consumption Gap is a hidden valuation killer. You are paying for the compute commit and the implementation services, but if the 'Usage' line on the P&L isn't growing, the asset isn't scaling. A flat Databricks bill isn't a sign of efficiency; it's a sign of a stalled product roadmap. When conducting technical due diligence, look past the architecture diagrams. Ask to see the Daily Active Users (DAU) of the platform. If the architecture is 'Modern Data Stack' but the DAU is in the single digits, you are buying a shelfware castle.
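If the target company cannot produce a DAU figure on request, it can usually be derived from a query or audit log export. The sketch below assumes such an export has been reduced to (date, user) pairs; the input shape and the sample users are hypothetical, and the source of the log is left open.

```python
from collections import defaultdict
from datetime import date


def daily_active_users(events):
    """Count distinct users per day from (day, user) pairs."""
    users_by_day = defaultdict(set)
    for day, user in events:
        # A set ensures a user running many queries is counted once per day.
        users_by_day[day].add(user)
    return {day: len(users) for day, users in sorted(users_by_day.items())}


# Illustrative log extract; users and dates are made up.
events = [
    (date(2024, 3, 4), "analyst_a"),
    (date(2024, 3, 4), "analyst_a"),
    (date(2024, 3, 4), "analyst_b"),
    (date(2024, 3, 5), "analyst_a"),
]
for day, count in daily_active_users(events).items():
    print(day.isoformat(), count)
```

It is worth filtering out service accounts and scheduled jobs before counting, since the question in diligence is how many people use the platform, not how many pipelines run on it.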