The "Black Box" Valuation Trap

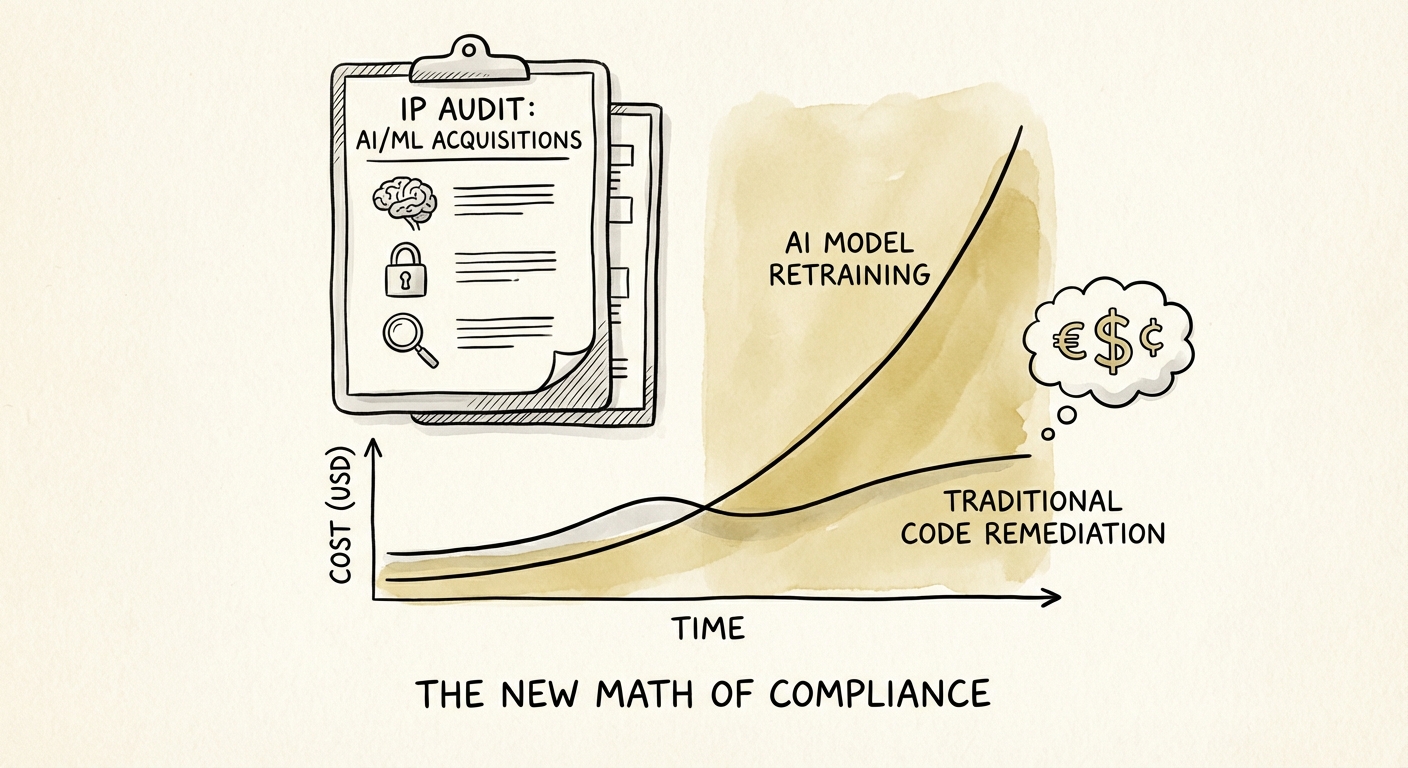

In traditional software M&A, intellectual property (IP) due diligence is deterministic. You scan the code, check the open-source licenses, and verify the copyright assignments. If there is a GPL violation, you remediate it by rewriting that module. The risk is contained.

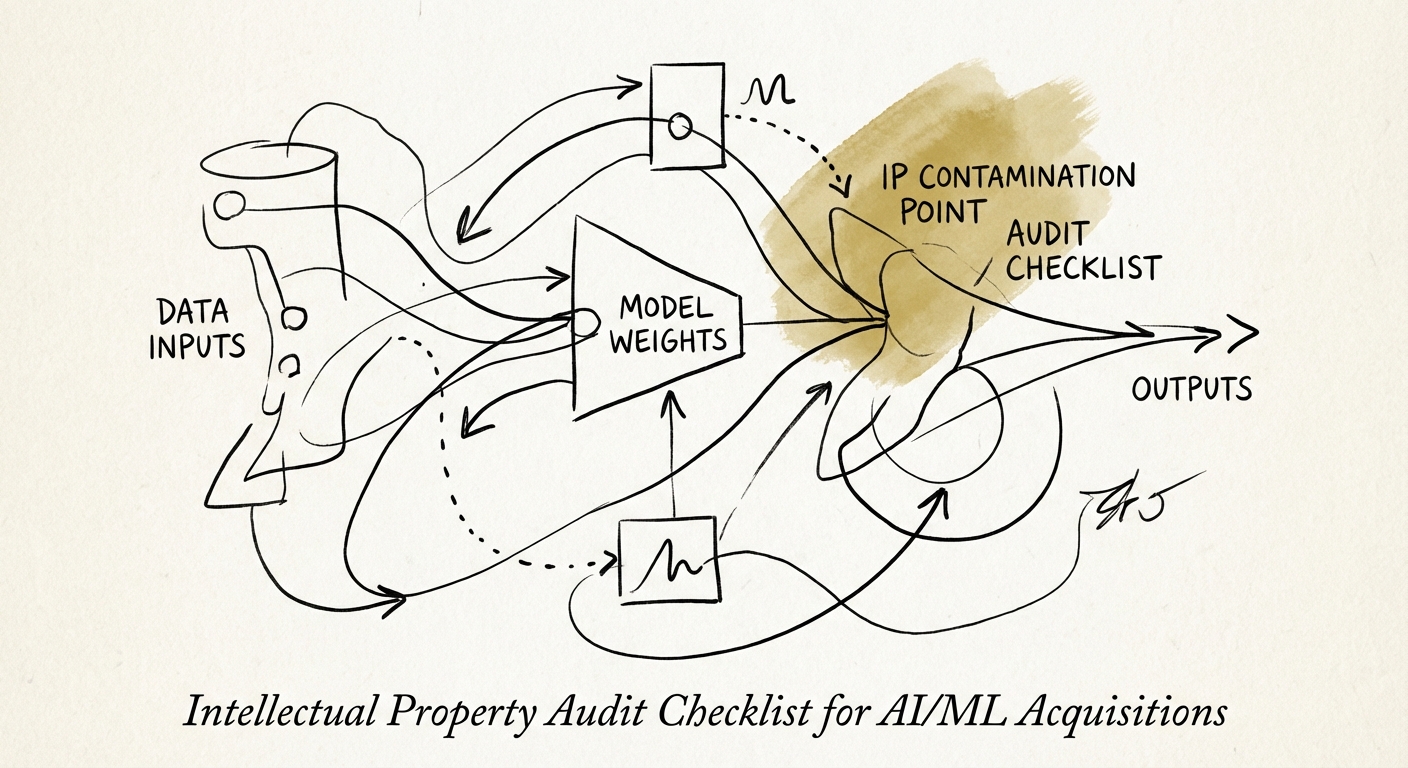

In AI/ML acquisitions, IP risk is probabilistic and contagious. You are not just acquiring code; you are acquiring weights—mathematical representations of patterns derived from vast datasets. If that underlying data is "poisoned"—harvested without consent, violating copyright, or containing viral open-source licenses—the entire model may need to be destroyed.

We call this the "Retraining Tax." Unlike a code rewrite, which might cost $50,000 in engineering hours, retraining a contaminated foundation model can cost $2M+ in compute and, more critically, 4-6 months of lost go-to-market time. According to Synopsys' 2024 OSSRA report, 66% of AI, Machine Learning, and Big Data codebases contain high-risk vulnerabilities or license conflicts. For a private equity sponsor, this means there is a better-than-even chance your AI target is sitting on a legal landmine that standard IP warranties won't cover.

Regulators and courts are already signaling that "disgorgement" (the deletion of models trained on illicit data) is a real remedy. If you buy an AI company whose core asset is a model trained on scraped, copyrighted data, you aren't buying an asset; you're buying a liability.

The 4-Layer AI IP Audit Framework

Standard IP checklists fail in AI deals because they focus on code ownership while ignoring data provenance. To protect deal value, you must audit four distinct layers of the AI stack.

Layer 1: Data Provenance (The Input)

This is the highest risk vector in 2026. You must trace the lineage of every dataset used to train the model.

- Scraping Consent: Did the target ignore robots.txt files? New case law suggests this removes "fair use" defenses.

- PII Contamination: Does the training data contain Personally Identifiable Information? Under GDPR and CPRA, "unlearning" a specific user's data often requires a full model retrain.

- License Compatibility: Did they train a proprietary model using datasets licensed only for "Research Use" (e.g., certain academic datasets)?
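The scraping-consent check in the list above can be partly automated. The following is a minimal sketch, assuming the target can hand over its crawl logs and an archived copy of each site's robots.txt from the time of collection; the crawler name, URLs, and robots.txt content are hypothetical. It uses Python's standard `urllib.robotparser` and flags fetched URLs that the rules disallowed. A hit is a lead for counsel to review, not a legal conclusion.

```python
from urllib.robotparser import RobotFileParser


def disallowed_urls(robots_txt: str, user_agent: str, urls: list[str]) -> list[str]:
    """Return the URLs that the given robots.txt forbids for user_agent."""
    parser = RobotFileParser()
    # Parse an in-memory copy so the audit reflects the archived rules,
    # not whatever the site serves today.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical archived robots.txt and crawl log, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

CRAWL_LOG = [
    "https://example.com/articles/1",
    "https://example.com/private/report.pdf",
]

violations = disallowed_urls(ROBOTS_TXT, "target-crawler", CRAWL_LOG)
print(violations)
```

In practice the same check would run per domain across the full crawl log, with the share of disallowed fetches feeding into the risk score for that dataset.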

Layer 2: Model Architecture & Weights (The Engine)

Even if the code is custom, the starting point often isn't. Many startups fine-tune open-source models (like Llama 2, Mistral, or Falcon).

- Viral Licenses: Are they using AGPL-licensed libraries in their inference engine? If so, your entire proprietary SaaS platform might legally need to be open-sourced.

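- Commercial Use Restrictions: Some "open" model licenses carry commercial-use conditions. The Llama 2 license, for example, requires a separate license from Meta for licensees with more than 700 million monthly active users, and it bars using model outputs to improve other large language models.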
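A first-pass screen for both bullets above can run over the target's component inventory. This is a rough sketch, assuming the inventory has already been exported as name/license pairs; the component names, the `research-only` and `custom-community-license` labels, and the rule sets are hypothetical and deliberately non-exhaustive. It only routes components to legal review and does not interpret any license.

```python
# Illustrative, non-exhaustive rule sets (assumptions for this sketch).
NETWORK_COPYLEFT = {"AGPL-3.0-only", "AGPL-3.0-or-later"}
RESTRICTED_USE = {"CC-BY-NC-4.0", "research-only", "custom-community-license"}


def flag_components(components):
    """Return (name, reason) pairs for components that need legal review."""
    findings = []
    for component in components:
        license_id = component["license"]
        if license_id in NETWORK_COPYLEFT:
            findings.append((component["name"], "network copyleft in serving path"))
        elif license_id in RESTRICTED_USE:
            findings.append((component["name"], "commercial-use restriction"))
    return findings


# Hypothetical inventory of the inference stack and base model.
inventory = [
    {"name": "inference-gateway", "license": "AGPL-3.0-only"},
    {"name": "base-model-weights", "license": "custom-community-license"},
    {"name": "tokenizer-lib", "license": "Apache-2.0"},
]

findings = flag_components(inventory)
for name, reason in findings:
    print(f"{name}: {reason}")
```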

Layer 3: The "Human-in-the-Loop" Trap

The US Copyright Office has repeatedly held that material generated by AI without sufficient human authorship is not copyrightable. This creates a valuation gap: if your target's product is 100% AI-generated, they may own zero IP in their final output.

Diligence Question: Can the target prove significant human modification of AI outputs? If not, their defensive moat against competitors copying their content is non-existent.

Structuring Protection: The "Data Bill of Materials"

To mitigate these risks, investors must demand a Data Bill of Materials (DBOM) alongside the traditional Software Bill of Materials (SBOM). The DBOM should list every dataset, its source, its license, and the consent mechanism used.
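One way to picture a DBOM is as a structured record per dataset with the four fields named above. The sketch below is an assumption about shape, not an established standard: the class name, field names, and sample entries are all hypothetical. It also shows a simple completeness check that surfaces entries with a missing license or consent mechanism.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DatasetEntry:
    """One DBOM row: the four fields the diligence team asks for."""
    name: str
    source: str
    license: str
    consent_mechanism: str


def missing_fields(entry: DatasetEntry) -> list[str]:
    """Return the names of fields left blank in a DBOM entry."""
    return [field for field, value in asdict(entry).items() if not value.strip()]


# Hypothetical entries, for illustration only.
dbom = [
    DatasetEntry("support-tickets-2023", "first-party CRM export",
                 "proprietary", "customer terms of service clause"),
    DatasetEntry("web-forum-scrape", "https://forum.example.com", "", ""),
]

gaps = {entry.name: missing_fields(entry) for entry in dbom if missing_fields(entry)}
print(json.dumps(gaps, indent=2))
```

Any dataset that shows up in `gaps` is one the target cannot fully account for, which is the signal the diligence team is looking for.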

If the target cannot produce a DBOM, you must assume the model is contaminated. In this scenario, we recommend three deal protections:

- The "Retrain" Escrow: Hold back 15-20% of deal consideration specifically to cover the cost of training a new model on "clean" data post-close.

- Specific Indemnity: Standard "IP non-infringement" reps are insufficient. Add specific indemnities for "training data copyright infringement" and "model disgorgement orders."

- Clean Room Protocol: If the target's IP is messy, consider an asset purchase of the team and architecture only, requiring them to retrain the model from scratch in a "clean room" environment before the deal closes.
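To sanity-check the "Retrain" Escrow in the list above, it helps to compare the 15-20% holdback against an estimated retraining cost. The sketch below is simple arithmetic under stated assumptions: the deal values are hypothetical, the $2M figure is the compute estimate quoted earlier in this article, and the cost of lost go-to-market time is ignored. Amounts are whole dollars to avoid floating-point rounding.

```python
def retrain_escrow_range(deal_value: int, low_pct: int = 15, high_pct: int = 20) -> tuple[int, int]:
    """Return the (low, high) holdback in whole dollars for a deal value."""
    return deal_value * low_pct // 100, deal_value * high_pct // 100


def escrow_covers_retrain(deal_value: int, retrain_cost: int) -> bool:
    """True if even the low end of the holdback covers the retraining estimate."""
    low, _ = retrain_escrow_range(deal_value)
    return low >= retrain_cost


RETRAIN_COST = 2_000_000  # compute-only estimate quoted in this article

# Hypothetical deal sizes, for illustration only.
for deal_value in (10_000_000, 20_000_000):
    low, high = retrain_escrow_range(deal_value)
    covered = escrow_covers_retrain(deal_value, RETRAIN_COST)
    print(f"deal ${deal_value:,}: escrow ${low:,} to ${high:,}, covers retrain: {covered}")
```

On smaller deals the low end of the percentage range can fall short of the retraining estimate, in which case a fixed-dollar floor on the escrow may be worth negotiating.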

For a deeper dive into assessing technical risks in M&A, review our guide on Technology Due Diligence Red Flags and our Cybersecurity & IP Assessment Framework.