The 'Code Quality' Fallacy in Due Diligence

Most private equity technology due diligence focuses entirely on the application layer. You hire a third-party firm to run automated scans on the codebase, checking for spaghetti code, security vulnerabilities, and open-source license compliance. The report comes back green: the code is clean, the libraries are up to date, and the IP is secure. You sign the deal.

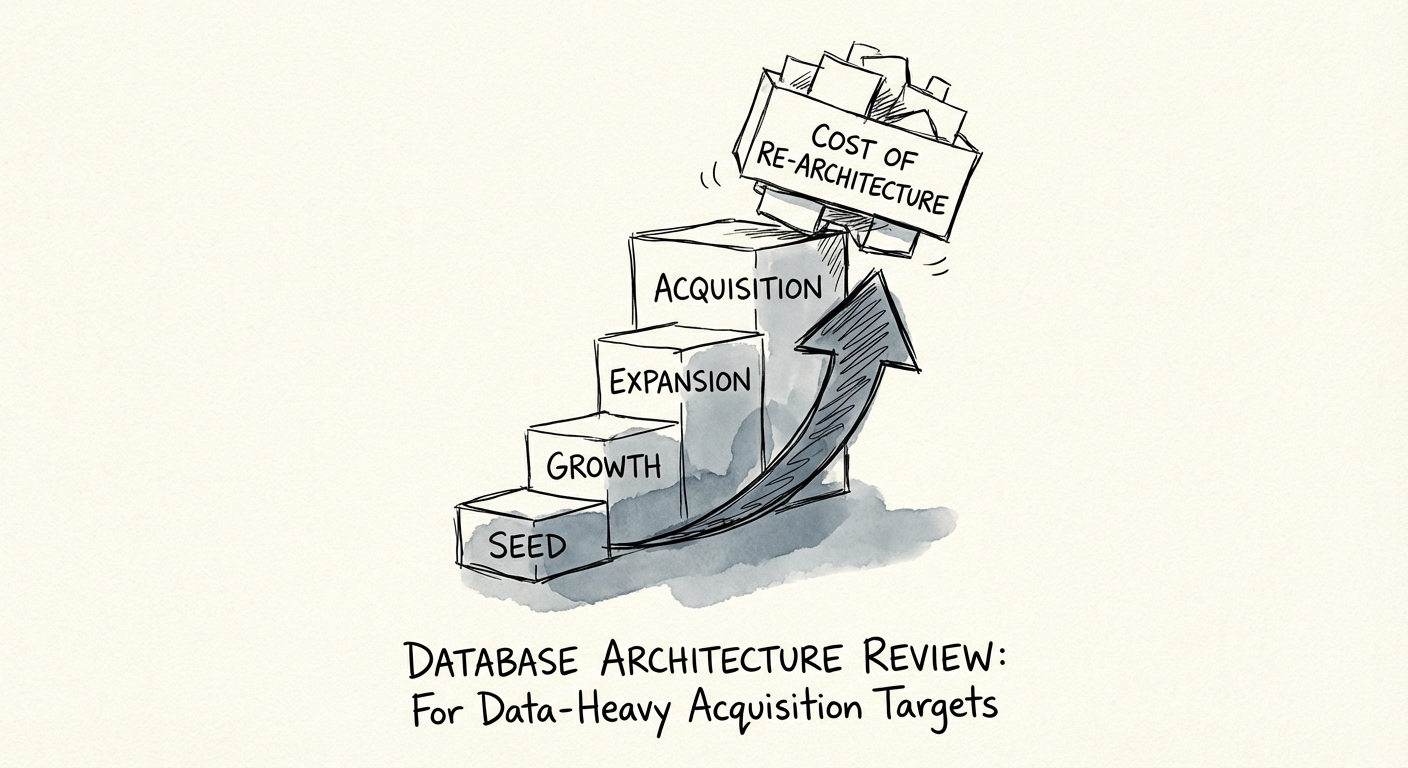

Six months later, the portfolio company lands a marquee enterprise customer. Volume doubles. Suddenly, the platform slows to a crawl. The 'clean' code is querying a database architecture that was never designed to scale beyond a Series A user base. The CTO breaks the bad news: you need a full re-platforming. The cost? $2 million and 12 months of stalled roadmap.

This is the Database Scalability Trap. While code quality is visible and measurable, database architecture flaws are often hidden 'technical debt' with a much higher interest rate. Application code can be refactored module by module, but a broken data model has 'data gravity': every service, report, and integration depends on it, so it is massive, heavy, and extremely expensive to move. In fact, a frequently cited estimate holds that 83% of data migration projects fail or exceed their budgets, often because the complexity of the underlying schema was underestimated.

The Three Most Common Database Sins

When evaluating a data-heavy target—especially in Fintech, HealthTech, or Logistics—you aren't just buying code; you are acquiring a data model. If that model is flawed, your growth equity injection will be consumed by remediation, not sales efficiency.

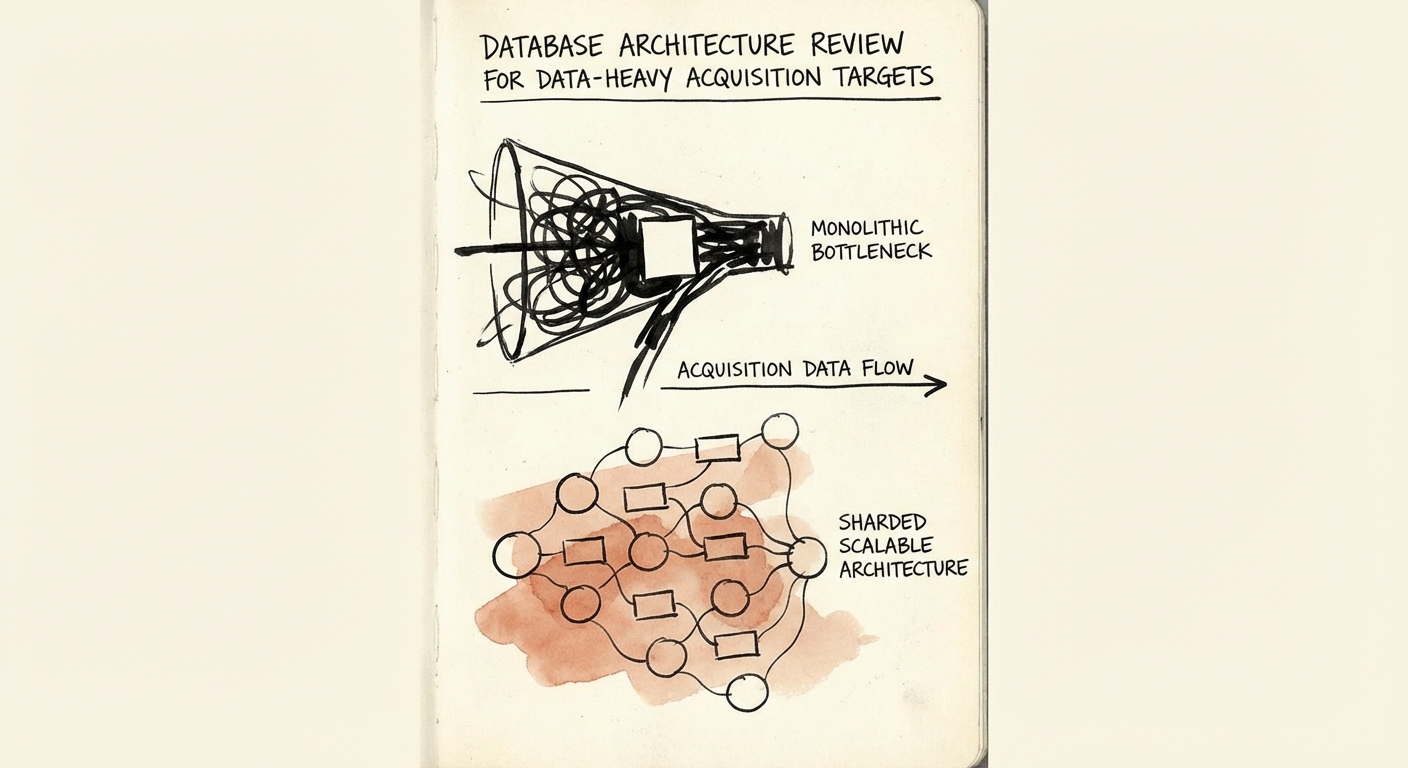

1. The Monolith Trap (Vertical Scaling Limits)

Many Series B companies are still running on a single, massive PostgreSQL or MySQL instance. This works fine for early growth, but it hits a hard physical ceiling. The symptom is distinct: the engineering team keeps upgrading to larger AWS RDS instance sizes (as far as db.r6i.24xlarge) rather than optimizing the architecture. Eventually, you run out of bigger servers. If the target lacks a sharding strategy or a read/write split, you are buying a ticking time bomb. As a rule of thumb, expect it to go off at around 5TB of data, though the exact threshold depends on the workload.
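To make the two missing pieces concrete, here is a minimal sketch of what a read/write split and a tenant sharding key look like in application code. The node names (`primary-db`, `replica-1`, `shard-0`, and so on) and both function names are hypothetical, and the routing rule is deliberately simplified: a real router would also send `SELECT ... FOR UPDATE` to the primary and account for replication lag.

```python
import hashlib
import itertools

# Hypothetical node names; a real system would hold connection pools here.
PRIMARY = "primary-db"
REPLICAS = ["replica-1", "replica-2"]
SHARDS = ["shard-0", "shard-1", "shard-2", "shard-3"]

_replica_cycle = itertools.cycle(REPLICAS)


def route(sql: str) -> str:
    """Send plain SELECTs to a replica (round-robin); everything else to the primary."""
    parts = sql.split(None, 1)
    first_word = parts[0].upper() if parts else ""
    if first_word == "SELECT":
        return next(_replica_cycle)
    return PRIMARY


def shard_for_tenant(tenant_id: str) -> str:
    """Map a tenant to a shard with a stable hash, so the mapping survives restarts."""
    digest = hashlib.sha256(tenant_id.encode("utf-8")).digest()
    return SHARDS[int.from_bytes(digest[:8], "big") % len(SHARDS)]


if __name__ == "__main__":
    print(route("SELECT * FROM invoices WHERE id = 1"))  # one of the replicas
    print(route("UPDATE invoices SET paid = 1 WHERE id = 1"))  # the primary
    print(shard_for_tenant("acme-corp"))  # always the same shard for this tenant
```

A target that has answers for both functions, whatever form they take, has at least thought about life beyond a single instance. Note that plain modulo hashing forces a large reshuffle when the shard count changes, which is one reason mature teams use a lookup table or consistent hashing instead.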

2. The 'NoSQL for Everything' Mirage

In the mid-2010s, it became trendy to use MongoDB or other NoSQL stores for everything to speed up development. If your target is using a document store for highly relational financial transactions (e.g., a ledger), you are in trouble. The 'flexibility' of a schemaless store becomes a liability when you need strict transactional guarantees and referential integrity for enterprise reporting. Remediation typically involves rewriting much of the backend to enforce data integrity that the database engine should have handled.
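As an illustration of what "integrity the database engine should have handled" means, the sketch below uses Python's built-in `sqlite3` module with a hypothetical two-table ledger. The table names, column names, and the `post_transfer` helper are assumptions made for the example, not a recommended production schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite only enforces foreign keys when asked
conn.executescript("""
CREATE TABLE accounts (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
CREATE TABLE entries (
    id           INTEGER PRIMARY KEY,
    account_id   INTEGER NOT NULL REFERENCES accounts(id),
    amount_cents INTEGER NOT NULL CHECK (amount_cents <> 0)
);
INSERT INTO accounts (id, name) VALUES (1, 'cash'), (2, 'revenue');
""")


def post_transfer(conn, debit_account, credit_account, amount_cents):
    """Post both legs of a transfer atomically: both rows land or neither does."""
    with conn:  # commits on success, rolls back if an exception is raised
        conn.execute(
            "INSERT INTO entries (account_id, amount_cents) VALUES (?, ?)",
            (debit_account, amount_cents),
        )
        conn.execute(
            "INSERT INTO entries (account_id, amount_cents) VALUES (?, ?)",
            (credit_account, -amount_cents),
        )


post_transfer(conn, 1, 2, 5000)

try:
    post_transfer(conn, 1, 999, 2500)  # account 999 does not exist
except sqlite3.IntegrityError:
    pass  # the engine rejected the second leg, so the first leg was rolled back too

total, count = conn.execute(
    "SELECT COALESCE(SUM(amount_cents), 0), COUNT(*) FROM entries"
).fetchone()
print(total, count)  # the ledger still balances, with no orphaned half-transfer
```

The point for diligence is where these rules live. In a relational ledger the constraints and the rollback are a few lines of schema; in a document store used the same way, every one of them has to be reimplemented and tested in application code.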

3. The 'Stored Procedure' Black Box

This is common in older B2B software targets. Business logic—pricing rules, permission checks, workflow triggers—is buried inside the database itself using PL/SQL or T-SQL stored procedures, rather than in the application code. This makes the database a 'black box' that is impossible to migrate to a modern cloud-native environment without a total rewrite. It locks you into legacy vendors (Oracle, MS SQL Server) and kills your ability to modernize the stack.

The Due Diligence Diagnostic: 5 Questions to Ask

Don't settle for a high-level architecture diagram. To expose these risks, you need to ask specific questions that reveal the maturity of the data layer. If the CTO cannot answer these clearly, budget for a post-close remediation project.

- "What is your read/write split strategy?" (If they send all traffic to one primary node, they aren't ready for enterprise scale.)

- "Do you use stored procedures for business logic?" (Any answer other than "No" is a red flag for scalability and migration costs.)

- "What is your sharding key?" (For SaaS platforms, if they haven't defined how they split tenants across servers, multi-tenancy is a lie.)

- "Show me your slow query log statistics for the last 30 days." (Look for queries taking >1 second. If there are many, the index strategy is broken.)

- "Have you tested a restore from backup in the last quarter?" (Scalability means nothing if data durability is theoretical.)
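For the slow query question, it helps to ask for numbers rather than a screenshot. The sketch below assumes the query durations have already been extracted into a list of seconds (the log format differs by engine, so parsing is left out); the function name, the sample values, and the 1-second default threshold are illustrative only.

```python
import math


def summarize_slow_queries(durations_s, threshold_s=1.0):
    """Summarize a sample of query durations (in seconds) against a slow-query threshold."""
    if not durations_s:
        return {"total": 0, "slow": 0, "slow_share": 0.0, "p95_s": None}
    ordered = sorted(durations_s)
    slow = sum(1 for d in ordered if d > threshold_s)
    # Nearest-rank 95th percentile: the value at position ceil(0.95 * n), 1-indexed.
    rank = math.ceil(0.95 * len(ordered))
    return {
        "total": len(ordered),
        "slow": slow,
        "slow_share": slow / len(ordered),
        "p95_s": ordered[rank - 1],
    }


if __name__ == "__main__":
    # Made-up sample durations, purely to show the shape of the output.
    sample = [0.02, 0.05, 0.10, 0.40, 1.20, 3.50, 0.08, 0.03, 0.90, 2.10]
    print(summarize_slow_queries(sample))
```

Tracking the slow share and the 95th percentile month over month shows whether the index strategy is keeping up with data growth or quietly falling behind.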

Identifying these risks pre-deal allows you to factor the $1M-$3M remediation cost into your valuation or required working capital adjustments. Ignore them, and you'll pay the price in the first board meeting after the crash.

For deeper due diligence frameworks, review our guides on technical due diligence red flags and quantifying technical debt in dollar terms.