The 'Lift and Shift' Liability: Why Your Target's Data Lake is a Swamp

In the rush to exit on-premise Hadoop clusters or legacy cloud data warehouses, many lower-middle-market companies treat Databricks as a dumping ground rather than a unified data platform. The result is a specific flavor of technical debt that is invisible on the P&L but devastating to post-close EBITDA: Architectural Inefficiency.

The primary red flag in due diligence is the "Interactive Default." In a well-architected environment, production pipelines run on Jobs Compute clusters, which are ephemeral, cheaper, and terminate immediately after task completion. In debt-laden environments, we consistently see production ETL (Extract, Transform, Load) workloads running on All-Purpose (Interactive) Compute clusters.

Why does this matter? Databricks prices its "DBUs" (Databricks Units) differently based on workload type. Interactive clusters—designed for data scientists to write code in real-time—cost roughly 4x more per minute than automated Job clusters. When a target company lifts and shifts legacy code without refactoring it for the Databricks control plane, they effectively pay a "laziness tax" of 300% on every gigabyte processed. During due diligence, request a breakdown of DBU consumption by cluster type. If "All-Purpose" accounts for more than 20% of total compute in a mature company, you are buying a remediation project, not a platform.

The DBU Bleed: Quantifying the 'Idle Cluster' Tax

The second major source of technical debt in Databricks implementations is Cluster Sprawl and Idle Time. Unlike traditional data warehouses (like Snowflake) which have mastered auto-suspend, Databricks environments—especially those managed by smaller teams—often rely on manual cluster management or poorly configured auto-termination policies.

Our audits of portfolio companies utilizing Databricks reveal that approximately 15% to 20% of total monthly spend is attributed to clusters running with zero active workloads. This happens when data engineering teams create "permanent" clusters to avoid startup latency (roughly 3-5 minutes), essentially treating cloud infrastructure like a sunk-cost on-premise server.

Furthermore, technical debt manifests in oversized driver nodes. In legacy implementations, engineers often over-provision cluster nodes "just to be safe," selecting memory-optimized instances for CPU-bound tasks. This creates a linear correlation between revenue growth and infrastructure cost—a unit economics disaster. When evaluating a target, calculate the DBU-to-Revenue Ratio over the last 12 months. In a healthy SaaS or data-enabled services company, this ratio should decrease as economies of scale kick in. If it remains flat or increases, the platform is suffering from significant technical debt.

The Unity Catalog 'Refactoring Cliff'

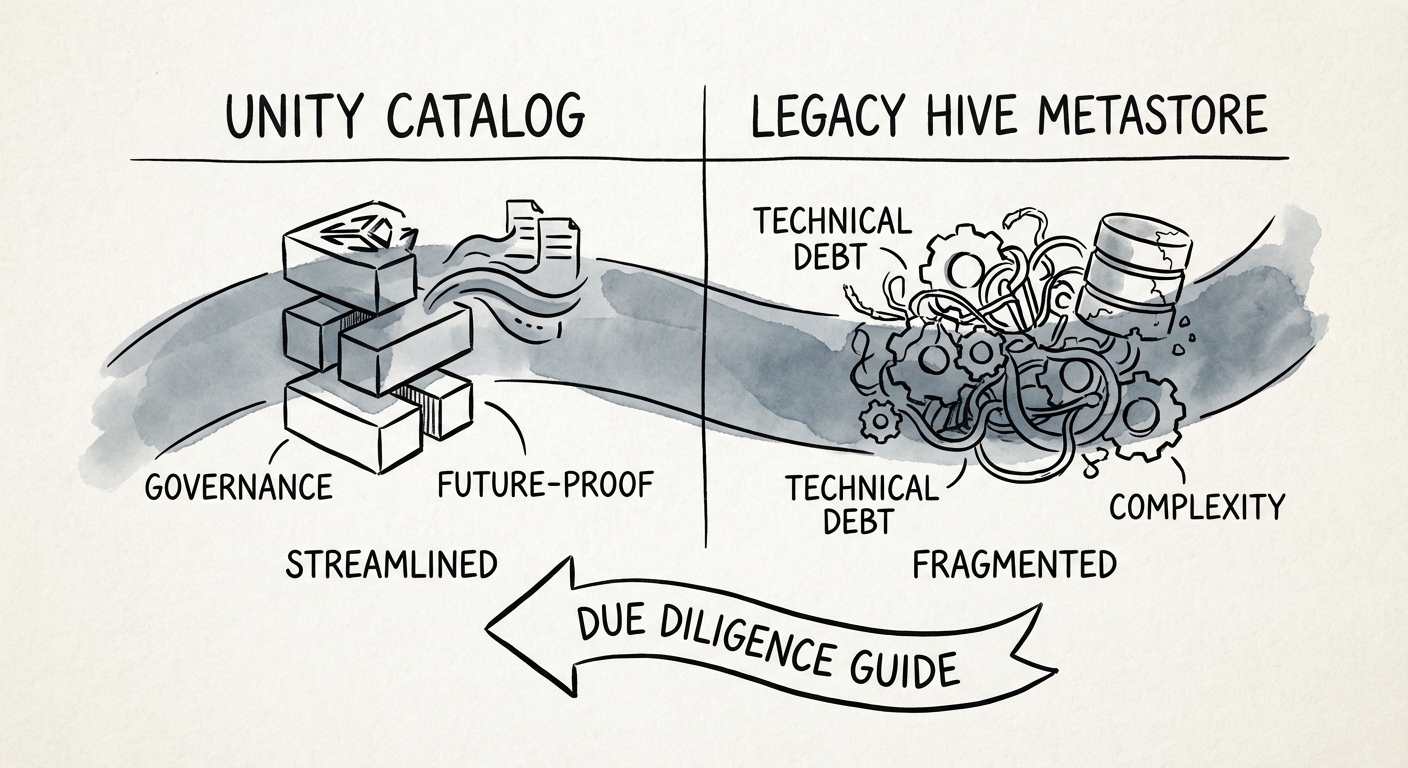

The most significant hidden liability in 2026 Databricks diligence is the migration status to Unity Catalog (UC). Databricks has fundamentally shifted its governance model from the legacy Hive Metastore (HMS) to Unity Catalog. While UC offers superior governance, lineage, and security, the migration is not automatic.

Targets that still rely on legacy Hive Metastore or direct file path access (mounting S3/ADLS buckets directly to the workspace) are sitting on a CapEx bomb. Migrating to Unity Catalog requires:

- Rewriting hard-coded file paths in thousands of notebooks.

- Refactoring permissions models from the workspace level to the account level.

- Upgrading cluster runtimes and testing for breaking changes.

We estimate the cost of this migration for a mid-sized data organization ($20M-$50M ARR) to be between $150,000 and $300,000 in services fees or lost engineering capacity. If your target has not yet migrated to Unity Catalog, you must underwrite this cost in your 100-day plan. Treat the absence of Unity Catalog not just as a feature gap, but as measurable technical debt that will delay any advanced AI/ML initiatives by at least two quarters.