The Ingestion Mirage: Paying for Digital Exhaust

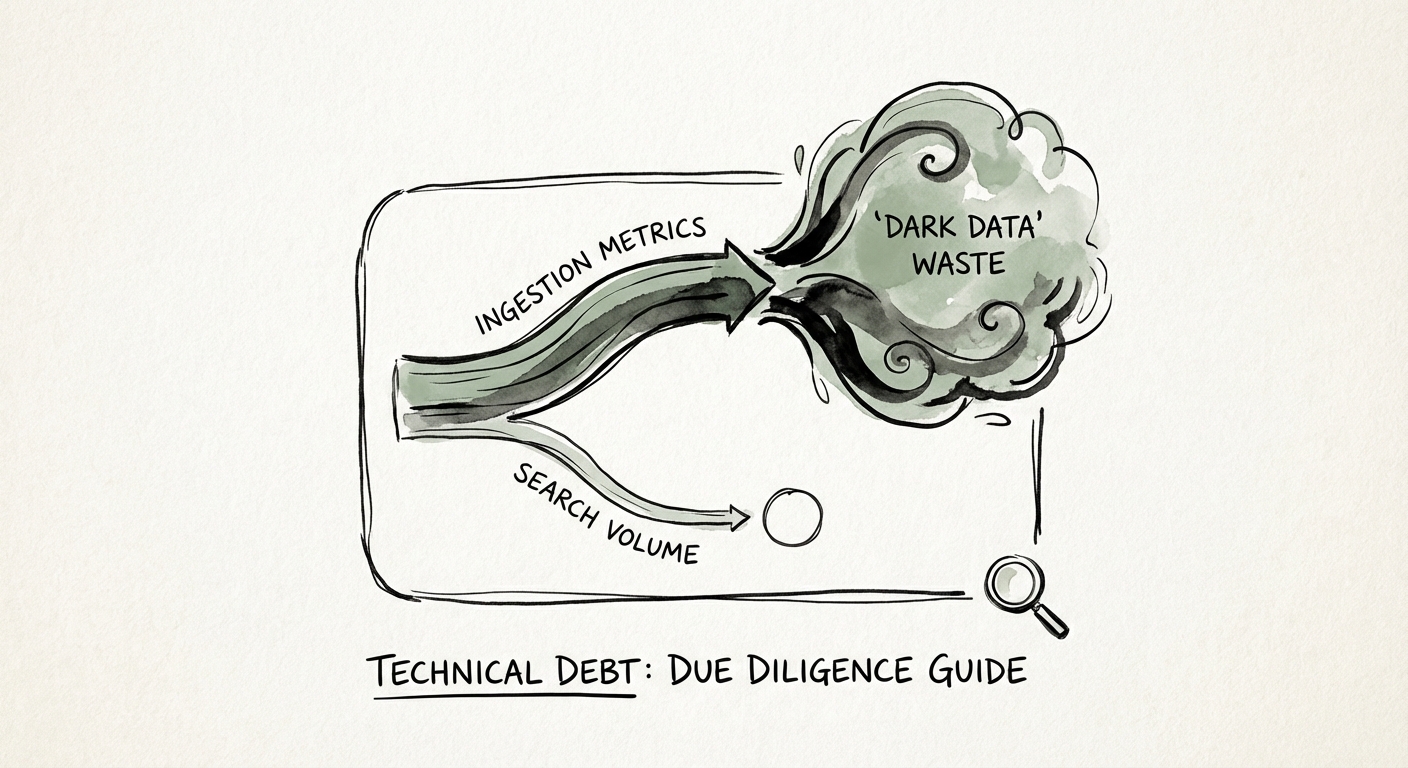

In the world of technology due diligence, Splunk is often treated as a badge of honor—a sign that the target company takes security and observability seriously. The reality, however, is often a sprawling, seven-figure liability hidden in plain sight. Our audits consistently reveal that 55% of data ingested into Splunk is 'Dark Data'—logs that are indexed, stored, and paid for, but never once queried by a human or an automated alert.

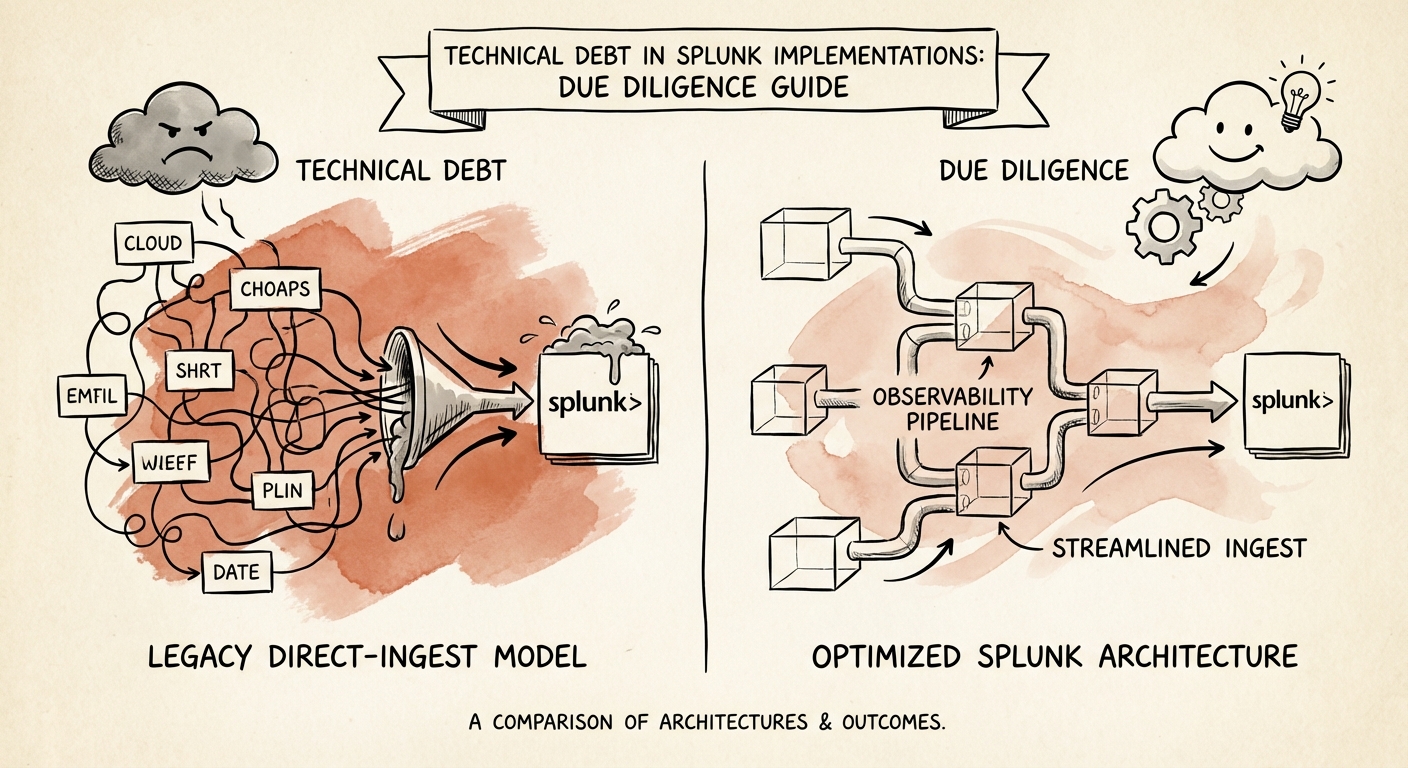

This isn't just operational inefficiency; it's direct EBITDA erosion. Under legacy "Ingest Pricing" models, you pay a premium to index and store debug logs, verbose error messages, and redundant network telemetry that provide zero business value. I recently reviewed a SaaS platform where 40% of daily ingest volume came from a single chatty firewall whose logging level had been left on "Debug" for three years. That mistake cost them $180,000 annually in licensing and infrastructure.
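To put a figure like that in context, it helps to back out what it implies about the whole bill. The following is a back-of-envelope sketch in Python, assuming cost scales linearly with ingest volume (real contracts are tiered, so treat the result as an order of magnitude). The function name is illustrative, not part of any Splunk tooling.

```python
def implied_total_spend(waste_cost: float, waste_share: float) -> float:
    """Annual ingest-linked spend implied by one source's cost and its share of volume.

    Assumes cost is proportional to ingest volume, which is a simplification.
    """
    if not 0 < waste_share <= 1:
        raise ValueError("waste_share must be in (0, 1]")
    return waste_cost / waste_share


# Figures from the anecdote above: $180,000 a year for 40% of daily ingest.
total = implied_total_spend(180_000, 0.40)
print(f"Implied ingest-linked spend: ${total:,.0f} per year")
```

Under that linearity assumption, a single misconfigured source accounting for 40% of volume points to a total ingest-linked spend in the region of $450,000 a year.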

The Cisco Renewal Risk

The financial risk is compounded by Cisco's acquisition of Splunk. Market analysis indicates that legacy contract renewals are seeing price increases of 20-30% as customers are pushed toward "Workload Pricing" (vCPU-based) or forced onto new tiers. If your target is currently enjoying a legacy "sweetheart deal," that discount will likely evaporate upon change of control. During diligence, you must model the Splunk line item not at its current run rate, but at the impending "Cisco Market Rate."
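One way to model the line item is as a range rather than a point estimate. The sketch below applies the 20-30% uplift cited above to a current run rate. The $500,000 input is a hypothetical placeholder, and the function name is illustrative.

```python
def renewal_scenarios(current_annual_cost: float,
                      low_uplift: float = 0.20,
                      high_uplift: float = 0.30) -> tuple:
    """Return (low, high) post-renewal annual cost for a given uplift range."""
    if current_annual_cost < 0:
        raise ValueError("current_annual_cost must be non-negative")
    if low_uplift > high_uplift:
        raise ValueError("low_uplift must not exceed high_uplift")
    return (current_annual_cost * (1 + low_uplift),
            current_annual_cost * (1 + high_uplift))


# Hypothetical run rate; replace with the target's actual Splunk spend.
low, high = renewal_scenarios(500_000)
print(f"Modeled renewal range: ${low:,.0f} to ${high:,.0f} per year")
```

Carrying the high end of the range into the deal model is the conservative choice when the contract is a legacy deal that may not survive a change of control.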

The SPL Spaghetti: Operational Debt in the Search Bar

Beyond the financial waste of over-ingestion lies the insidious problem of "SPL (Search Processing Language) Debt." Splunk is powerful because it allows users to do almost anything with data at search time. This is also its Achilles' heel. In immature environments, we find core dashboards relying on massive, CPU-hungry regular expressions (Regex) executed every time a user loads a page, rather than extracting fields efficiently at index time or using data models.

This is the difference between a query that runs in 2 seconds and one that runs in 2 minutes. When a target company's security operations center (SOC) complains about "slow dashboards," the root cause is rarely the hardware; it's the code. Correctness suffers too. We frequently see the "join" command used improperly: its subsearch is capped (at 50,000 results by default), and anything past the cap is dropped without an error. This means your target's security alerts might be technically functioning but blind to any threat that falls outside the truncated result set.
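The effect of that truncation is easy to underestimate, so here is a toy simulation in Python. It is not Splunk code: `capped_join`, the sample data, and the field names are all hypothetical, and the 50,000 cap is simply the figure quoted above (the real limit is configurable).

```python
SUBSEARCH_CAP = 50_000  # figure cited in the text; treated here as an assumption


def capped_join(main_events, sub_events, key, cap=SUBSEARCH_CAP):
    """Inner-join main_events against only the first `cap` rows of sub_events."""
    lookup = {}
    for row in sub_events[:cap]:  # rows past the cap are dropped without any error
        lookup.setdefault(row[key], row)
    return [{**event, **lookup[event[key]]}
            for event in main_events if event[key] in lookup]


# Hypothetical threat-intel list of 60,000 rows; the one critical entry
# sits at position 55,000, beyond the cap.
intel = [{"ip": f"10.0.{i // 256}.{i % 256}", "threat": "low"}
         for i in range(60_000)]
intel[55_000]["threat"] = "critical"
bad_ip = intel[55_000]["ip"]

events = [{"ip": bad_ip, "action": "login"}]

print(len(capped_join(events, intel, "ip")))            # 0: the match is lost
print(len(capped_join(events, intel, "ip", cap=None)))  # 1: found without the cap
```

The capped version returns an empty result with no warning, which is exactly how an alert built on it would behave: it runs on schedule, reports nothing, and looks healthy.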

The 'Hero' Dependency

This technical debt creates a dangerous personnel dependency. In 80% of the environments we audit, the entire Splunk architecture is held together by one "Hero Architect" who understands the labyrinth of unoptimized saved searches and lookup tables. If that person leaves post-acquisition—and they often do—the system doesn't just degrade; it stops delivering value entirely. You aren't just buying software; you're buying a customized, undocumented Rube Goldberg machine.

The Audit: 5 Questions to Ask Before Closing

You don't need to be a Splunk Architect to spot the rot. Ask these five questions during your technical diligence sessions to reveal the true state of the implementation:

- "What is your Ingest-to-Search Ratio?" If they can't answer this, they don't know what they're paying for. In a healthy environment, a significant portion of what is ingested is actually searched. If they ingest 1 TB/day but only ever search 50 GB of it (about 5%), you are buying waste.

- "Show me the skipped searches in the scheduler logs (index=_internal sourcetype=scheduler status=skipped)." High numbers of skipped scheduled searches indicate an overloaded system where alerts are failing silently. This is a massive security and compliance risk.

- "Are you using Workload or Ingest pricing, and when does the contract expire?" If they are on Ingest pricing with a contract expiring in under 12 months, add a 30% buffer to the Splunk line item in your opex model.

- "How many 'Orphaned' Knowledge Objects exist in the system?" Orphaned objects (reports/alerts owned by deleted users) break silently. A high count suggests a lack of governance and a messy "founder extraction" process.

- "Do you use an observability pipeline (like Cribl) before Splunk?" A "No" answer means they lack the control plane to filter data before paying for it, signaling a low-maturity operation.
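Two of the questions above reduce to simple arithmetic, which can be sketched as a small screening helper. This is a minimal illustration in Python, not a diligence tool: the class, field names, and the $400,000 annual cost are hypothetical, 1 TB is treated as 1,000 GB, and it assumes the target can actually report how much of its daily ingest is ever searched.

```python
from dataclasses import dataclass


@dataclass
class SplunkDiligenceInputs:
    daily_ingest_gb: float    # volume indexed per day
    daily_searched_gb: float  # portion of that volume ever touched by a search
    pricing_model: str        # "ingest" or "workload"
    months_to_expiry: int     # months until the current contract ends
    annual_cost: float        # current annual Splunk spend


def search_ratio(inputs: SplunkDiligenceInputs) -> float:
    """Fraction of ingested data that is actually searched."""
    if inputs.daily_ingest_gb <= 0:
        raise ValueError("daily_ingest_gb must be positive")
    return inputs.daily_searched_gb / inputs.daily_ingest_gb


def modeled_opex(inputs: SplunkDiligenceInputs, buffer: float = 0.30) -> float:
    """Apply the renewal buffer when on Ingest pricing with under 12 months left."""
    if inputs.pricing_model == "ingest" and inputs.months_to_expiry < 12:
        return inputs.annual_cost * (1 + buffer)
    return inputs.annual_cost


# 1 TB/day ingested and 50 GB searched come from the example above;
# the contract details and cost are placeholders.
target = SplunkDiligenceInputs(1_000, 50, "ingest", 8, 400_000)
print(f"Search ratio: {search_ratio(target):.0%}")
print(f"Modeled annual opex: ${modeled_opex(target):,.0f}")
```

A ratio in the single digits combined with a buffered opex figure gives you two concrete numbers to bring to the negotiation, rather than a general impression of waste.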