The 'Lakehouse' Arbitrage is Dead. Welcome to the 'Data Intelligence' Era.

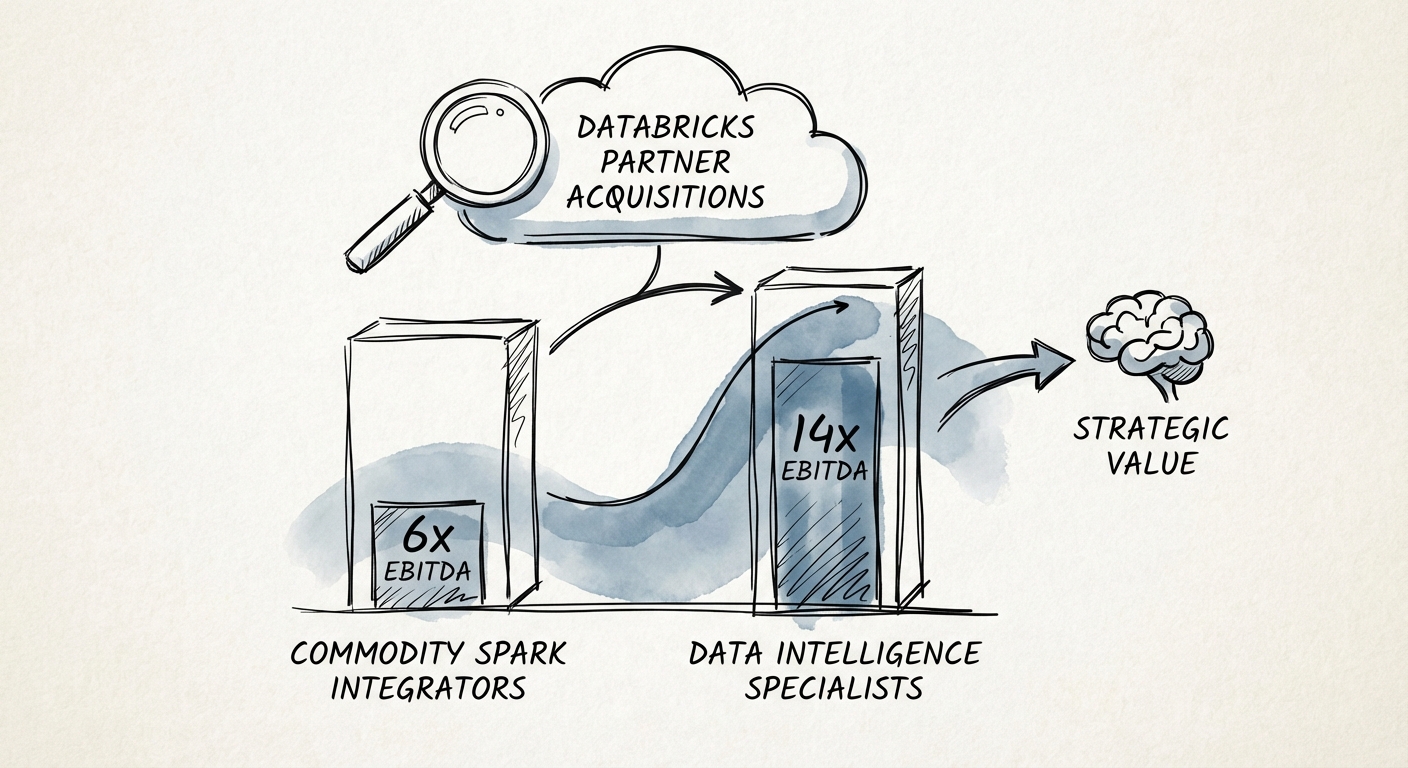

For the last five years, the private equity playbook for data consultancies was simple: buy a firm migrating on-prem Hadoop clusters to the cloud, slap a "Modern Data Stack" label on it, and ride the consumption wave. That trade is over. With Databricks hitting a $134 billion valuation in its Series L round and passing $4.8 billion in ARR, the ecosystem has bifurcated. The market no longer rewards "Spark jobs in the cloud."

In 2026, we see a massive valuation gap emerging. On one side, we have the Commodity Integrators—firms still focused on basic ETL, Delta Lake migrations, and "lift and shift" engineering. These assets are trading at 6x-8x EBITDA. They are effectively staffing agencies for data engineering.

On the other side are the Data Intelligence Specialists. These firms have pivoted to the "Agentic AI" era, using Mosaic AI (the platform that grew out of the MosaicML acquisition) and Unity Catalog to build proprietary, industry-specific solutions. They aren't just moving data; they are building the governance and serving layer for enterprise AI. These firms command 12x-15x EBITDA multiples, with strategic acquirers like Accenture and Deloitte paying even higher premiums for Brickbuilder-validated IP.

The Valuation Driver: Consumption Influence vs. Billable Hours

The single most critical metric in 2026 isn't billable utilization; it's the Consumption Influence Ratio: how much Databricks consumption a partner's work sustains after handover, relative to what it generated during implementation. Databricks' entire business model is built on consumption of Databricks Units (DBUs), so PE buyers are scrutinizing whether a partner's work sustains consumption or merely spikes it during implementation.

Partners who build low-quality pipelines that fail after handover create "consumption churn," and Databricks' partner managers actively steer leads away from these firms. In contrast, partners who implement Unity Catalog correctly embed themselves in the client's long-term governance strategy, driving durable DBU growth. This "sticky consumption" is what justifies a 14x multiple.
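To make the metric concrete, here is a minimal Python sketch. The framework does not publish a formula, so the definition used here is an assumption: mean monthly DBUs over a fixed window after handover, divided by mean monthly DBUs during implementation. The function name, the six-month default window, and the sample series are all hypothetical.

```python
from statistics import mean

def consumption_influence_ratio(monthly_dbus: list[float],
                                handover_month: int,
                                window: int = 6) -> float:
    """Hypothetical definition: mean monthly DBUs over the `window` months after
    handover, divided by mean monthly DBUs during implementation
    (every month before index `handover_month`)."""
    implementation = monthly_dbus[:handover_month]
    post_handover = monthly_dbus[handover_month:handover_month + window]
    if not implementation or not post_handover:
        raise ValueError("need at least one month on each side of handover")
    baseline = mean(implementation)
    if baseline == 0:
        raise ValueError("no DBU consumption during implementation")
    return mean(post_handover) / baseline

# Hypothetical monthly DBU series; handover after the third month in both cases.
sticky = [100, 200, 300, 320, 350, 380, 400, 420, 450]
spiky = [100, 400, 500, 150, 100, 80, 60, 50, 40]
print(round(consumption_influence_ratio(sticky, 3), 2))  # 1.93
print(round(consumption_influence_ratio(spiky, 3), 2))   # 0.24
```

A ratio at or above 1.0 suggests the work kept consumption growing after the partner left; a ratio well below 1.0 is the spike-and-drop pattern that creates consumption churn.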

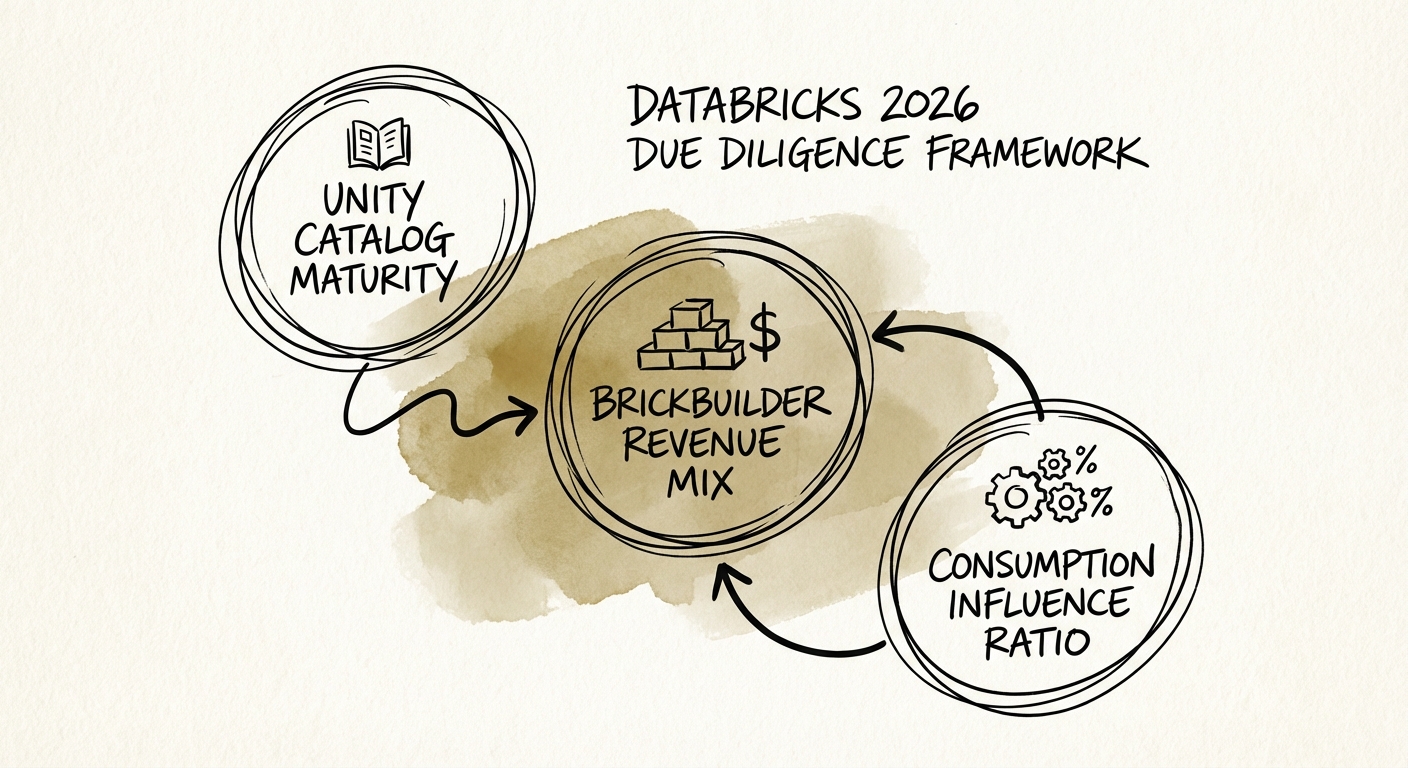

The 2026 Databricks Partner Due Diligence Framework

When evaluating a Databricks partner, standard financial diligence will miss the technical risks that destroy exit value. You need to audit the nature of their revenue against the Databricks product roadmap.

1. The 'Unity Catalog' Maturity Index

If the target firm is still deploying the legacy Hive Metastore or unmanaged tables, it is building technical debt. The 2026 standard is Unity Catalog, a prerequisite for most advanced features, including Lakehouse Federation and Mosaic AI.

The Diligence Question: "What percentage of your active client base is fully migrated to Unity Catalog?"

- < 30%: Red Flag. This firm is deploying legacy architectures that will require expensive remediation.

- > 70%: Premium Asset. They are aligned with Databricks' strategic direction (Governance).
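One way to apply the index across a target's client inventory is sketched below in Python. The `ClientAccount` record and its `fully_on_unity_catalog` flag are hypothetical; in practice the data would come from the target's own client list, verified against workspace metadata. The framework only rates the tails, so the sketch returns an explicit "unrated" label for the 30%-70% band rather than guessing a verdict.

```python
from dataclasses import dataclass

@dataclass
class ClientAccount:
    # Hypothetical record from the target's client inventory.
    name: str
    fully_on_unity_catalog: bool

def unity_catalog_maturity(clients: list[ClientAccount]) -> tuple[float, str]:
    """Return (share of clients fully on Unity Catalog, diligence label)."""
    if not clients:
        raise ValueError("client list is empty")
    migrated = sum(1 for c in clients if c.fully_on_unity_catalog)
    share = migrated / len(clients)
    if share < 0.30:
        label = "red_flag"        # legacy architectures, remediation risk
    elif share > 0.70:
        label = "premium_asset"   # aligned with the governance roadmap
    else:
        label = "unrated"         # the framework sets no verdict for 30%-70%
    return share, label

# Hypothetical inventory: 8 of 10 active clients fully migrated.
clients = [ClientAccount(f"client_{i}", i < 8) for i in range(10)]
print(unity_catalog_maturity(clients))  # (0.8, 'premium_asset')
```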

2. The 'Brickbuilder' Revenue Mix

Databricks' Brickbuilder Solutions program validates partner IP for specific industry use cases (e.g., "Retail Demand Forecasting" or "Healthcare Interoperability"). This is the difference between a "Body Shop" and a "Solution Provider."

The Diligence Question: "What percentage of revenue is attached to validated Brickbuilder Solutions vs. generic T&M?"

- 0% (No Brickbuilders): The firm has no defensible IP. Expect a 6x multiple.

- 20%+ (Validated Solutions): The firm has repeatable assets that compress sales cycles and improve margins. This justifies a 10x+ multiple.
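The revenue-mix question reduces to a share calculation. The Python sketch below assumes revenue can be split into named lines and that the diligence team knows which lines map to validated Brickbuilder Solutions; the line names and figures are hypothetical. As with the Unity Catalog index, the framework gives reference points only at 0% and 20%+, so anything in between is reported as such rather than assigned a multiple.

```python
def brickbuilder_mix(revenue_by_line: dict[str, float],
                     brickbuilder_lines: set[str]) -> tuple[float, str]:
    """Share of revenue attached to validated Brickbuilder Solutions, plus the
    reference multiple from the framework where one applies."""
    total = sum(revenue_by_line.values())
    if total <= 0:
        raise ValueError("total revenue must be positive")
    attached = sum(amount for line, amount in revenue_by_line.items()
                   if line in brickbuilder_lines)
    share = attached / total
    if share == 0:
        band = "no defensible IP (~6x)"
    elif share >= 0.20:
        band = "validated solutions (10x+)"
    else:
        band = "between reference points"
    return share, band

# Hypothetical revenue split, in $M.
revenue = {"retail_demand_forecasting": 3.0, "generic_t_and_m": 7.0}
print(brickbuilder_mix(revenue, {"retail_demand_forecasting"}))
# (0.3, 'validated solutions (10x+)')
```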

3. The 'MosaicML' vs. 'OpenAI Wrapper' Test

With the $1.3B acquisition of MosaicML, Databricks made a clear bet on enterprises training and fine-tuning their own models, rather than just calling external APIs. Partners who only build "wrappers" around OpenAI are vulnerable to commoditization.

The Diligence Question: "Does the engineering team have production experience with Mosaic AI Model Training, or are they just using LangChain with GPT-4?"

A partner capable of helping a Fortune 500 company fine-tune a Llama 3 model on proprietary data using Databricks infrastructure is a rare, strategic asset. This capability commands the highest premium in the current market.

The 'Iceberg' Risk: Assessing Vendor Lock-In

The acquisition of Tabular (founded by the original creators of Apache Iceberg) by Databricks in 2024 fundamentally changed the storage wars. It reinforced Databricks' move toward Delta Lake / Iceberg interoperability, already under way with UniForm. A partner who is dogmatic about "Delta only" and refuses to support Iceberg formats may find itself locked out of modern, open-architecture enterprise deals.

Red Flag: The 'Hero Architect' Dependency

In Databricks consultancies, we often see the "Hero Architect" problem. A single technical leader understands the complex interplay of the Photon engine, Auto Loader, and serverless compute. If this person leaves post-close, the firm's ability to deliver performant (cost-effective) solutions evaporates.

The Test: Request a code audit of the firm's last 5 major implementations. Are the notebooks modular, documented, and CI/CD integrated? Or are they 5,000-line monolithic scripts written by the "Hero"? If it's the latter, you aren't buying a company; you're renting a person who is about to cash out.
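A first-pass version of this audit can be scripted before engineers read any code. The Python sketch below walks an exported repository, flags source files above the 5,000-line mark, and checks the repository root for a few common CI/CD markers. The file suffixes and the marker list (GitHub Actions, GitLab CI, Azure Pipelines, and a Databricks Asset Bundles `databricks.yml`) are assumptions about how the target stores its work; treat the output as a screening heuristic, not a substitute for reading the notebooks.

```python
from pathlib import Path

# Assumed file types and CI markers; adjust to the target's tooling.
SOURCE_SUFFIXES = {".py", ".sql", ".scala"}
CI_MARKERS = [".github/workflows", ".gitlab-ci.yml", "azure-pipelines.yml", "databricks.yml"]

def audit_repo(root: str, max_lines: int = 5000) -> dict:
    """Flag oversized source files and report whether any CI/CD marker
    exists at the repository root."""
    base = Path(root)
    oversized = []
    for path in base.rglob("*"):
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            with path.open(encoding="utf-8", errors="replace") as fh:
                line_count = sum(1 for _ in fh)
            if line_count > max_lines:
                oversized.append((path.relative_to(base).as_posix(), line_count))
    has_ci = any((base / marker).exists() for marker in CI_MARKERS)
    return {"oversized_files": sorted(oversized), "has_ci": has_ci}
```

Notebooks exported from Databricks in source format are plain `.py`, `.sql`, or `.scala` files, which is why the sketch counts lines rather than parsing `.ipynb` JSON. Running it over each of the five implementations gives a quick read on whether the work is modular or concentrated in a few monolithic scripts.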

The Exit Strategy: Who is the Buyer?

The exit landscape for Databricks partners is robust but specific:

- Global SIs (Accenture, Deloitte): Buying for scale and Brickbuilder IP. They want "acqui-hires" of certified talent to feed their massive managed services contracts.

- Boutique Consolidators: Private equity-backed platforms rolling up regional data firms to build a "mid-market data powerhouse."

- Databricks and Databricks Ventures: Databricks occasionally acquires companies that fill a specific product gap (as with Tabular), and Databricks Ventures invests in ecosystem companies, but neither route is common for services firms.

To maximize value, the target must position itself not as a "services" firm, but as a "capability" that unlocks the Databricks Data Intelligence Platform. If they can demonstrate that they drive Net Revenue Retention (NRR) for Databricks, they become indispensable.
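NRR itself is simple arithmetic: for a fixed cohort of accounts, revenue at the end of a period divided by the same accounts' revenue at the start, so expansion raises it and contraction or churn lowers it. Below is a minimal Python sketch applied to the Databricks spend of a partner's client cohort; the account names and dollar figures are hypothetical, and new logos won during the period are deliberately excluded.

```python
def net_revenue_retention(start: dict[str, float], end: dict[str, float]) -> float:
    """Cohort NRR: end-period revenue from accounts present at the start,
    divided by those accounts' start-period revenue. New accounts are ignored."""
    baseline = sum(start.values())
    if baseline <= 0:
        raise ValueError("start-period revenue must be positive")
    # Churned accounts are missing from `end` and count as 0.
    retained = sum(end.get(account, 0.0) for account in start)
    return retained / baseline

# Hypothetical annual Databricks spend ($K) across a partner's client cohort.
start = {"acme": 100.0, "globex": 100.0, "initech": 100.0}
end = {"acme": 200.0, "globex": 120.0, "umbrella": 500.0}  # initech churned; umbrella is new
print(f"{net_revenue_retention(start, end):.1%}")  # 106.7%
```

Excluding new logos is the design choice that matters here: it isolates whether the partner's existing accounts keep expanding, which is the "sticky consumption" signal rather than new-business growth.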