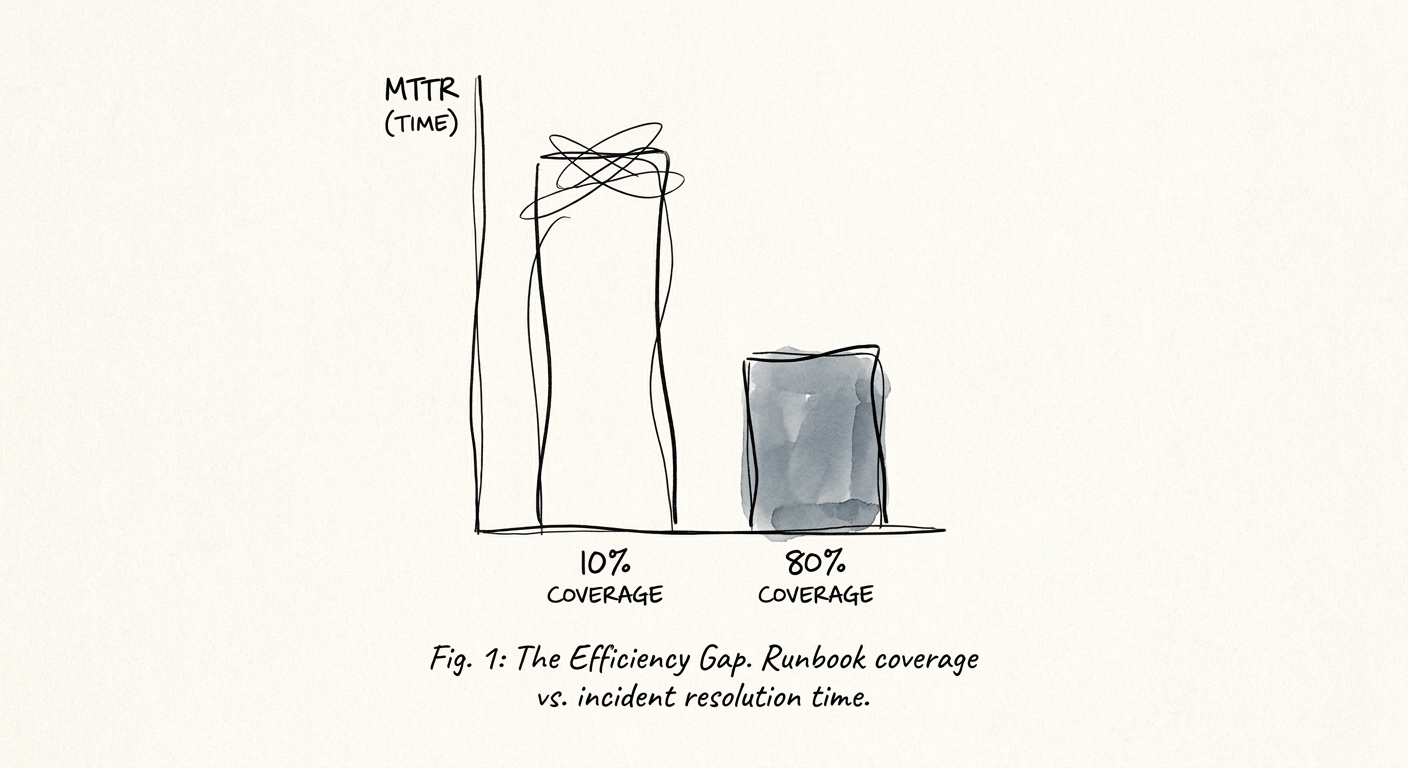

Every undocumented IT incident burns at least 15 minutes, and roughly $210,840 at benchmark enterprise downtime rates, before a single engineer even looks at a log file. That is the hidden cost of "hero culture" in modern software operations. When founders scale past $15M ARR, they obsess over driving down Mean Time to Resolution (MTTR). They buy expensive observability suites, restructure on-call rotations, and proudly track dashboard metrics in front of their board. But MTTR is a lagging indicator. The leading indicator that actually predicts operational resilience, and the one private equity operating partners scrutinize most heavily during technical due diligence, is runbook coverage.

The Valuation Danger of Hero Culture

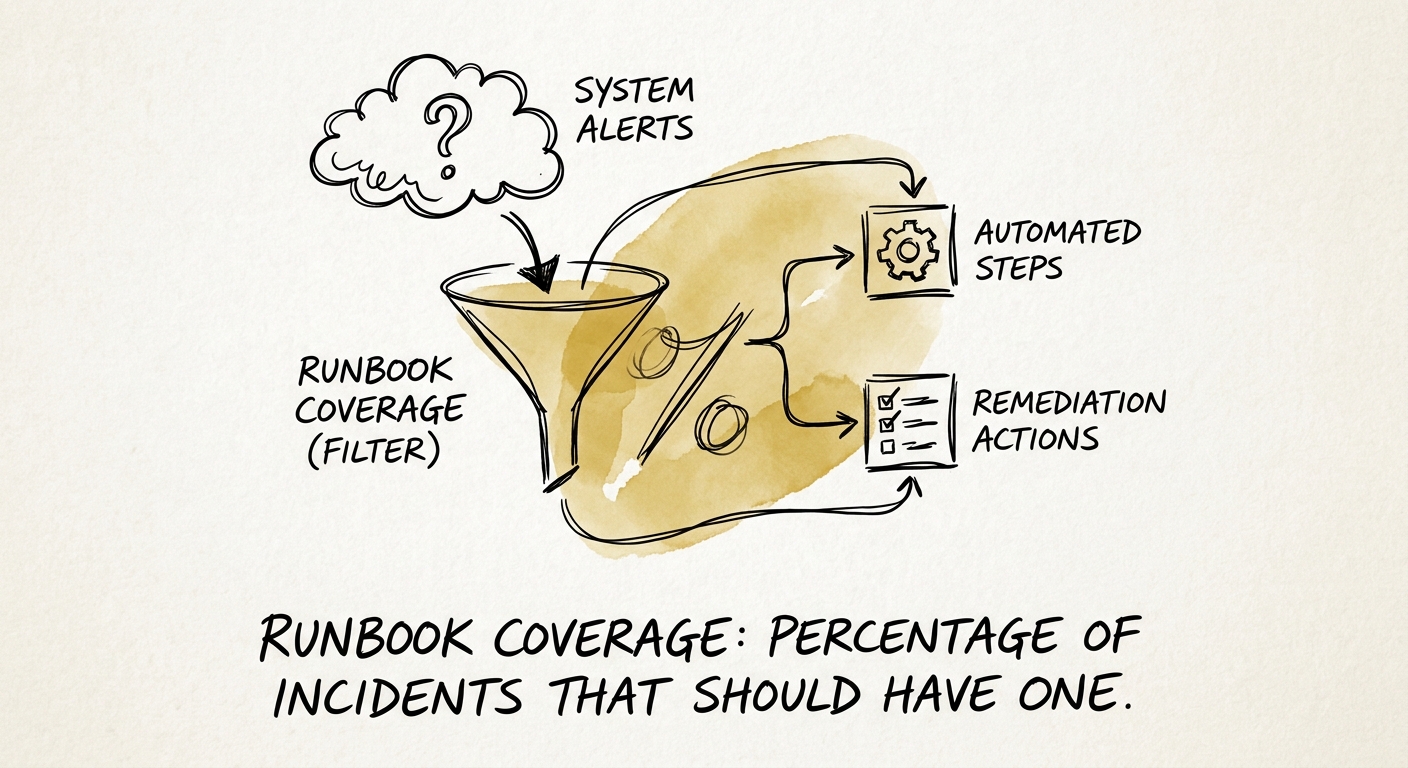

Runbook coverage is simply the percentage of P1 and P2 incidents that are mapped to a predefined, executable workflow. If an alert fires and your engineer's first move is to ask in Slack, "Who knows how this database cluster actually works?" then your operational process is fundamentally broken. In our last engagement, we audited a $30M B2B SaaS target boasting an "elite" 45-minute MTTR. On paper, they looked incredibly efficient. But their runbook coverage was hovering at a disastrous 12%. When we dug into the data, we found that 80% of high-severity incidents required the technical co-founder to personally triage the infrastructure.
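The definition above reduces to a simple ratio. The sketch below computes it from a list of incident records, along with the share of high-severity incidents that depended on one named person (the co-founder pattern from the audit). The `Incident` fields, the severity labels, and the function names are hypothetical; map them onto whatever your incident management platform actually exports.

```python
from dataclasses import dataclass
from typing import List, Optional

# Severities that count toward runbook coverage, per the definition above.
HIGH_SEVERITY = {"P1", "P2"}


@dataclass
class Incident:
    # Hypothetical record shape; not any specific vendor's export schema.
    incident_id: str
    severity: str                # e.g. "P1", "P2", "P3"
    runbook_id: Optional[str]    # None when no predefined runbook was mapped
    triaged_by: str              # who actually handled triage


def _high_severity(incidents: List[Incident]) -> List[Incident]:
    high = [i for i in incidents if i.severity in HIGH_SEVERITY]
    if not high:
        raise ValueError("no P1/P2 incidents: coverage is undefined")
    return high


def runbook_coverage(incidents: List[Incident]) -> float:
    """Percentage of P1/P2 incidents mapped to a predefined runbook."""
    high = _high_severity(incidents)
    mapped = sum(1 for i in high if i.runbook_id is not None)
    return 100.0 * mapped / len(high)


def key_person_share(incidents: List[Incident], person: str) -> float:
    """Percentage of P1/P2 incidents triaged by one specific person."""
    high = _high_severity(incidents)
    handled = sum(1 for i in high if i.triaged_by == person)
    return 100.0 * handled / len(high)


incidents = [
    Incident("INC-1", "P1", "rb-db-failover", "oncall-a"),
    Incident("INC-2", "P1", None, "cofounder"),
    Incident("INC-3", "P2", None, "cofounder"),
    Incident("INC-4", "P2", None, "cofounder"),
    Incident("INC-5", "P3", "rb-disk-cleanup", "oncall-b"),  # P3: excluded from coverage
]
print(runbook_coverage(incidents))               # 25.0
print(key_person_share(incidents, "cofounder"))  # 75.0
```

Tracking the two numbers side by side is what exposes the risky combination: low coverage paired with a high key-person share.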

Their impressive MTTR wasn't a product of mature engineering processes; it was a precarious byproduct of one key employee working 80-hour weeks to keep the lights on. That is a massive due diligence red flag that will immediately trigger a valuation discount during a transaction. Sophisticated acquirers do not pay premium multiples for unscalable heroics or tribal knowledge. They pay for documented, transferable systems that run independently of the original system architect. If your runbook coverage is below 80%, you are not running a resilient technology company; you are running a consultancy where the sole client is your own fragile infrastructure.

The Brutal Math of the Coordination Tax

The financial penalty for missing runbooks is staggering, yet completely invisible on a standard profit and loss statement. During a critical service outage, the absence of an executable runbook creates what site reliability engineers call a "coordination tax." Instead of immediately debugging the root cause, engineers toggle frantically between Slack threads, PagerDuty alerts, Jira tickets, and outdated Confluence pages trying to establish basic context. According to incident.io's 2026 State of Incident Response, this context-switching tax consumes a minimum of 15 minutes per incident.

When you map that systemic delay against the EMA/BigPanda 2024 IT Outage Cost Benchmark, which puts the blended average cost of enterprise downtime at a brutal $14,056 per minute, that initial quarter-hour of fumbling costs roughly $210,840 (15 minutes × $14,056) in lost revenue, SLA penalties, and reputational damage. It is a completely unforced error that directly sabotages unit economics.
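The arithmetic behind that figure is a single multiplication, and it is worth rerunning with your own inputs. The sketch below uses the two cited benchmarks as defaults. The annual incident count in the example is a hypothetical placeholder, and because $14,056 per minute is a blended enterprise average, a smaller company should substitute its own per-minute downtime cost.

```python
# Defaults taken from the benchmarks cited above; replace with your own figures.
COORDINATION_MINUTES = 15     # incident.io: minimum context-switching delay per incident
COST_PER_MINUTE_USD = 14_056  # EMA/BigPanda 2024 blended enterprise downtime cost


def coordination_tax(incidents: int,
                     minutes: int = COORDINATION_MINUTES,
                     cost_per_minute: int = COST_PER_MINUTE_USD) -> int:
    """Downtime cost attributable to the coordination delay alone, in USD."""
    return incidents * minutes * cost_per_minute


print(coordination_tax(1))   # 210840
print(coordination_tax(24))  # 5060160 (hypothetical: 24 undocumented P1/P2 incidents a year)
```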

Automating the Remediation Path

Organizations that transition from static, decaying wiki pages to automated, executable runbooks fundamentally change this financial math. PagerDuty's 2025 Platform Benchmarks demonstrate that automating routine remediation actions—like safely restarting specific services or flushing overloaded caches—reduces MTTR for those tasks by an estimated 40 percent. Instead of humans executing dangerous CLI commands under extreme stress, the monitoring alert automatically triggers diagnostics, assigns roles, and offers one-click remediation buttons directly within the primary communication channel. Gartner's 2026 MTTR Reduction Analysis validates this exact operational shift, confirming that integrating automated context retrieval and human-in-the-loop remediation consistently cuts resolution times by over 40%. The difference between a minor operational blip and a board-level crisis is almost entirely dependent on whether the responding engineer has immediate, frictionless access to an up-to-date, actionable runbook.
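The flow described here (alert in, automatic diagnostics, human-approved remediation) can be sketched as a small dispatcher. Everything below is hypothetical: the `Runbook` and `Response` types, the alert dictionary shape, and the simulated actions stand in for whatever your paging and chat tools actually provide. No real PagerDuty or Slack API is being called.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

Alert = Dict[str, object]


@dataclass
class Runbook:
    # Hypothetical executable runbook: diagnostics run automatically,
    # remediation runs only after a human approves it (human-in-the-loop).
    name: str
    diagnostics: List[Callable[[Alert], str]]
    remediation: Callable[[Alert], str]


@dataclass
class Response:
    alert: Alert
    runbook: Optional[Runbook]
    status: str
    findings: List[str] = field(default_factory=list)
    actions: List[str] = field(default_factory=list)


def handle_alert(alert: Alert, registry: Dict[str, Runbook]) -> Response:
    """Look up the runbook for an alert type and run its diagnostics."""
    runbook = registry.get(str(alert["type"]))
    if runbook is None:
        # No runbook: the undocumented path that falls back to people.
        return Response(alert, None, "escalate_no_runbook")
    findings = [check(alert) for check in runbook.diagnostics]
    return Response(alert, runbook, "awaiting_approval", findings)


def approve(response: Response, approver: str) -> Response:
    """The 'one-click' step: execute remediation once a responder approves."""
    if response.runbook is None or response.status != "awaiting_approval":
        raise RuntimeError("nothing to approve for this response")
    result = response.runbook.remediation(response.alert)
    response.actions.append(f"{result} (approved by {approver})")
    response.status = "resolved"
    return response


# Simulated cache-flush runbook; a real one would call your own tooling.
registry = {
    "cache_memory_high": Runbook(
        name="flush-cache",
        diagnostics=[lambda a: f"memory at {a['memory_pct']}%"],
        remediation=lambda a: f"flushed cache on {a['service']}",
    )
}

resp = handle_alert({"type": "cache_memory_high", "service": "sessions", "memory_pct": 97}, registry)
print(resp.status, resp.findings)  # awaiting_approval ['memory at 97%']
approve(resp, "oncall-a")
print(resp.status, resp.actions)   # resolved ['flushed cache on sessions (approved by oncall-a)']
```

Keeping remediation behind an explicit approval is the human-in-the-loop design choice. An alert with no registered runbook comes back as `escalate_no_runbook`, which is precisely the gap that runbook coverage measures.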

Bridging the Documentation Gap Before Exit

So, how do you fix a systemic runbook deficit before taking your company to market? Stop trying to document everything all at once and start prioritizing by frequency and business impact. Target a minimum runbook coverage of 80% for your most common system alerts within the next 90 days. Begin by auditing your incident management platform to identify the top ten alert types that routinely disrupt your engineering team. If your developers spend more than 20% of their sprint capacity handling undocumented operational toil, your EBITDA margin is bleeding out through pure inefficiency.
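The audit step can be approximated from an alert export. The sketch below ranks alert types that lack a runbook by total impact (summed impact minutes, which reflects both frequency and per-alert impact) and checks the 20% toil threshold. The input format, a list of `(alert_type, impact_minutes)` pairs, and the function names are assumptions; most incident platforms can export something equivalent.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def prioritize_alerts(alert_log: Iterable[Tuple[str, float]],
                      documented: Set[str],
                      top_n: int = 10) -> List[Tuple[str, int, float]]:
    """Rank undocumented alert types by summed impact, then by frequency."""
    counts: Dict[str, int] = defaultdict(int)
    impact: Dict[str, float] = defaultdict(float)
    for alert_type, impact_minutes in alert_log:
        counts[alert_type] += 1
        impact[alert_type] += impact_minutes
    undocumented = [t for t in counts if t not in documented]
    undocumented.sort(key=lambda t: (impact[t], counts[t]), reverse=True)
    return [(t, counts[t], impact[t]) for t in undocumented[:top_n]]


def toil_exceeds_budget(toil_hours: float, sprint_capacity_hours: float,
                        threshold: float = 0.20) -> bool:
    """True when undocumented toil consumes more than `threshold` of sprint capacity."""
    return toil_hours / sprint_capacity_hours > threshold


alert_log = [
    ("db_conn_pool_exhausted", 30), ("db_conn_pool_exhausted", 45),
    ("cache_memory_high", 10), ("cache_memory_high", 10), ("cache_memory_high", 10),
    ("cert_expiring", 5), ("disk_full", 60),
]
print(prioritize_alerts(alert_log, documented={"disk_full"}, top_n=2))
# [('db_conn_pool_exhausted', 2, 75.0), ('cache_memory_high', 3, 30.0)]
print(toil_exceeds_budget(toil_hours=18, sprint_capacity_hours=80))  # True
```

Ranking by summed impact rather than raw count keeps a noisy, low-impact alert from automatically outranking a rarer but costlier one.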

Addressing this specific category of operational debt yields massive returns. McKinsey's IT Resilience Research found that when organizations systematically modernize their IT architecture and embrace documented incident practices, they reduce average resolution time for high-severity incidents by almost 60 percent within six months. To achieve this maturity, you must integrate runbook creation into your standard "Definition of Done" for all deployments. No code ships without an automated remediation workflow.
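One way to enforce that rule is a pre-deploy check that fails the pipeline when any alert rule shipped with a change has no runbook with an automated remediation step. The sketch assumes alert rules and runbooks are available as plain Python data (in practice they might be parsed from your alerting and runbook repositories); the `automated_remediation` field and both function names are hypothetical.

```python
from typing import Dict, List, Set


def missing_runbooks(alert_rules: Set[str], runbooks: Dict[str, dict]) -> List[str]:
    """Alert rules with no runbook, or whose runbook lacks automated remediation."""
    missing = []
    for rule in sorted(alert_rules):
        runbook = runbooks.get(rule)
        if runbook is None or not runbook.get("automated_remediation"):
            missing.append(rule)
    return missing


def definition_of_done_gate(alert_rules: Set[str], runbooks: Dict[str, dict]) -> int:
    """Return a process exit code: 0 if every alert is covered, 1 otherwise."""
    missing = missing_runbooks(alert_rules, runbooks)
    for rule in missing:
        print(f"BLOCKED: alert '{rule}' has no runbook with automated remediation")
    return 1 if missing else 0


rules = {"cache_memory_high", "db_conn_pool_exhausted", "cert_expiring"}
runbooks = {
    "cache_memory_high": {"automated_remediation": "flush-cache"},
    "db_conn_pool_exhausted": {"automated_remediation": None},  # documented, not automated
}
print(missing_runbooks(rules, runbooks))  # ['cert_expiring', 'db_conn_pool_exhausted']
```

In a CI job, the gate would typically be wired up as `sys.exit(definition_of_done_gate(rules, runbooks))` so that a nonzero exit code blocks the deployment.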

You must regularly stress-test these documents, because incident response plans fail the exact moment their underlying infrastructure drifts. If a runbook hasn't been executed or reviewed in 90 days, it is a liability. Building a comprehensive runbook library is not a tedious documentation exercise; it is an enterprise value creation strategy. Buyers demand operational maturity, and nothing proves technical resilience faster than an audited 90% runbook coverage metric backed by automated workflows.
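The 90-day rule is easy to automate as a scheduled report. The sketch below flags any runbook whose most recent execution or review is more than 90 days old, or that has never been exercised at all. The `(last_executed, last_reviewed)` date pairs are an assumed data shape, not a particular tool's schema.

```python
from datetime import date, timedelta
from typing import Dict, List, Optional, Tuple

STALE_AFTER = timedelta(days=90)

# Hypothetical shape: runbook name -> (last executed, last reviewed); None = never.
RunbookHistory = Dict[str, Tuple[Optional[date], Optional[date]]]


def stale_runbooks(history: RunbookHistory, today: date) -> List[str]:
    """Names of runbooks not executed or reviewed within the last 90 days."""
    stale = []
    for name, (last_executed, last_reviewed) in sorted(history.items()):
        touches = [d for d in (last_executed, last_reviewed) if d is not None]
        if not touches or today - max(touches) > STALE_AFTER:
            stale.append(name)
    return stale


history: RunbookHistory = {
    "db-failover": (date(2025, 6, 1), None),                # executed 29 days ago
    "cache-flush": (None, date(2025, 3, 1)),                # reviewed 121 days ago
    "cert-rotate": (None, None),                            # never exercised
    "disk-cleanup": (date(2025, 1, 1), date(2025, 4, 15)),  # reviewed 76 days ago
}
print(stale_runbooks(history, today=date(2025, 6, 30)))  # ['cache-flush', 'cert-rotate']
```

A runbook that has never been run or reviewed is deliberately treated as stale rather than skipped, since it offers no evidence that it still matches the infrastructure.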