The 'Go-Live' Illusion: Why 85% of Data Projects Stall

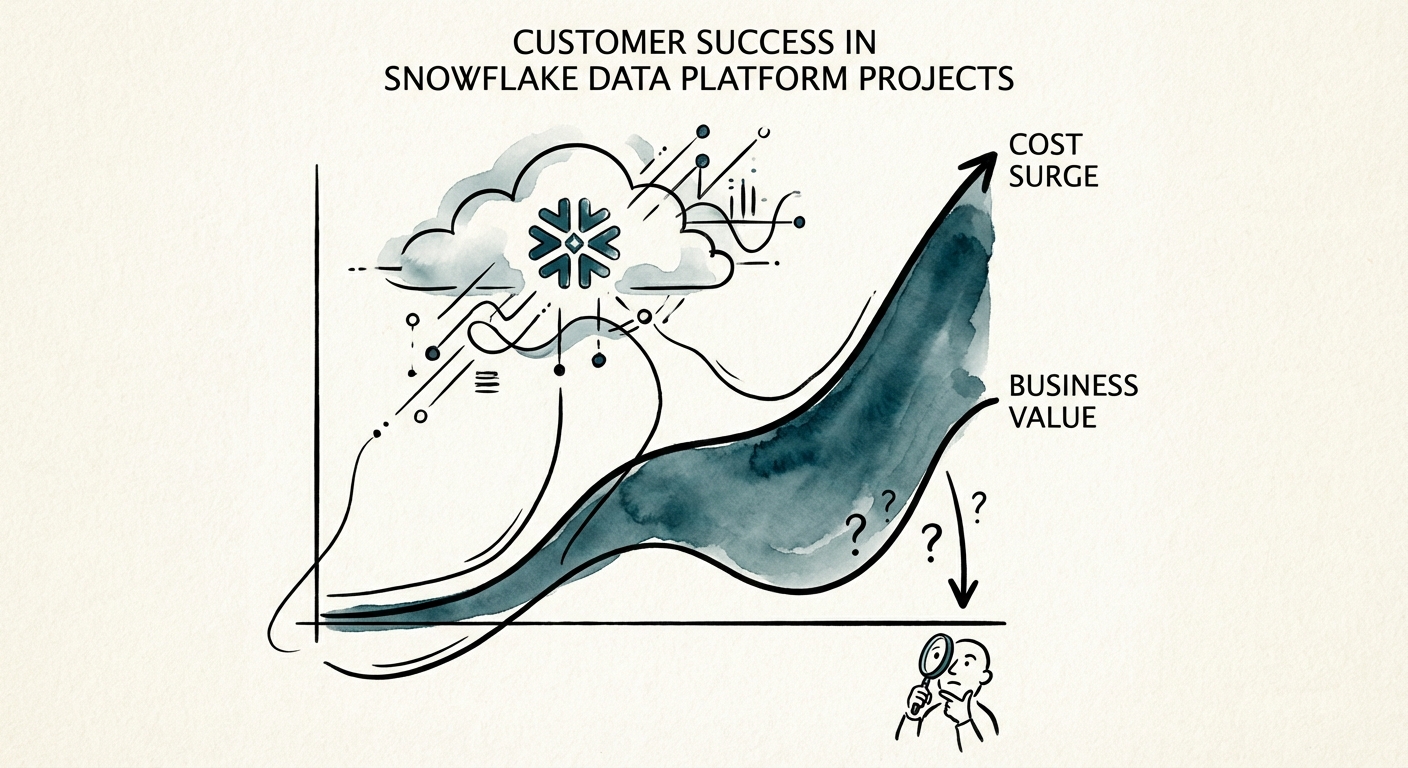

You signed the contract, migrated the data, and celebrated the "Go-Live." Your dashboard is green. But six months later, your CFO is asking why your Snowflake bill has quadrupled while your decision-making speed hasn't changed.

You are standing on the Consumption Cliff.

In 2025, the average enterprise Snowflake bill surged by 4x year-over-year, not because data volumes quadrupled, but because of inefficient consumption architectures. The industry secret is that 85% of big data projects fail to deliver their intended business value. They don't fail because the technology is broken; Snowflake is a Ferrari. They fail because you handed the keys to a driver who didn't know the track.

For scaling founders and executives, the pain is specific: you are paying for "Billware." Unlike on-premise "Shelfware" (software you bought but don't use), Billware is cloud infrastructure that you are using—furiously—but without extracting value. Queries run, credits burn, and invoices auto-pay, yet the business intelligence remains static.

The Visibility Gap

The root cause is rarely code; it is process. 80% of data management professionals admit they cannot accurately forecast their cloud costs. This isn't a budgeting error; it's a documentation failure. When implementation partners focus solely on "migration" (getting data from A to B) rather than "consumption" (getting value out of B), they build technical debt into the foundation of your platform.

The 3 Pillars of Consumption Failure

If you are currently evaluating partners or auditing a stalled project, check whether your process documentation addresses these three failure modes. If it does not, your project is not an asset; it is a liability.

1. The 'Select *' Tax (Lack of Governance)

In a consumption-based model, bad habits cost real money. We recently audited a Series C SaaS company where a single, poorly written daily reporting query was costing $30,000 annually. The query scanned entire tables on every run because no process documentation told the engineering team which filters let Snowflake prune micro-partitions or which clustering keys to define. The "Select *" mentality is a relic of fixed-cost servers; in Snowflake, it is a hemorrhage.
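
To see how one scheduled query can reach that kind of figure, here is a rough back-of-the-envelope sketch in Python. The credits-per-hour table reflects Snowflake's standard warehouse sizes; the $3.00 credit price, the Large warehouse, and both runtimes are assumptions chosen for illustration, not numbers from the audit. It also assumes the query is the only thing keeping the warehouse busy.

```python
# Credits consumed per hour by standard Snowflake warehouses, by size.
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16}


def annual_query_cost(size, runtime_minutes, runs_per_day, price_per_credit):
    """Estimate yearly spend for a scheduled query that occupies a warehouse."""
    credits_per_run = CREDITS_PER_HOUR[size] * (runtime_minutes / 60)
    return credits_per_run * runs_per_day * 365 * price_per_credit


# Hypothetical daily report: an unpruned scan vs. the same report once it
# selects only the columns it needs and filters on a well-clustered column.
# The $3.00 credit price is an assumption; substitute your contract rate.
before = annual_query_cost("L", runtime_minutes=180, runs_per_day=1, price_per_credit=3.00)
after = annual_query_cost("L", runtime_minutes=15, runs_per_day=1, price_per_credit=3.00)

print(f"before: ${before:,.0f}/yr, after: ${after:,.0f}/yr")
```

The point is not the exact numbers but the shape: runtime multiplies against warehouse size every single day, so an undocumented scan pattern compounds quietly.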

2. The Zombie Warehouse (Idle Compute)

Snowflake bills compute per second for as long as a virtual warehouse is running, with a 60-second minimum each time it resumes. Many implementations leave auto-suspend at 10 minutes or more to "optimize user experience." This is a trap. For 90% of workloads, a 60-second auto-suspend policy is sufficient. Depending on how bursty your queries are, the difference between a 10-minute and a 1-minute suspend time can reduce your compute bill by 30-50% immediately. If your partner didn't document this configuration decision, they are spending your margin.
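
A simplified model makes the idle-compute effect concrete. The sketch below assumes a single-cluster warehouse that suspends exactly `auto_suspend` seconds after its last query ends and is billed a 60-second minimum per resume. The workload (a 10-minute job every 30 minutes across an 8-hour day) is hypothetical, and `billed_seconds` is an illustrative helper, not a Snowflake API.

```python
def billed_seconds(queries, auto_suspend, min_billing=60):
    """Return the seconds a warehouse is billed for under a simplified model.

    queries: list of (start, end) times in seconds, sorted by start.
    auto_suspend: idle seconds before the warehouse suspends.
    min_billing: minimum seconds billed each time the warehouse resumes.
    """
    total = 0
    session_start = session_end = None
    for start, end in queries:
        if session_start is None:
            session_start, session_end = start, end
        elif start <= session_end + auto_suspend:
            # Warehouse is still running, so this query extends the session.
            session_end = max(session_end, end)
        else:
            # Warehouse suspended in between: bill the old session, start anew.
            total += max(session_end + auto_suspend - session_start, min_billing)
            session_start, session_end = start, end
    if session_start is not None:
        total += max(session_end + auto_suspend - session_start, min_billing)
    return total


# Hypothetical workload: a 10-minute job every 30 minutes for 8 hours.
workload = [(t, t + 600) for t in range(0, 8 * 3600, 1800)]

ten_minute = billed_seconds(workload, auto_suspend=600)
one_minute = billed_seconds(workload, auto_suspend=60)
savings = 1 - one_minute / ten_minute

print(f"10-min suspend: {ten_minute}s, 1-min suspend: {one_minute}s, saved: {savings:.0%}")
```

The saving depends entirely on the shape of the workload: a warehouse that is busy back to back gains almost nothing, while one that serves short, scattered queries gains far more. Running your own query history through a model like this is a reasonable way to document the decision.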

3. The Missing Business Map

The most fatal error is a lack of Value Mapping. Your documentation should link specific Snowflake Workloads to specific Business Outcomes. If you cannot point to a warehouse named "Marketing_Attribution_WH" and say, "This costs $4,000/month and generates $40,000 in ad spend optimization," you have failed. You simply have a "Big Data Project" that is burning cash.
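
A value map does not need tooling to get started; a small table kept under version control is enough. The sketch below uses the Marketing_Attribution_WH figures from above, plus a second, hypothetical warehouse (`Adhoc_Exploration_WH`) with no documented outcome. The `Workload` class and its field names are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    warehouse: str
    monthly_cost: float   # dollars billed to this warehouse per month
    monthly_value: float  # owner's documented estimate of business value

    @property
    def value_ratio(self):
        """Dollars of documented value per dollar of compute spend."""
        if self.monthly_cost == 0:
            return 0.0
        return self.monthly_value / self.monthly_cost


workloads = [
    Workload("Marketing_Attribution_WH", 4_000, 40_000),
    Workload("Adhoc_Exploration_WH", 2_500, 0),  # hypothetical, no outcome mapped
]

# Warehouses that burn credits without any documented business outcome.
unmapped = [w.warehouse for w in workloads if w.monthly_value == 0]

for w in workloads:
    print(f"{w.warehouse}: ${w.monthly_cost:,.0f}/mo, value ratio {w.value_ratio:.1f}x")
print("No documented outcome:", unmapped)
```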

The Fix: From 'Builder' to 'Architect'

Recovering from the Consumption Cliff requires a shift from heroics to systems. You do not need a smarter data engineer to write better SQL; you need a process that enforces efficiency by design.

The 'Consumption Architecture' Playbook

To stabilize your project and prepare for scale (or exit), implement these three documented processes immediately:

- The Tagging Taxonomy: Implement mandatory resource tagging. Every warehouse, pipe, and storage bucket must be tagged with a Cost Center, Project, and Owner. This turns your monthly bill from a black box into a P&L statement.

- The Quarterly Value Review (QVR): Stop reviewing activity (terabytes migrated) and start reviewing efficiency (cost per insight). If a dashboard costs $500/month to refresh but has 0 views, kill it.

- The 60-Second Rule: Codify aggressive auto-suspend policies in your Terraform configurations or dbt hooks instead of setting them by hand. Make "always-on" the exception, not the rule.
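
The three processes above can each be reduced to a check that runs on a schedule. What follows is a minimal sketch, not a finished FinOps tool: the tag names, the resource and dashboard dictionaries, and the helper functions are all hypothetical. The only Snowflake-specific piece is the `ALTER WAREHOUSE ... SET AUTO_SUSPEND` statement, which the code builds as text rather than executes.

```python
REQUIRED_TAGS = {"cost_center", "project", "owner"}


def missing_tags(resource_tags):
    """Return the required tag names a resource lacks, sorted alphabetically."""
    present = {name.lower() for name in resource_tags}
    return sorted(REQUIRED_TAGS - present)


def dashboards_to_retire(dashboards):
    """Return names of dashboards that cost money to refresh but have no views."""
    return [d["name"] for d in dashboards if d["views"] == 0 and d["monthly_cost"] > 0]


def auto_suspend_sql(warehouse, seconds=60):
    """Build the statement that sets a warehouse's auto-suspend, in seconds.

    The warehouse name is interpolated as-is, so only pass trusted identifiers.
    """
    return f"ALTER WAREHOUSE {warehouse} SET AUTO_SUSPEND = {seconds};"


# Hypothetical inventory, for illustration only.
warehouse_tags = {"Marketing_Attribution_WH": {"Cost_Center": "MKT-01", "Owner": "jane"}}
dashboards = [
    {"name": "weekly_pipeline", "monthly_cost": 500, "views": 0},
    {"name": "exec_kpis", "monthly_cost": 300, "views": 120},
]

gaps = {name: missing_tags(tags) for name, tags in warehouse_tags.items()}
retire = dashboards_to_retire(dashboards)
statement = auto_suspend_sql("Marketing_Attribution_WH")

print(gaps)
print(retire)
print(statement)
```

Keeping these checks in the same repository as your infrastructure configuration means the policy is reviewed like any other change, rather than living in someone's head.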

When searching for a partner, ask them one question: "Show me your process for managing consumption drift." If they talk only about Python scripts or ETL tools, run. You want a partner who talks about Unit Economics, FinOps, and Value Realization.

Your data platform should be your greatest competitive advantage, not your largest variable cost. It’s time to stop paying for potential and start documenting profit.