AI Agent vs. Workflow Automation: Decision Guide

A decision guide for choosing an AI agent, internal copilot, or workflow automation for a business process.

It is written for operations, IT, support, sales, finance, and business leaders who are deciding how much autonomy an AI workflow should have.

Use this when a team is tempted to call every AI workflow an agent.

Workflow automation

Choose it when the process has clear rules, repeated steps, predictable inputs, and defined routing or approval paths.

The main risk is automating a broken process, or hiding exceptions instead of escalating them.

It requires a mapped workflow, automation rules, integrations, SOPs, and monitoring.

Internal copilot

Choose it when a person needs help researching, drafting, summarizing, classifying, or retrieving information before making a decision.

The main risk is users treating suggestions as final output without review.

It requires an assistant experience, source grounding, review standards, training, and quality sampling.

AI agent

Choose it when the task requires multiple steps, tool use, and limited action-taking inside a bounded workflow with clear human approval rules.

The main risks are unsupervised actions, unclear permissions, weak logging, and high-impact decisions made without review.

It requires agent role design, tool permissions, an evaluation harness, logging, escalation, and monitoring.
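
The three options above can be read as a rough decision rule. The sketch below is one possible encoding, not a definitive rubric: the `WorkflowProfile` fields and the `recommend` function are hypothetical names introduced for illustration, and the ordering of the checks is an assumption (it falls back to a copilot whenever the conditions for automation or an agent are not met).

```python
from dataclasses import dataclass


@dataclass
class WorkflowProfile:
    """Hypothetical traits of a workflow, paraphrased from the three options."""
    has_clear_rules: bool            # rules, routing, and approval paths are defined
    has_predictable_inputs: bool     # inputs arrive in a known, repeatable shape
    needs_multi_step_tool_use: bool  # task spans several steps and tools
    has_approval_rules: bool         # clear human approval rules exist for actions


def recommend(profile: WorkflowProfile) -> str:
    """Return 'workflow automation', 'ai agent', or 'internal copilot'."""
    # Rule-driven, predictable work does not need a model making choices.
    if profile.has_clear_rules and profile.has_predictable_inputs:
        return "workflow automation"
    # Agents only qualify when approval rules already exist (assumed ordering).
    if profile.needs_multi_step_tool_use and profile.has_approval_rules:
        return "ai agent"
    # Otherwise keep a person in the decision and let AI assist.
    return "internal copilot"


if __name__ == "__main__":
    invoice_routing = WorkflowProfile(True, True, False, False)
    print(recommend(invoice_routing))
```

A multi-step task without approval rules falls through to the copilot branch, which matches the guide's point that agent actions need clear human approval rules first.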

How to make the call

- Step 1: Start with the workflow. Describe the current workflow before choosing automation, copilot, or agent.
- Step 2: Name the decision points. Separate low-risk routing or drafting from decisions that require human judgment.
- Step 3: Set permissions. Decide whether AI can read, draft, suggest, route, or write into systems.
- Step 4: Create test cases. Use expected examples and edge cases before letting AI operate in production.
- Step 5: Monitor after launch. Review quality, incidents, cost, user adoption, and exceptions before expanding autonomy.
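
Step 4 can be made concrete with a small pre-launch gate. The sketch below is illustrative only: `route_ticket` is a toy stand-in for whatever AI step is being evaluated, the test cases are invented examples, and the 0.95 threshold is an assumed value that a team would set for itself.

```python
from typing import Callable, List, Tuple

TestCase = Tuple[str, str]  # (input text, expected label)


def pass_rate(classify: Callable[[str], str], cases: List[TestCase]) -> float:
    """Fraction of cases where the classifier returns the expected label."""
    if not cases:
        return 0.0  # no evidence counts as not passing
    passed = sum(1 for text, expected in cases if classify(text) == expected)
    return passed / len(cases)


def ready_for_production(
    classify: Callable[[str], str],
    expected_cases: List[TestCase],
    edge_cases: List[TestCase],
    threshold: float = 0.95,  # assumed bar, not taken from the guide
) -> bool:
    """Require both expected examples and edge cases to clear the threshold."""
    return (
        pass_rate(classify, expected_cases) >= threshold
        and pass_rate(classify, edge_cases) >= threshold
    )


def route_ticket(text: str) -> str:
    """Toy stand-in for the AI step under test (hypothetical)."""
    return "billing" if "refund" in text.lower() else "general"


EXPECTED = [("I need a refund", "billing"), ("How do I reset my password?", "general")]
EDGE = [("REFUND!!!", "billing"), ("", "general"), ("Please return my money", "billing")]

if __name__ == "__main__":
    print(ready_for_production(route_ticket, EXPECTED, EDGE))
```

Here the toy router handles the expected examples but misses the paraphrased edge case, so the gate stays closed until that case is handled or escalated.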

Calling everything an agent creates avoidable risk.

The practical question is how much autonomy the workflow needs. Most businesses should earn autonomy in stages: retrieve, draft, recommend, route, then act only when permissions and review are ready.
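
The staged approach can be sketched as an ordered ladder. This is a minimal illustration under stated assumptions: the `Autonomy` enum and `next_stage` function are hypothetical names, and the sketch gates only the final step on permissions and review, as the paragraph above describes; a team could reasonably gate earlier steps as well.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Stages from the guide, ordered from least to most autonomous."""
    RETRIEVE = 1
    DRAFT = 2
    RECOMMEND = 3
    ROUTE = 4
    ACT = 5


def next_stage(current: Autonomy, permissions_ready: bool, review_ready: bool) -> Autonomy:
    """Advance one stage at a time; ACT requires permissions and review."""
    if current is Autonomy.ACT:
        return current  # already at the top of the ladder
    candidate = Autonomy(current + 1)
    if candidate is Autonomy.ACT and not (permissions_ready and review_ready):
        return current  # stay at ROUTE until both conditions hold
    return candidate


if __name__ == "__main__":
    stage = Autonomy.RETRIEVE
    stage = next_stage(stage, permissions_ready=False, review_ready=False)
    print(stage.name)
```

Moving one stage per call keeps the sketch from skipping levels, which mirrors the idea of earning autonomy rather than granting it all at once.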

Frequently asked

- Is every AI workflow an agent?

- No. Many valuable AI workflows are copilots or automations with human review, not autonomous agents.

- When should an agent take action?

- Only when the action is bounded, logged, reversible where possible, and reviewed based on risk.

- What is the safer first build?

- A reviewable copilot or workflow automation is usually safer before adding agent actions.
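
The conditions in the second answer (bounded, logged, reversible where possible, reviewed based on risk) can be sketched as a gate in front of every agent action. Everything named here is hypothetical: the `ProposedAction` fields, the two-level risk scale, the `ALLOWED_ACTIONS` allowlist, and the in-memory `audit_log` are assumptions for illustration, not a prescribed design.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ProposedAction:
    name: str
    risk: str         # "low" or "high"; assumed two-level scale
    reversible: bool  # can the action be undone after the fact?


# Bounded: the agent may only request actions on this (hypothetical) allowlist.
ALLOWED_ACTIONS = {"update_ticket_status", "send_draft_for_review", "issue_refund"}

# Logged: every decision is recorded, including rejections.
audit_log: List[str] = []


def decide(action: ProposedAction) -> str:
    """Return 'execute', 'needs_human_review', or 'reject' and log the outcome."""
    if action.name not in ALLOWED_ACTIONS:
        outcome = "reject"
    elif action.risk == "high" or not action.reversible:
        # Reviewed based on risk: high-impact or irreversible actions go to a person.
        outcome = "needs_human_review"
    else:
        outcome = "execute"
    audit_log.append(f"{action.name}: {outcome}")
    return outcome


if __name__ == "__main__":
    print(decide(ProposedAction("update_ticket_status", "low", True)))
    print(decide(ProposedAction("issue_refund", "high", False)))
    print(audit_log)
```

Routing irreversible actions to review even when they are labeled low risk is a conservative choice in this sketch; a team with strong rollback procedures might relax it.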

Articles that support the decision

BRIEF · PROCESS DOCUMENTATION

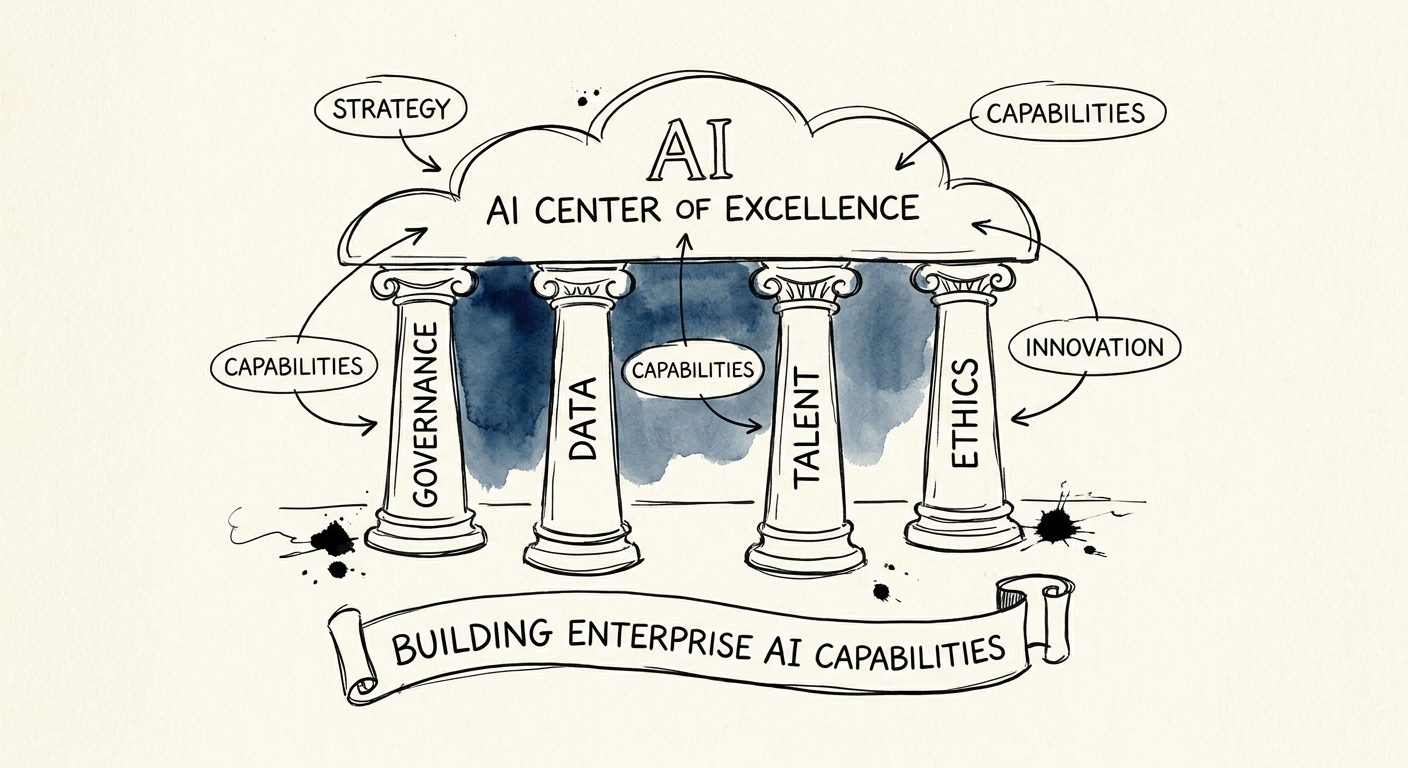

The AI Center of Excellence: Why Your Enterprise AI Needs Process Documentation, Not Just Engineers

Discover why building an AI Center of Excellence is a process documentation challenge, not just a technical one, and how it protects your valuation in M&A.

70% Budget burned in pilot phase without a CoE

BRIEF · TECHNICAL DEBT

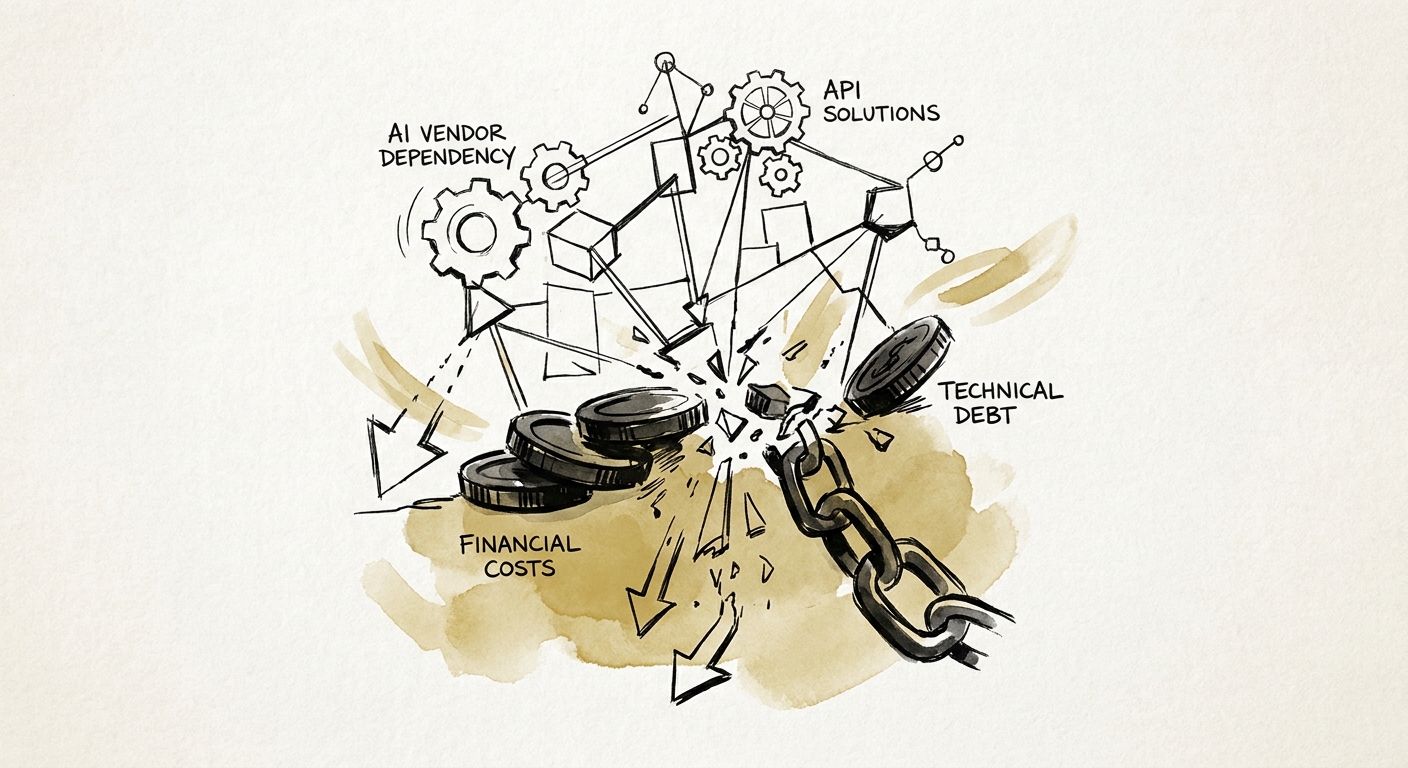

The AI Wrapper Trap: Why Vendor Dependency is Killing Your Deal Multiple

Private equity firms are overpaying for SaaS companies built on brittle AI APIs. Learn how to evaluate AI vendor dependency, model drift, and COGS risk in M&A.

349% Increase in AI Infrastructure COGS

BRIEF · TECHNICAL DEBT

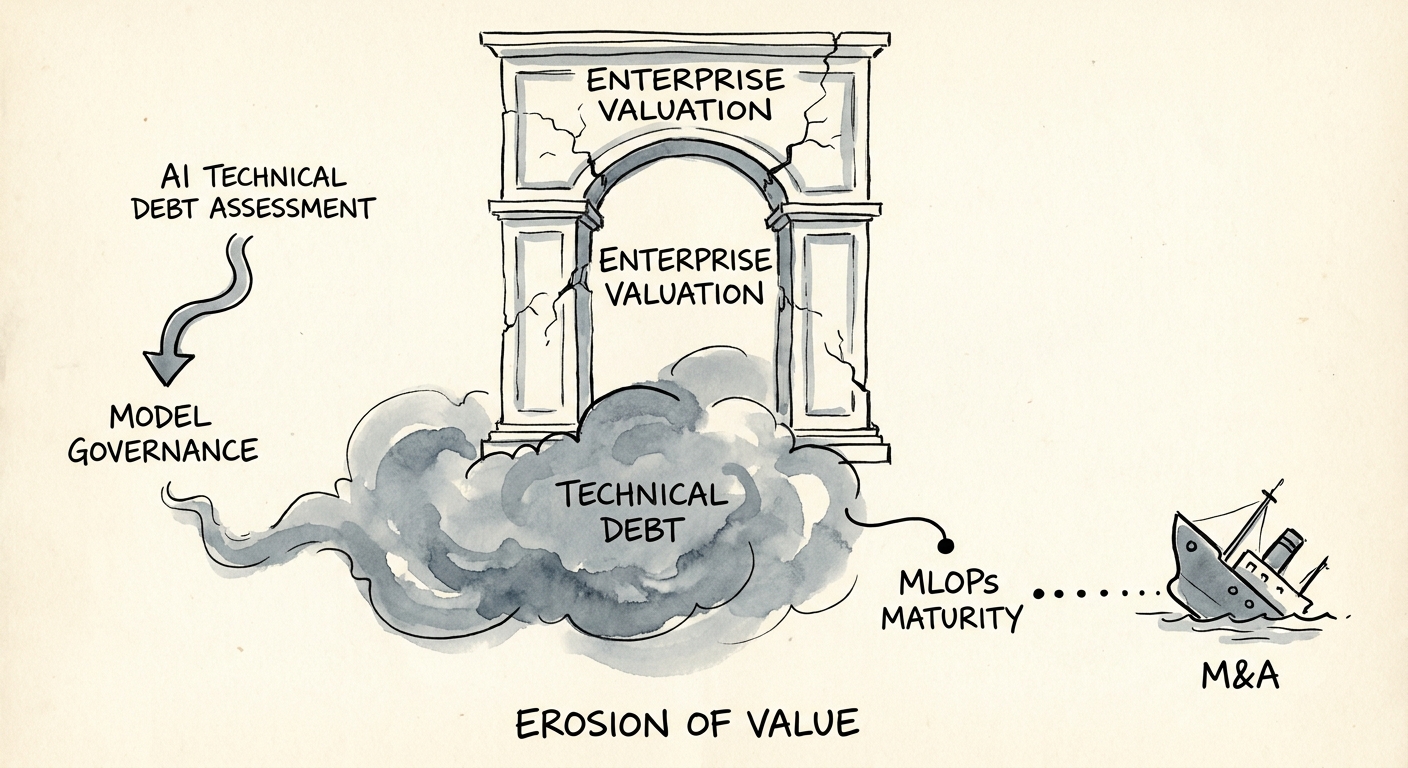

AI Technical Debt Assessment: Why Ungoverned Models Kill Deal Value

Discover why ungoverned AI models introduce massive technical debt. Learn how to assess MLOps maturity, model drift, and governance during M&A due diligence.

400% Maintenance vs. Development Cost Ratio for Ungoverned AI

BRIEF · TECHNICAL DEBT

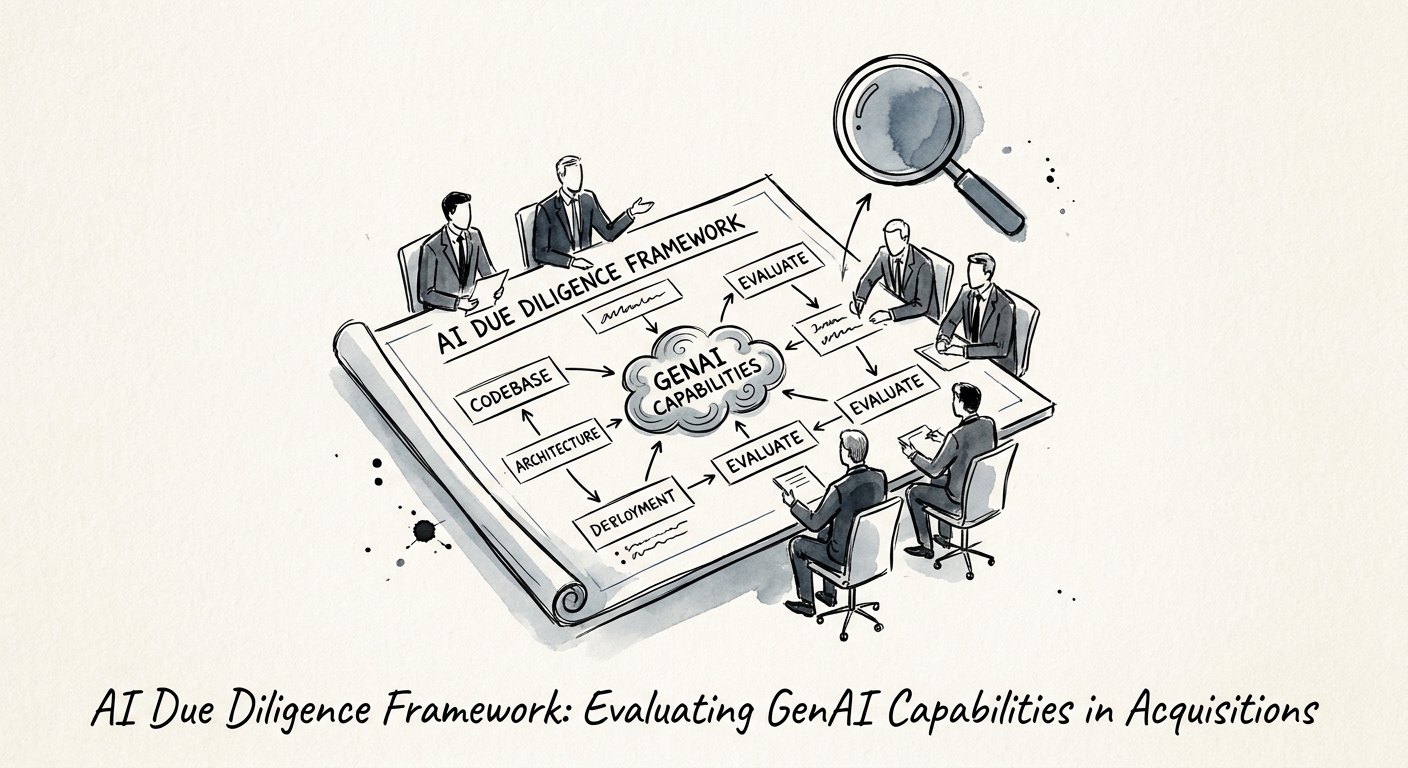

AI Due Diligence Framework: Evaluating GenAI Capabilities in Acquisitions

A 2026 diagnostic framework for private equity operating partners to evaluate GenAI capabilities, identify shadow AI risks, and quantify technical debt in tech M&A.

95% GenAI Pilot Failure Rate

BRIEF · TECHNICAL DEBT

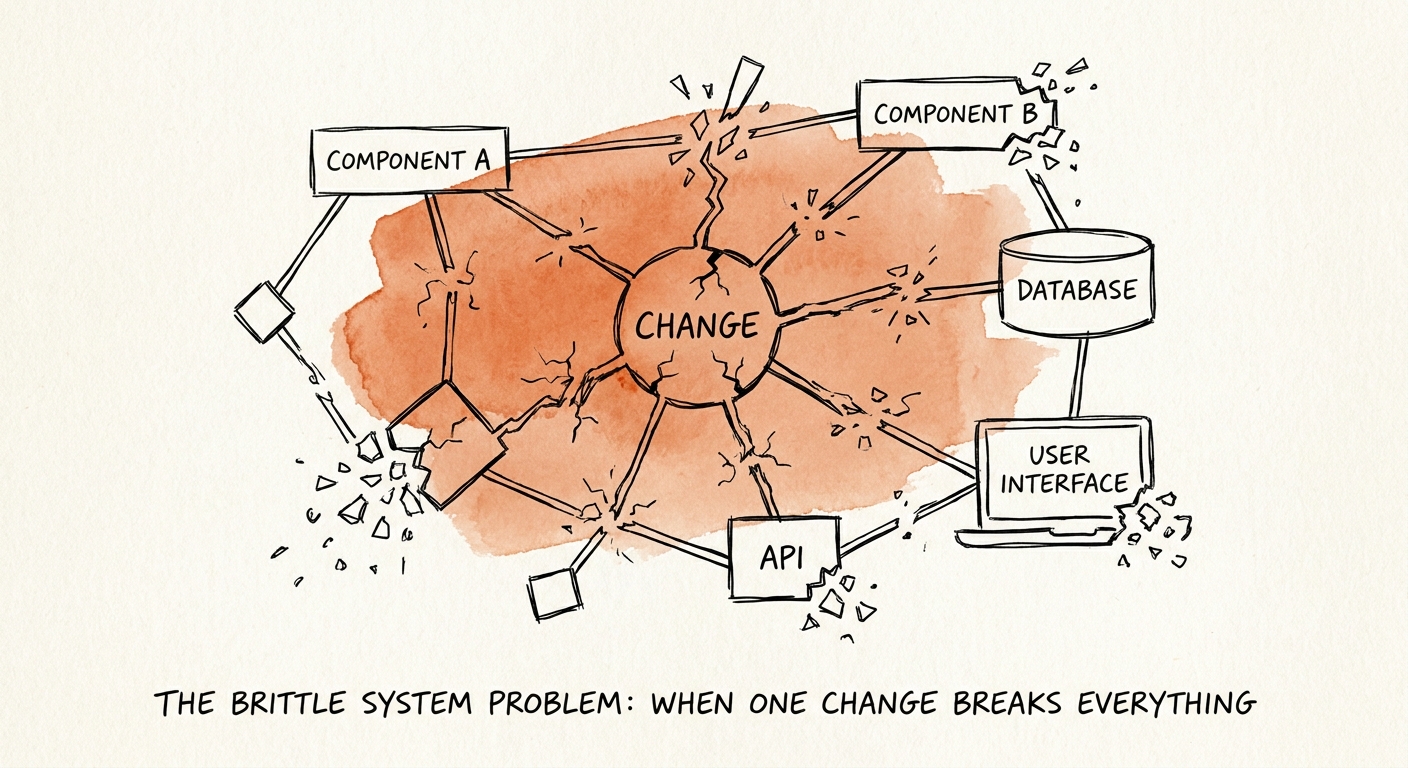

The Brittle System Problem: When One Change Breaks Everything

Discover why brittle software systems and tightly coupled architectures trigger 22% M&A valuation discounts and how PE operators can decouple legacy code.

22% M&A Valuation Discount Applied to Brittle Architectures

BRIEF · TECHNICAL DEBT

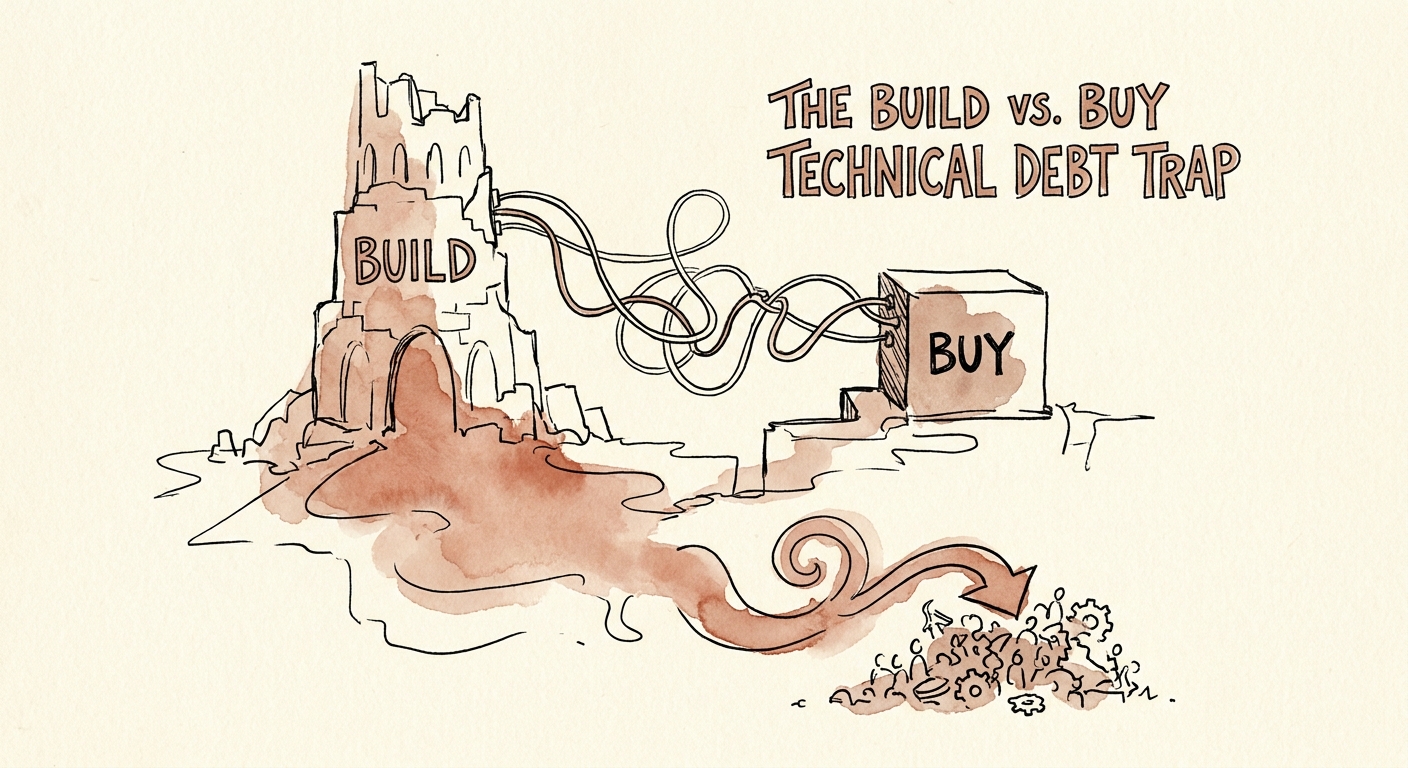

The Build vs. Buy Technical Debt Trap: When Custom Development Becomes a Burden

When custom development becomes a burden. Learn how the build vs. buy technical debt trap bleeds engineering capacity and destroys M&A valuations.

34% Engineering Capacity Lost to Custom Tool Maintenance