Private equity sponsors are currently paying 12x revenue multiples for "proprietary AI capabilities" that are actually just fragile API wrappers, destined to vaporize 40% of their gross margin when the underlying vendor adjusts its pricing. The software industry has spent the last decade optimizing for zero marginal costs, building high-margin empires on predictable AWS and Azure infrastructure. But the generative AI era has violently reintroduced variable cost of goods sold (COGS) into the SaaS P&L. Yet during M&A due diligence, buyers still evaluate these targets as if LLM token consumption were a predictable rounding error rather than a fundamental, existential threat to unit economics.

In our last engagement leading technical due diligence for a $250M SaaS carve-out, I had to explain to the investment committee that the target's heavily marketed "flagship AI copilot" was nothing more than a brittle prompt chain tied exclusively to a single third-party LLM endpoint. We modeled their projected user adoption against the raw compute required for inference, and the financial results were disastrous. The vaunted 82% software gross margin collapsed to 47% within four quarters of scaled rollout. This is not an isolated incident; it is the new standard of technical debt. Bain's 2026 analysis of SaaS unit economics under AI adoption revealed that while AI initiatives drove a 38% revenue increase for one marketing tech firm, the variable costs of AI infrastructure and hosting skyrocketed by an astonishing 349%. You simply cannot scale a profitable business when your AI infrastructure costs grow roughly nine times faster than your revenue.
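The arithmetic behind that kind of margin collapse is easy to reproduce. The sketch below is not the model from the engagement; every input (seat price, base COGS, token price, usage per seat) is a hypothetical number chosen only so that the output matches the 82% to 47% shape described above.

```python
def gross_margin(revenue: float, base_cogs: float, inference_cogs: float = 0.0) -> float:
    """Return gross margin as a fraction of revenue."""
    return (revenue - base_cogs - inference_cogs) / revenue


def inference_cost(tokens: int, price_per_1k_tokens: float) -> float:
    """Cost of serving `tokens` tokens at a flat per-1k-token price."""
    return tokens / 1000 * price_per_1k_tokens


# All inputs are hypothetical, picked to reproduce the 82% -> 47% shape.
REVENUE_PER_SEAT = 100.0      # monthly revenue per seat (USD)
BASE_COGS_PER_SEAT = 18.0     # hosting and support, i.e. 82% margin before AI
PRICE_PER_1K_TOKENS = 0.01    # assumed blended vendor price (USD)
TOKENS_PER_SEAT = 3_500_000   # assumed monthly copilot usage at scaled rollout

margin_before = gross_margin(REVENUE_PER_SEAT, BASE_COGS_PER_SEAT)
ai_cogs = inference_cost(TOKENS_PER_SEAT, PRICE_PER_1K_TOKENS)
margin_after = gross_margin(REVENUE_PER_SEAT, BASE_COGS_PER_SEAT, ai_cogs)

print(f"before AI: {margin_before:.0%}, after AI: {margin_after:.0%}")
```

With these assumed inputs, inference adds $35 of COGS per $100 seat. The useful diligence exercise is to rerun the same function with the target's real usage telemetry and a vendor price increase applied.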

The core issue is that founders are prioritizing speed-to-market over architectural resilience. They build core product features directly on top of vendor APIs without any abstraction layer, effectively handing total pricing power and margin control over to their foundational model provider. Gartner's 2026 forecast on Generative AI costs projects that through 2028, the aggregated costs of model inference will account for at least 70% of a generative AI model's total lifetime cost. If you do not own the inference layer, or at least have the architectural flexibility to aggressively negotiate and route traffic between competing open-source and proprietary providers, you do not own your intellectual property. You are simply reselling another company's compute at a severe structural disadvantage.
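What "an abstraction layer" means in practice is that product code never imports a vendor SDK directly. Here is a minimal sketch, assuming a hypothetical `CompletionProvider` interface and two stub adapters; the class names and the `complete` method are illustrative, not any vendor's actual API.

```python
from typing import Protocol


class CompletionProvider(Protocol):
    """Hypothetical interface: the only surface product code may touch."""

    name: str

    def complete(self, prompt: str) -> str: ...


class StubVendorA:
    name = "vendor-a"

    def complete(self, prompt: str) -> str:
        # A real adapter would call the vendor's SDK here.
        return f"[vendor-a] {prompt}"


class StubVendorB:
    name = "vendor-b"

    def complete(self, prompt: str) -> str:
        # A second adapter with the same shape; swapping it in needs no product changes.
        return f"[vendor-b] {prompt}"


def summarize_contract(provider: CompletionProvider, text: str) -> str:
    """Product feature written against the interface, not against a vendor."""
    return provider.complete(f"Summarize: {text}")


print(summarize_contract(StubVendorA(), "MSA v3"))
print(summarize_contract(StubVendorB(), "MSA v3"))
```

If swapping `StubVendorA` for `StubVendorB` requires touching `summarize_contract`, the target does not have an abstraction layer, whatever the architecture diagram says.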

The Silent Killers: Model Drift and Vendor Deprecation

Financial exposure to inference costs is only the first dimension of AI vendor dependency. The operational reality of these API wrappers is far more volatile. When a SaaS company hardcodes its enterprise application to a specific model version from OpenAI or Anthropic, it inadvertently inherits that vendor's product roadmap, deprecation schedule, and hidden algorithmic changes. We call the resulting unannounced shift in output behavior "model drift," and it is the silent killer of enterprise AI features. A complex prompt chain that returns perfectly formatted JSON in March can suddenly hallucinate or refuse to answer entirely in April, simply because the vendor quietly adjusted the model's safety guardrails in the background.
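The March-versus-April failure above is cheap to detect if every model response is checked against an output contract before it reaches the product. The following is a minimal sketch, assuming a hypothetical invoice-extraction feature; the field names and both sample outputs are invented for illustration.

```python
import json

# Hypothetical output contract for an invoice-extraction prompt.
REQUIRED_FIELDS = {"invoice_id": str, "total": float, "currency": str}


def check_output(raw: str) -> list[str]:
    """Return a list of contract violations; an empty list means the output passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["not valid JSON"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            problems.append(f"missing field: {field}")
        elif not isinstance(data[field], expected_type):
            problems.append(f"wrong type for {field}")
    return problems


# Invented examples of the same prompt before and after a silent vendor update.
march_output = '{"invoice_id": "INV-7", "total": 1250.0, "currency": "USD"}'
april_output = "I'm sorry, but I can't help with that request."

print(check_output(march_output))
print(check_output(april_output))
```

Run against a fixed set of golden prompts on a schedule, a check like this turns drift from a customer complaint into a failing test.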

Building production-grade software on top of shifting sand requires heavy orchestration, but most mid-market engineering teams lack the discipline to build proper LLMOps pipelines. MIT Sloan Management Review's study on AI pilot scaling found that up to 85% of AI projects never move beyond the pilot phase, largely because single-model dependencies inevitably fall victim to this model drift, unannounced API deprecations, and a total lack of reliable testing infrastructure. You cannot build a deterministic business process on top of a non-deterministic black box without implementing an aggressive, heavily monitored governance architecture.

Despite the market hype surrounding autonomous agents, the tangible business value generated by these tightly coupled API wrappers remains shockingly low across the enterprise. PwC's 2026 Global CEO Survey on AI outcomes revealed that 56% of chief executives report their AI initiatives have produced neither increased revenue nor decreased costs. The reason is blindingly simple: when an application breaks every time the underlying foundational model receives a backend update, customer success teams end up spending more time apologizing for hallucinated data than users spend gaining actual value from the tool. This is a severe, modern form of technical liability that directly impacts net revenue retention, and the technology due diligence red flags that kill deals frequently center on this exact architectural vulnerability.

Re-Architecting Due Diligence for the GenAI Era

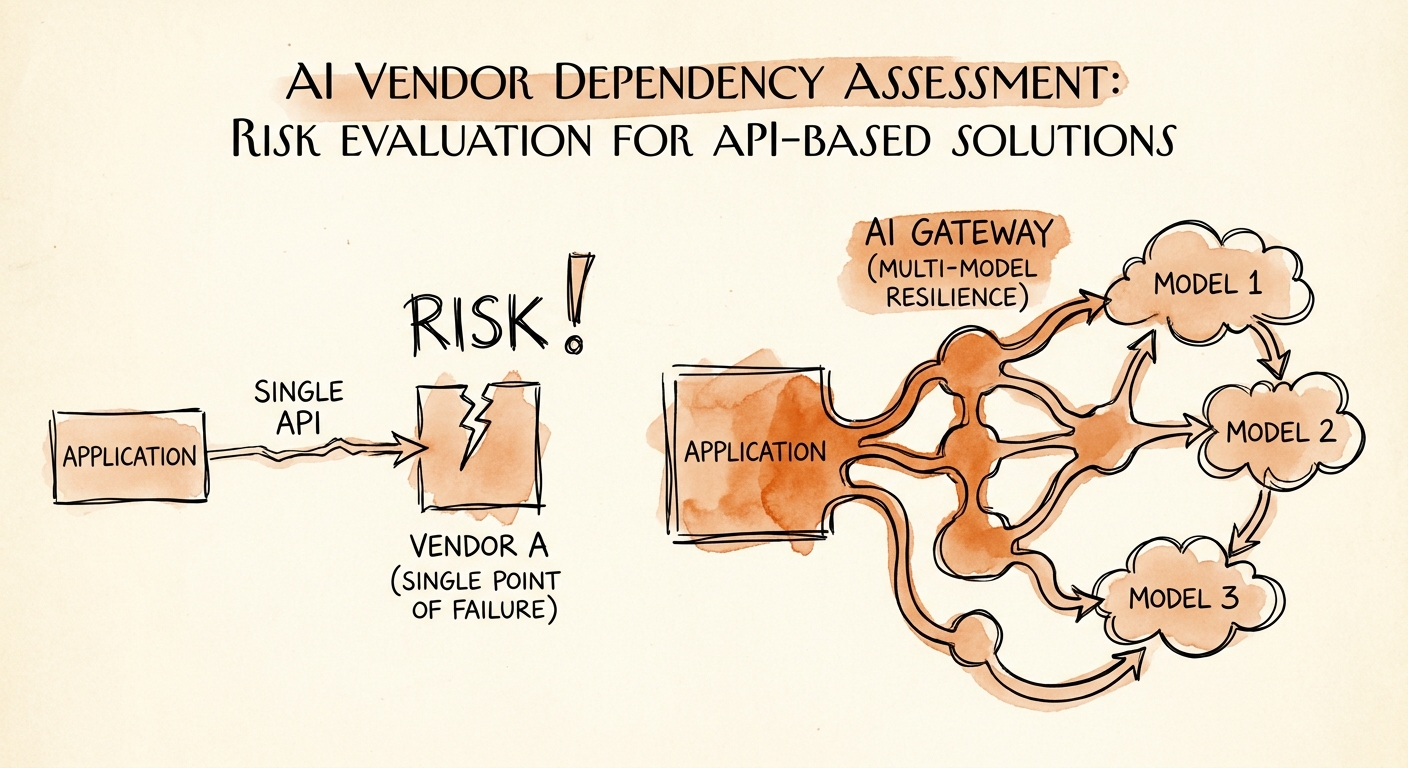

Evaluating an AI-enabled acquisition target requires a total overhaul of the traditional software due diligence playbook. You can no longer just run a static code analysis, review open-source licenses, ensure SOC 2 compliance, and call it a day. Today, you must ruthlessly interrogate the architecture's portability. If an application cannot seamlessly swap its foundational LLM provider within 48 hours without rewriting the core application logic, the company is fundamentally uninvestable at a premium multiple. This lack of agility is a massive blind spot for private equity buyers. McKinsey's recent evaluation of M&A due diligence performance found that diligence was deemed completely inadequate in more than 40% of transactions, a failure rate that is poised to climb as API-dependent targets flood the M&A market.

To protect your exit valuation and preserve EBITDA, portfolio operating partners must mandate what we call an "AI Gateway" architecture. This crucial abstraction layer routes requests dynamically between multiple models (OpenAI, Anthropic, Gemini, or self-hosted open-source models) based on the specific cost, latency, and compliance requirements of the task at hand. Need to parse internal legal documents securely? The gateway routes them to a self-hosted Llama 3 instance for pennies. Need complex, multi-step logical reasoning? It routes to the premium commercial API. This intelligent routing is the only reliable way to decouple your product's unit economics from your vendor's margin requirements.
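The routing decision at the heart of such a gateway can be sketched in a few lines. This is an illustration under stated assumptions, not a reference implementation: the catalog entries, prices, latencies, and the `reasoning_tier` rating are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRoute:
    name: str
    cost_per_1k_tokens: float  # USD, assumed
    p95_latency_ms: int        # assumed
    self_hosted: bool          # True if data stays on our own infrastructure
    reasoning_tier: int        # 1 = basic, 3 = strongest (our own rating)


# Hypothetical catalog; names and numbers are placeholders.
CATALOG = [
    ModelRoute("self-hosted-open-model", 0.0004, 900, True, 1),
    ModelRoute("commercial-mid", 0.003, 600, False, 2),
    ModelRoute("commercial-premium", 0.03, 1500, False, 3),
]


def route(*, sensitive: bool, min_reasoning: int, max_latency_ms: int) -> ModelRoute:
    """Pick the cheapest model that satisfies compliance, quality, and latency."""
    candidates = [
        m for m in CATALOG
        if (m.self_hosted or not sensitive)
        and m.reasoning_tier >= min_reasoning
        and m.p95_latency_ms <= max_latency_ms
    ]
    if not candidates:
        raise LookupError("no model satisfies the request constraints")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)


# Sensitive legal document: must stay on self-hosted infrastructure.
print(route(sensitive=True, min_reasoning=1, max_latency_ms=2000).name)
# Multi-step reasoning task: needs the strongest tier.
print(route(sensitive=False, min_reasoning=3, max_latency_ms=2000).name)
```

The design choice that matters is that cost is the tiebreaker, not the filter: compliance and quality constraints are applied first, and only then does the gateway pick the cheapest survivor.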

Before signing a letter of intent in 2026, sponsors must demand a comprehensive map of all external AI dependencies from the target's CTO. Require the management team to demonstrate, live, their prompt version control systems, their automatic fallback mechanisms for API rate limits, and their automated unit tests designed specifically for catching model drift. If they look at you blankly or hand you a slide deck about "our deep OpenAI partnership," you are about to acquire an expensive proof-of-concept disguised as a mature software product. Stop valuing third-party vendor dependencies as proprietary intellectual property, or you will be left holding the bag when the COGS reality finally sets in. Use our technology due diligence checklist for software acquisitions to force these uncomfortable conversations early, and ensure your investment is built on sustainable engineering architecture rather than rented compute.
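For reference, an automatic fallback for rate limits does not need to be elaborate to be demonstrable. Below is a minimal sketch, assuming a hypothetical `RateLimited` exception that each vendor adapter raises on a rate-limit response; the two provider functions are simulated stand-ins, not real vendor calls.

```python
from typing import Callable, Sequence


class RateLimited(Exception):
    """Hypothetical error an adapter raises when a vendor rate-limits a request."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, completion) from the first success."""
    failures = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except RateLimited as exc:
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all providers rate limited: " + "; ".join(failures))


def primary(prompt: str) -> str:
    # Simulated rate-limit response from the primary vendor.
    raise RateLimited("429 from primary vendor")


def secondary(prompt: str) -> str:
    # Simulated healthy secondary provider.
    return f"fallback answer to: {prompt}"


used, answer = complete_with_fallback(
    "Classify this ticket",
    [("primary", primary), ("secondary", secondary)],
)
print(used, "->", answer)
```

A management team with a real fallback path should be able to show the equivalent of this in their own codebase, along with logs proving it has fired in production.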