The Illusion of the Turnkey Algorithm

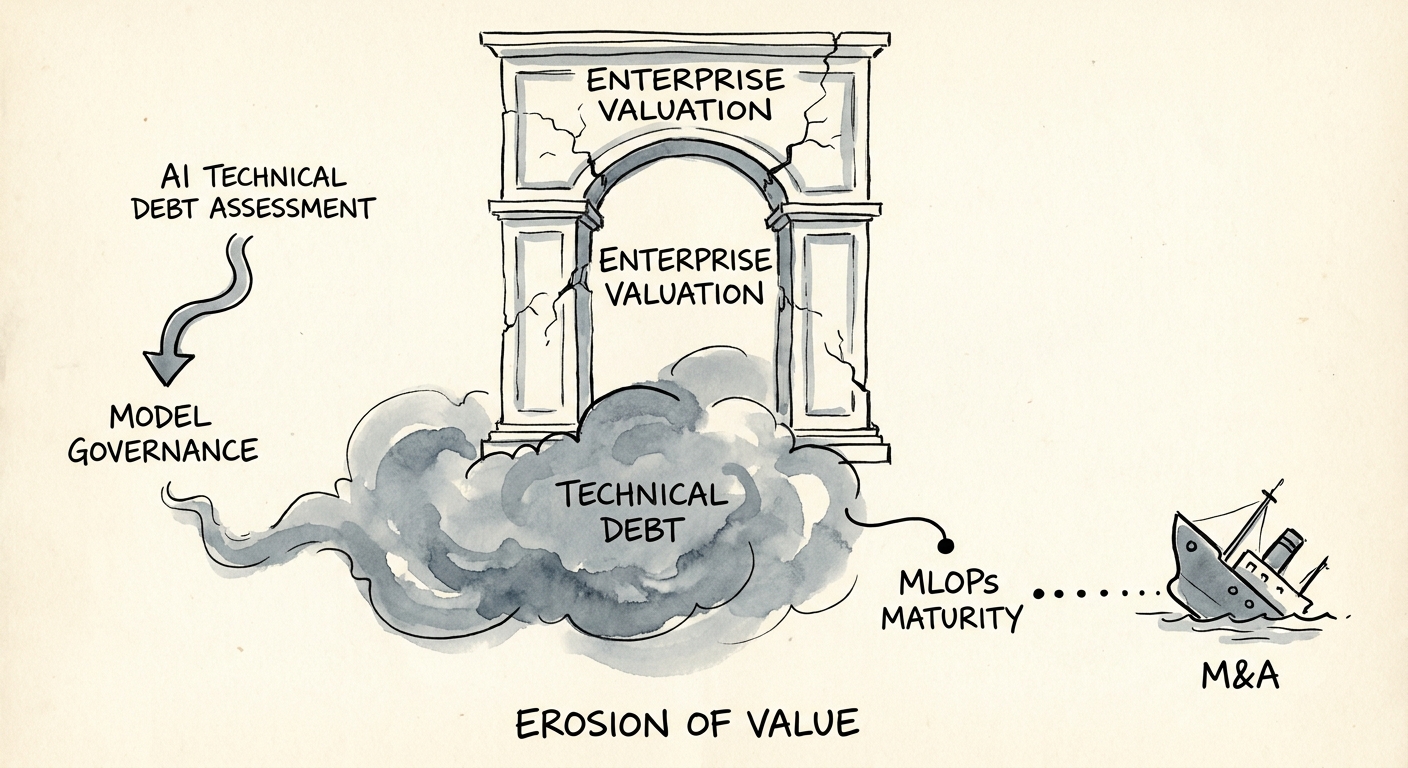

Private equity buyers routinely pay a 14x premium for AI-enabled startups while overlooking what McKinsey's 2025 Global Survey on AI reports: maintenance costs for ungoverned predictive models can consume up to 400% of their original development budget within the first twelve months. You are not buying a finished asset; you are adopting a volatile, living system that starts to degrade the moment it touches live data. Standard technology due diligence treats machine learning models like static codebases. This is a fatal underwriting error. Software code is deterministic. A machine learning model is probabilistic, and its accuracy depends on the statistical distribution of the data feeding it. When that distribution shifts, the model silently rots.

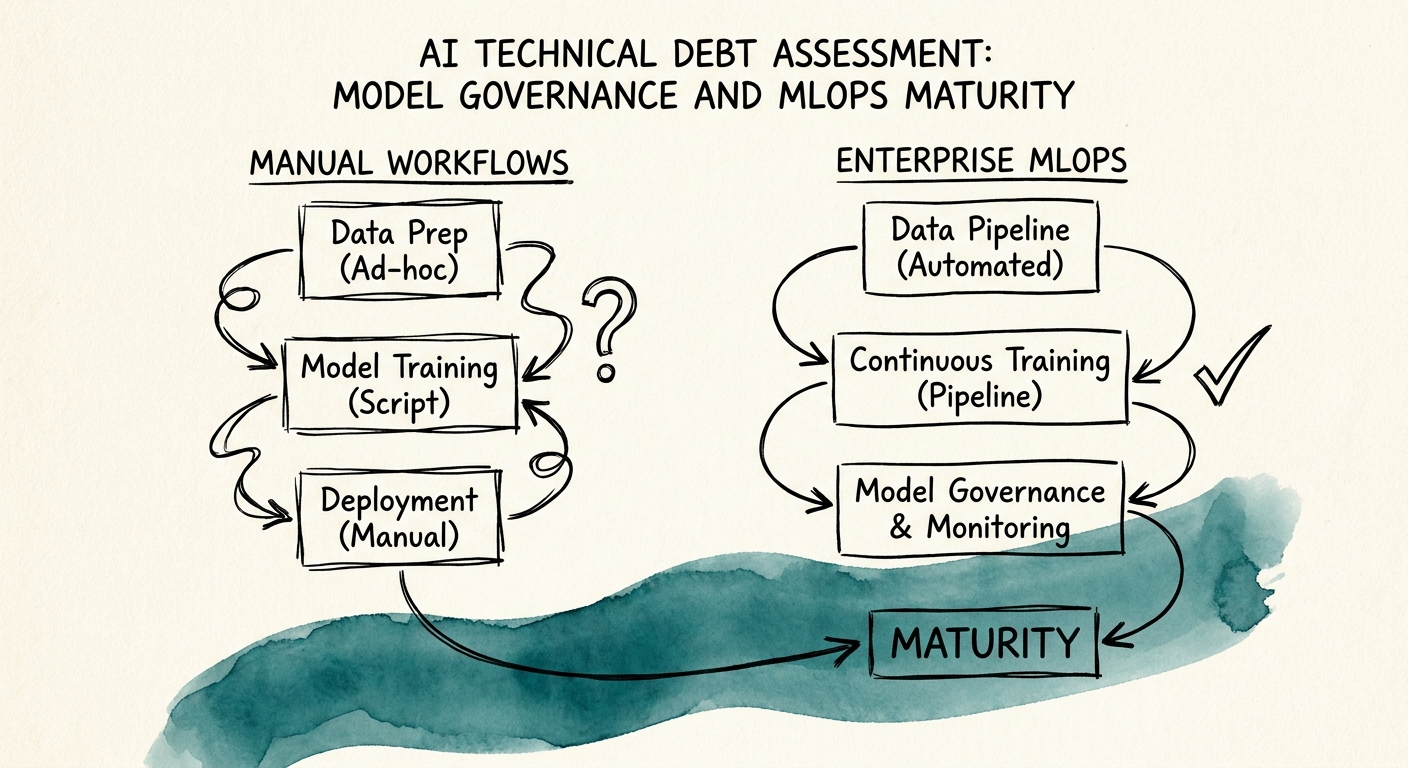

We see deal teams rely on legacy code quality scans, assuming high test coverage means the technology stack is sound. This misses the epicenter of AI technical debt. Traditional tech debt is bad architecture or unrefactored code. AI technical debt is largely invisible to a scan of the GitHub repository. It lives in the tangled web of data pipelines, unversioned training sets, manual deployment scripts, and the absence of continuous integration and continuous deployment (CI/CD) for machine learning workflows. Without these systems, you do not have an AI company; you have an expensive science experiment running in production.

In our last engagement, assessing a $120M generative AI scale-up, we had to re-underwrite the sponsor's deal model just weeks before close. The target boasted 45 models in production, but it had zero automated retraining pipelines and no centralized model registry. The team was tracking model versions and data drift metrics in shared spreadsheets. We uncovered a $4.2M hidden CapEx requirement just to build the basic MLOps infrastructure the company needed to survive the next twelve months of enterprise scale. If you miss this in diligence, you will pay for it out of your own EBITDA margin.

This systemic lack of maturity cripples engineering velocity. MIT Sloan's 2024 Research on ML Technical Debt finds that without mature MLOps pipelines, data science teams spend up to 70% of their operational capacity fixing degraded models and managing infrastructure rather than building new intellectual property. When assessing a target, measure how much of the data science team's capacity goes to maintenance versus new development. Refer to our 10 Red Flags in Technology Due Diligence That Kill Deals for a broader view, but recognize that AI technical debt requires a completely different investigative lens.

Model Governance is a Financial Imperative

If you acquire an AI platform without robust model governance, you are acquiring an unquantified liability. The fundamental law of machine learning is entropy. Models do not age gracefully. Gartner's 2025 AI Engineering Maturity Benchmark shows that 64% of predictive models suffer significant performance degradation, commonly called "model drift", within 90 days of deployment if left unmonitored. When a predictive pricing model drifts, it actively misprices your product. When a risk-scoring algorithm drifts, you absorb bad debt. The financial consequences of ungoverned AI hit the P&L immediately.
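To make "automated drift detection" concrete, here is a minimal sketch of one common check, the Population Stability Index (PSI), which compares the distribution of a single numeric feature at training time against its live distribution. The function name, the ten-bin default, and the 0.25 alert level are illustrative assumptions (0.25 is a widely used rule of thumb, not a figure from the surveys cited above), and a real target's monitoring stack may use a different statistic entirely.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a training-time sample and a live sample of one feature."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the training range; fall back to 1.0 if constant.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp live values outside range
            counts[idx] += 1
        # A small floor keeps empty buckets from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a feature whose live values have shifted upward.
training_sample = [i / 100 for i in range(1000)]
live_sample = [v + 3.0 for v in training_sample]

PSI_ALERT_LEVEL = 0.25  # assumed rule-of-thumb threshold
drift_detected = (
    population_stability_index(training_sample, live_sample) > PSI_ALERT_LEVEL
)
```

In diligence, the useful question is less which statistic the target uses and more whether any check like this runs on a schedule and pages someone when it trips.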

The core issue stems from the "Hero Data Scientist" anti-pattern. Early-stage AI companies rely on brilliant individuals who manually train, tweak, and deploy models from their local machines or bespoke Jupyter notebooks. There is no reproducible pipeline. If that data scientist leaves the firm, the model becomes a black box that no one else can update. This extreme key-person dependency is the definition of unscalable technical debt, and it destroys enterprise value during the hold period.

Acquirers routinely underestimate the cost of operationalizing these bespoke algorithms. EY's 2025 GenAI Investment Framework reports that post-acquisition AI integration costs exceed initial CIM estimates by an average of 45%, driven almost entirely by the target's lack of MLOps maturity. Buyers think they are acquiring the algorithm's intelligence, but they fail to verify whether they are also acquiring the factory required to sustain that intelligence. You must calculate the cost to build that factory before you agree to a purchase price.

To price this risk accurately, operating partners must map the target's maturity against a rigid framework and translate every operational gap into a dollar value. You can use our Technical Debt Quantification Framework: From Assessment to Dollar Value to model the CapEx and OpEx required to drag a chaotic data science team into enterprise-grade MLOps maturity. If the target lacks automated drift detection, add $500k to your remediation budget immediately.

The Due Diligence MLOps Scorecard

To underwrite an AI acquisition effectively, you must audit the MLOps pipeline with the same rigor you apply to GAAP financials. A mature MLOps environment treats data, models, and code as equally versioned, auditable assets. We demand to see a centralized model registry: the system of record detailing exactly which model version is running in production, the specific data snapshot it was trained on, and the hyperparameters used to tune it. Without a model registry, the target is operating blind. You cannot govern what you cannot track.
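As a rough illustration of what that system of record has to capture, the sketch below models a registry in memory. Every name here (`ModelVersion`, `ModelRegistry`, the snapshot IDs, the hyperparameters) is hypothetical; a production registry would persist to a database and usually sits inside a dedicated platform, but the three tracked facts are the ones listed above.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List, Optional


@dataclass(frozen=True)
class ModelVersion:
    name: str
    version: int
    data_snapshot_id: str    # which training data snapshot produced it
    hyperparameters: dict    # settings used to tune it
    registered_at: str       # UTC timestamp, ISO 8601


class ModelRegistry:
    """In-memory stand-in for a centralized model registry."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[ModelVersion]] = {}
        self._production: Dict[str, ModelVersion] = {}

    def register(
        self, name: str, data_snapshot_id: str, hyperparameters: dict
    ) -> ModelVersion:
        versions = self._versions.setdefault(name, [])
        record = ModelVersion(
            name=name,
            version=len(versions) + 1,
            data_snapshot_id=data_snapshot_id,
            hyperparameters=dict(hyperparameters),
            registered_at=datetime.now(timezone.utc).isoformat(),
        )
        versions.append(record)
        return record

    def promote(self, name: str, version: int) -> ModelVersion:
        versions = self._versions.get(name, [])
        if not 1 <= version <= len(versions):
            raise ValueError(f"unknown version {version} for model {name!r}")
        self._production[name] = versions[version - 1]
        return self._production[name]

    def in_production(self, name: str) -> Optional[ModelVersion]:
        return self._production.get(name)


# Hypothetical usage: answer "what is live, and what was it trained on?"
registry = ModelRegistry()
registry.register("pricing", "snapshot-2024-01", {"learning_rate": 0.1})
registry.register("pricing", "snapshot-2024-06", {"learning_rate": 0.05})
registry.promote("pricing", 2)
live = registry.in_production("pricing")
```

If the target cannot answer the `in_production` question for each of its models without opening a spreadsheet, that is the gap to price.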

Furthermore, enterprise buyers and regulators are raising the market standard. PwC's 2026 AI Trust and Governance Survey indicates that 82% of enterprise acquirers now demand a formal, third-party model governance audit before signing an LOI for any AI-native business. If you buy a company with immature MLOps today, you will struggle to sell it tomorrow. Future acquirers will discount your valuation if your models are unexplainable, biased, or non-compliant with emerging frameworks like the EU AI Act.

We evaluate targets against three non-negotiable MLOps pillars. First, Continuous Training (CT): the system must automatically trigger a retraining pipeline when performance metrics drop below a defined threshold. Second, Feature Stores: centralized repositories that guarantee the features used for offline training exactly match the features served for real-time inference. Third, Shadow Deployments: the ability to run a new challenger model alongside the live champion model without impacting the end user, comparing outputs before routing any live traffic to the challenger. If the target lacks these pillars, its ARR is resting on a fragile foundation.
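The first and third pillars reduce to surprisingly little logic, which is part of why their absence is a red flag. The sketch below is a simplified illustration under stated assumptions: models are plain Python callables, the metric is "higher is better", and the function names and the 0.80 threshold are hypothetical. It does not cover feature stores, which are an infrastructure concern rather than a few lines of code.

```python
from typing import Callable, Dict, List

Model = Callable[[Dict[str, float]], float]


def needs_retraining(recent_metric: float, threshold: float) -> bool:
    """Continuous Training trigger: fire when the live metric falls below threshold."""
    return recent_metric < threshold


def serve_with_shadow(
    request: Dict[str, float],
    champion: Model,
    challenger: Model,
    shadow_log: List[dict],
) -> float:
    """Return the champion's answer; record the challenger's answer on the side."""
    live_prediction = champion(request)
    try:
        shadow_prediction = challenger(request)
        shadow_log.append(
            {
                "request": request,
                "champion": live_prediction,
                "challenger": shadow_prediction,
            }
        )
    except Exception as exc:
        # A failing challenger must never affect what the end user receives.
        shadow_log.append(
            {"request": request, "champion": live_prediction, "error": repr(exc)}
        )
    return live_prediction


# Hypothetical models: the challenger prices 10% higher than the champion.
def champion_model(features: Dict[str, float]) -> float:
    return features["base_price"] * 1.00


def challenger_model(features: Dict[str, float]) -> float:
    return features["base_price"] * 1.10


log: List[dict] = []
served = serve_with_shadow(
    {"base_price": 100.0}, champion_model, challenger_model, log
)
retrain = needs_retraining(recent_metric=0.74, threshold=0.80)
```

The shadow log is what lets a team compare challenger and champion on real traffic before any customer sees the new model; ask the target to show you theirs.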

You cannot buy AI without buying MLOps. Treat the absence of model governance not as a post-close operational improvement, but as a critical valuation defect. Uncover the liabilities buried in the algorithms before the seller hands you the keys to a depreciating asset. For a comprehensive look at the legal and structural risks inherent in these models, review our Intellectual Property Audit Checklist for AI/ML Acquisitions: The "Poisoned Model" Risk. Price the debt, fund the remediation, and build the factory.