Enterprise AI initiatives that launch without a formally documented Center of Excellence (CoE) can burn up to 70% of their budget while trapped in pilot purgatory, generating zero production revenue. I have rebuilt this team structure three times in the past year alone for mid-market portfolio companies, and the diagnosis is always identical. Executives assume building enterprise AI capabilities is simply a matter of hiring machine learning engineers and buying compute. It is not. Enterprise AI is fundamentally a process documentation and governance challenge, and treating it as a pure software development exercise is why your margins are collapsing.

In our last engagement with a $45M SaaS platform, the CEO proudly showed me six different generative AI features scattered across their product suite. None of them were governed by a centralized playbook. Engineering teams were duplicating data cleansing efforts, utilizing conflicting model evaluation rubrics, and exposing the company to massive compliance risks. When we implemented a formal AI CoE, we didn't write a single line of code. We wrote standard operating procedures. The data backs this brutal reality. According to Gartner's 2024 AI Scaling and Governance Benchmark, organizations lacking centralized, documented AI governance frameworks see 70% of their models fail to cross the chasm from prototype to production. You are not scaling AI; you are funding a very expensive science fair.

We mandate that every client transitioning from ad-hoc AI features to true enterprise capability establish a CoE before their next board meeting. A proper CoE dictates exactly how data lineage is tracked, how models are audited for bias, and how intellectual property is ring-fenced. This creates a repeatable factory for deployment. McKinsey's 2024 State of AI Report quantifies this operational leverage, revealing that organizations with a documented, cross-functional Center of Excellence capture 2.5x more financial value from their AI investments than their decentralized peers. If you are preparing for a liquidity event in the next 24 months, your buyer will explicitly audit this architecture.

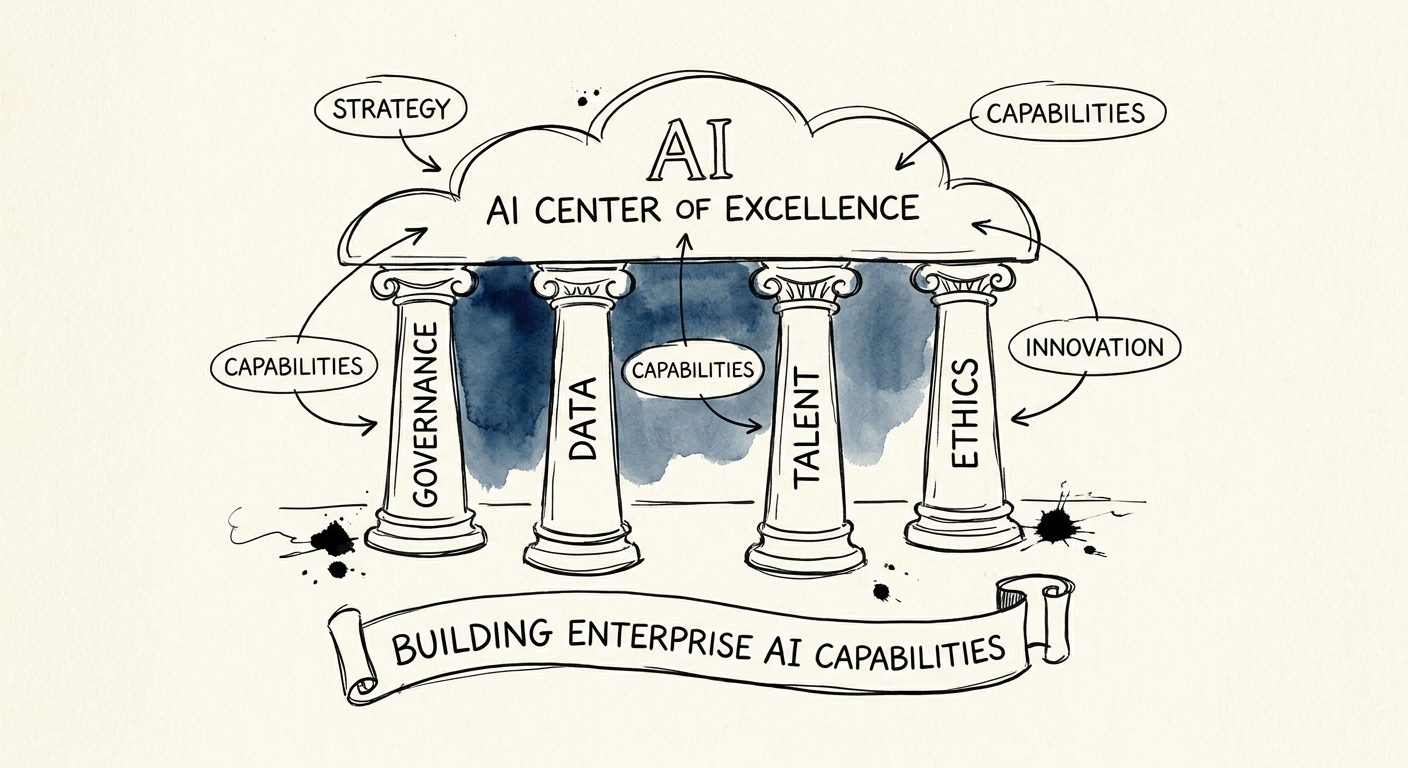

The Architecture of an Exit-Ready AI Center of Excellence

The hallmark of a mature AI CoE is relentless, unapologetic process documentation. We structure these centers around three non-negotiable pillars: Data Lineage Protocols, Model Evaluation Standardization, and Continuous Compliance Auditing. If your technical lead cannot point to a master repository detailing these three workflows, you do not have a CoE. You have a shadow IT department accumulating technical debt that will not scale. We start technical debt remediation by requiring engineering teams to write down exactly how they ingest, transform, and store the data feeding their language models.

First, data lineage protocols must be bulletproof. When private equity buyers audit your AI capabilities, their first question is never about model accuracy; it is about data provenance. Can you prove you have the legal right to train your models on the underlying datasets? MIT Sloan's Research on AI Centers of Excellence demonstrates that standardized, strictly documented data ingestion processes reduce overall model deployment times by 45%, simply because developers stop reinventing the compliance wheel. We force our portfolio companies to document every data source, transformation step, and access control policy before a single training run is authorized.
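To make the "no documentation, no training run" rule concrete, here is a minimal sketch of what such a gate could look like in code. Everything in it is an assumption for illustration: the `DataSourceRecord` schema, the field names, and the `authorize_training_run` helper are hypothetical, not a standard or a tool we ship. Your own lineage register will carry more fields.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class DataSourceRecord:
    """One documented data source (hypothetical schema, for illustration only)."""
    name: str
    license_basis: str  # legal right to train on it, e.g. "customer contract"
    transformations: list[str] = field(default_factory=list)  # ordered steps
    access_policy: str = ""  # who may read the data, and how


def missing_documentation(record: DataSourceRecord) -> list[str]:
    """Return the lineage fields that are still undocumented for one source."""
    gaps = []
    if not record.license_basis.strip():
        gaps.append("license_basis")
    if not record.transformations:
        gaps.append("transformations")
    if not record.access_policy.strip():
        gaps.append("access_policy")
    return gaps


def authorize_training_run(
    records: list[DataSourceRecord],
) -> tuple[bool, dict[str, list[str]]]:
    """Approve a run only if sources are registered and none has gaps.

    Returns (approved, gaps_by_source_name).
    """
    gaps = {r.name: missing_documentation(r) for r in records}
    gaps = {name: g for name, g in gaps.items() if g}
    # An empty register is treated as undocumented, so it is rejected too.
    return (bool(records) and not gaps, gaps)
```

Wired into a CI step or a training launcher, a check like this turns the written protocol into something an auditor can see being enforced: the run is refused and the returned dictionary names exactly which source is missing which field.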

Second, your CoE must enforce standardized model evaluation. I see companies running disparate evaluation frameworks for every single feature, making it impossible for the C-suite to benchmark performance or ROI. Your CoE documentation must define the exact metrics (precision, recall, latency, and token cost) that determine whether a model is approved for production. The market is waking up to this deficit. PwC's 2024 AI Business Survey found that 68% of executives admit their current AI governance frameworks entirely lack the specific, documented operational procedures required to measure model ROI accurately. This lack of standardization is one of the most glaring technology due diligence red flags we see in the market today.
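A single, shared approval gate is the simplest way to express that standard. The sketch below assumes the four metrics named above are already measured by your evaluation harness. The class names, the choice of p95 latency and cost per 1,000 tokens as units, and every threshold value are hypothetical placeholders, not recommended targets.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    """Measured metrics for one candidate model (units are assumptions)."""
    precision: float
    recall: float
    p95_latency_ms: float
    cost_per_1k_tokens_usd: float


@dataclass(frozen=True)
class ProductionGate:
    """One company-wide set of approval thresholds, owned by the CoE."""
    min_precision: float
    min_recall: float
    max_p95_latency_ms: float
    max_cost_per_1k_tokens_usd: float

    def failures(self, result: EvalResult) -> list[str]:
        """Return the names of the metrics that miss their threshold."""
        failed = []
        if result.precision < self.min_precision:
            failed.append("precision")
        if result.recall < self.min_recall:
            failed.append("recall")
        if result.p95_latency_ms > self.max_p95_latency_ms:
            failed.append("p95_latency_ms")
        if result.cost_per_1k_tokens_usd > self.max_cost_per_1k_tokens_usd:
            failed.append("cost_per_1k_tokens_usd")
        return failed

    def approves(self, result: EvalResult) -> bool:
        """A model is approved only if no metric fails."""
        return not self.failures(result)


# Placeholder thresholds; a real CoE would set and version these centrally.
gate = ProductionGate(
    min_precision=0.90,
    min_recall=0.85,
    max_p95_latency_ms=800.0,
    max_cost_per_1k_tokens_usd=0.02,
)
candidate = EvalResult(
    precision=0.93,
    recall=0.81,
    p95_latency_ms=640.0,
    cost_per_1k_tokens_usd=0.015,
)
print(gate.approves(candidate), gate.failures(candidate))  # False ['recall']
```

The design point is that every feature team evaluates against the same `ProductionGate` instance, so the C-suite compares like with like and a rejection always comes with the specific metric that caused it.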

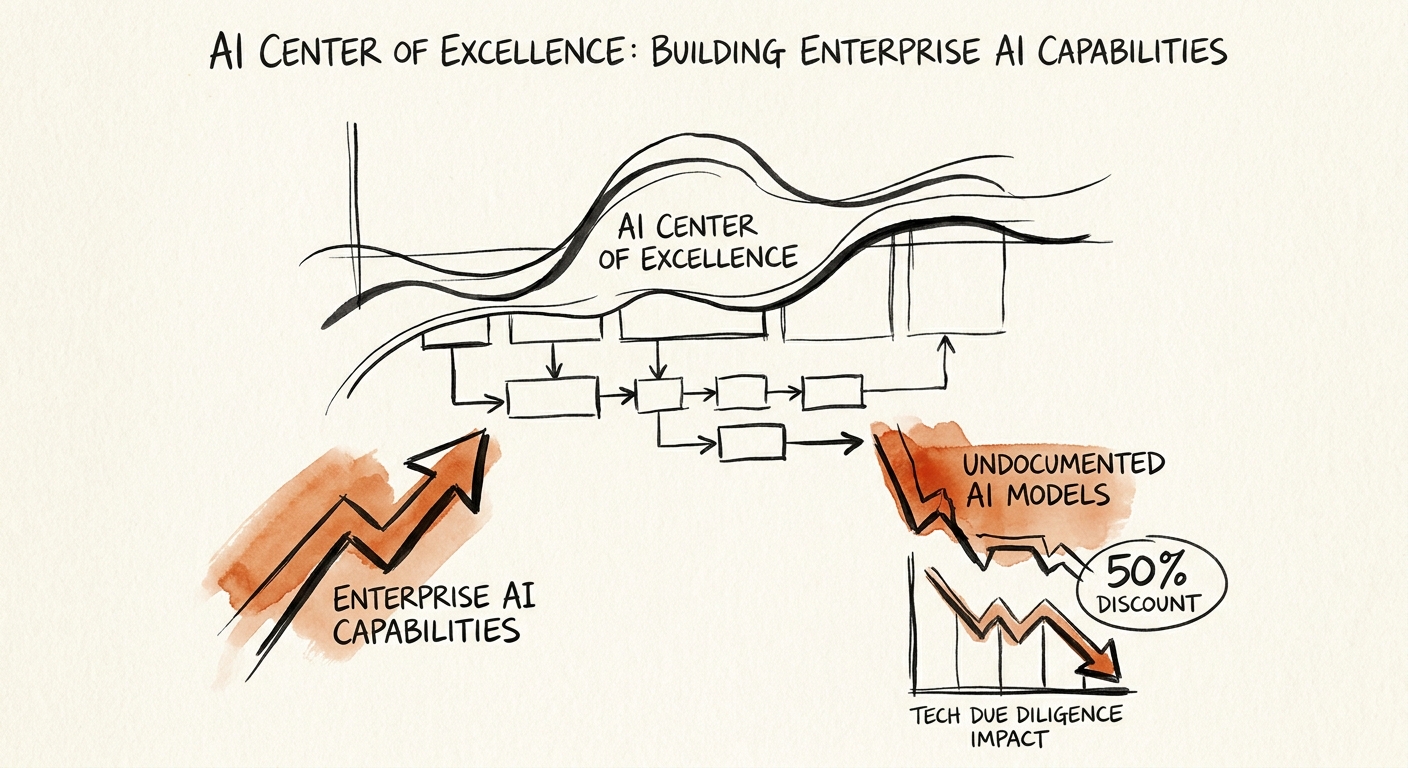

Translating Process into Valuation Multiples

Process documentation within your AI CoE is not a bureaucratic hurdle; it is a direct driver of your enterprise valuation. Buyers in the current M&A environment are terrified of inheriting rogue AI deployments. They are terrified of hallucination risks, copyright infringement, and unmonitored cloud consumption costs. A rigorously documented CoE acts as an insurance policy for your buyer, proving that your AI capabilities are systems-dependent rather than hero-dependent. When I audit a target company on behalf of a sponsor, the presence of a mature CoE immediately shifts the narrative from risk mitigation to revenue expansion.

The financial impact of this governance is stark. Without a centralized CoE governing your architecture, your proprietary models are heavily discounted during the transaction. According to EY's 2025 AI Scaling and Investment Study, AI initiatives lacking formalized process documentation and clear data lineage face up to a 50% valuation discount during technical due diligence. Buyers will simply strip the projected AI revenue out of the quality of earnings report if they cannot verify how the sausage is made. This makes fulfilling intellectual property documentation requirements not just a legal exercise, but a core component of your exit strategy.

We tell founders and operating partners the same thing: Stop treating AI as an R&D experiment. Build the Center of Excellence. Document the ingestion pipelines. Standardize the deployment checklists. If you build the operational scaffolding first, the artificial intelligence will scale profitably. If you skip the documentation and jump straight to the deployment, you will build a sophisticated cash incinerator that detonates the moment a buyer looks under the hood.