In our last engagement, a founder proudly presented a 94% code coverage metric in their technical data room, and it cost them a 1.5x turn on their EBITDA multiple. The buyer's technical operating partner didn't see engineering excellence. They saw a development team that spent 30% of its capacity writing tests for trivial getter and setter functions while ignoring the complex payment processing module. Chasing absolute code coverage is a fast track to a valuation haircut because it masks the true fragility of your underlying architecture. When we run technical due diligence for private equity buyers in 2026, we look past the vanity metrics to find the rot. Unquantified technical debt is a silent deal killer. According to McKinsey's 2024 Digital Transformation Report, a staggering 70% of software initiatives fail specifically due to unquantified technical debt buried deep within the source code.

We evaluate code coverage not as a badge of honor but as a proxy for risk maturity. When I see a SaaS company pushing for 100% test coverage, I know immediately that its engineering culture prioritizes arbitrary KPIs over shipping tangible enterprise value. Conversely, anemic coverage indicates a codebase where a single developer's departure could bring operations to a halt. A narrow band of pragmatic testing separates premium-valued platforms from integration nightmares. To understand where your target stands, start with the macroeconomic cost of neglected codebases. The Technical Debt Index from the Consortium for Information & Software Quality (CISQ) puts the cost of poor software quality in the US alone at $2.41 trillion. When PE firms underwrite a deal, they are actively hunting for their share of that $2.41 trillion liability. Every percentage point of missing coverage on critical code comes straight off the purchase price, reclassified as future remediation CapEx.

The Real M&A Benchmarks: Red Lines and Green Lights

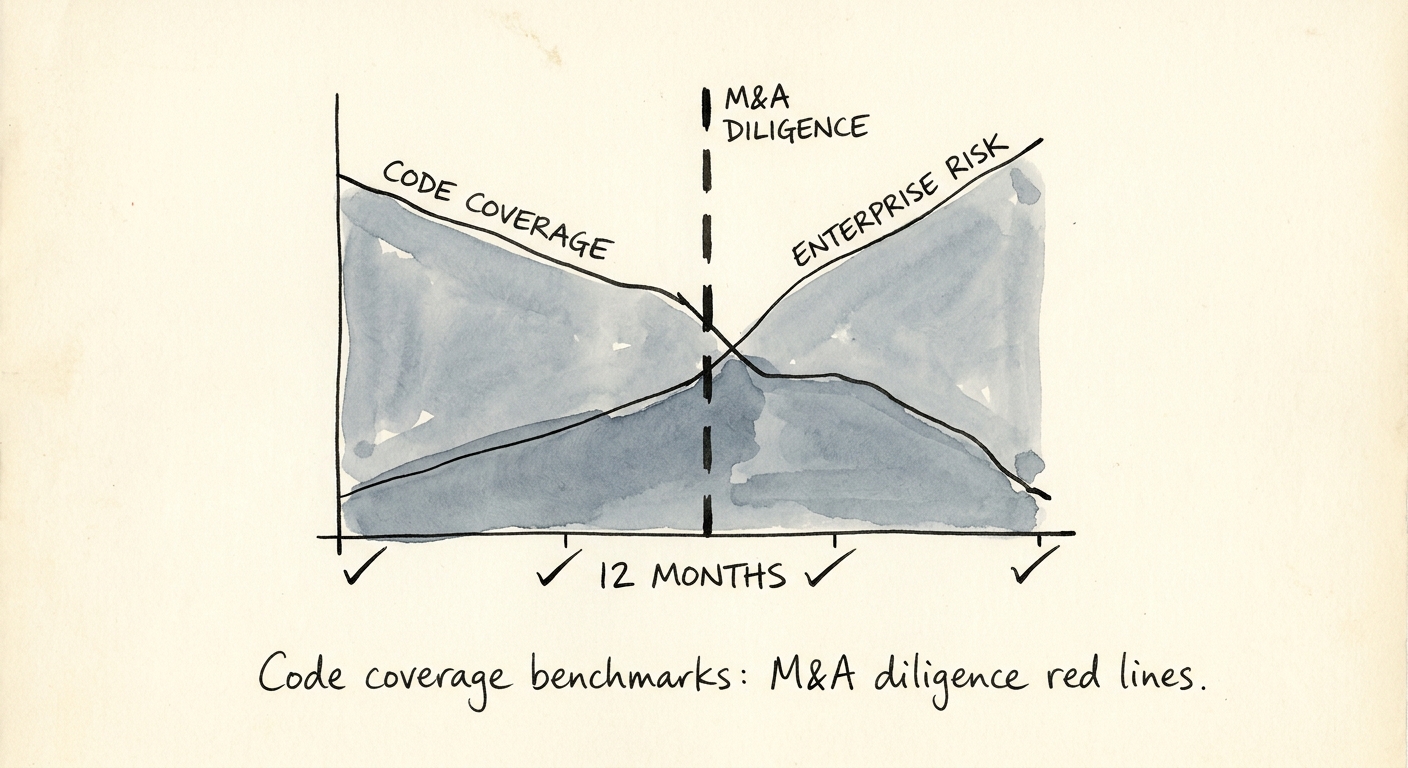

Specific code coverage benchmarks act as rigid red lines during the 30-day technical diligence sprint. Our data shows that anything below 40% line coverage is an immediate deal red flag. A sub-40% metric means the application depends heavily on manual QA, which leads to ballooning defect rates and glacial deployment cycles. Acquirers immediately model the cost of retrofitting automated tests and typically deduct $1.5M to $3M from enterprise value, depending on codebase size.
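To make the retrofit estimate concrete, here is a back-of-the-envelope sketch of how such a cost model might be structured. Every parameter is a hypothetical placeholder rather than a figure from our deals. This includes the 70% default remediation target, the test-writing throughput in statements per developer-day, and the loaded daily cost. Plug in your own assumptions.

```python
from dataclasses import dataclass


@dataclass
class RetrofitInputs:
    """Inputs for a rough test-retrofit estimate. All defaults are hypothetical."""
    total_statements: int                  # executable statements in the codebase
    current_coverage: float                # current line coverage as a fraction, 0.0-1.0
    target_coverage: float = 0.70          # hypothetical remediation target
    statements_per_dev_day: float = 150.0  # hypothetical test-writing throughput
    cost_per_dev_day: float = 1_000.0      # hypothetical fully loaded daily cost (USD)


def retrofit_cost(inp: RetrofitInputs) -> float:
    """Estimate the cost of writing tests to lift coverage up to the target."""
    # Only the shortfall below the target needs new tests; never go negative.
    gap = max(0.0, inp.target_coverage - inp.current_coverage)
    statements_to_cover = gap * inp.total_statements
    dev_days = statements_to_cover / inp.statements_per_dev_day
    return dev_days * inp.cost_per_dev_day


# Hypothetical codebase: 500k statements at 35% line coverage.
estimate = retrofit_cost(RetrofitInputs(total_statements=500_000, current_coverage=0.35))
print(f"Estimated retrofit cost: ${estimate:,.0f}")
```

The model is deliberately linear in the coverage gap. A more careful estimate would likely weight hard-to-test modules such as billing or authentication more heavily.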

For enterprise SaaS, the diligence sweet spot lies between 60% and 75%. Teams in this range demonstrate pragmatic risk management. As noted in LTS Group's 2025 Tech Debt Benchmark Guide, a target of 70% coverage is widely accepted as the optimal balance between system reliability and feature velocity. We want to see comprehensive automated coverage on the core business logic, billing engines, and authentication pathways, with UI layers and third-party integrations covered by targeted end-to-end tests. If you are preparing for market, shelve any grand rewrite and focus your limited engineering capacity on retrofitting tests onto your highest-risk modules.

Anything past 85% is the "Vanity Metric Danger Zone." The marginal utility of writing tests plummets after 80%. When we audit teams boasting 95% coverage, we routinely find brittle test suites that break on every minor UI tweak, effectively paralyzing the CI/CD pipeline. These teams carry massive "testing debt," a hidden tax that inflates engineering lead times. The market is rapidly losing patience with this architectural fragility. A recent Forrester Technical Debt Report highlights that 75% of technology executives expect their organization's technical debt to reach high severity levels by the end of 2026. Private equity buyers are weaponizing this statistic. They deploy automated scanning tools to expose test coverage gaps, then use those findings to negotiate heavy post-close escrows or immediate reductions in purchase price.
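The benchmark bands can be expressed as a small classifier. This minimal sketch takes a global line-coverage percentage and returns the matching band from the text. The function name is our own assumption. So is the "intermediate" label for the 40-60% and 75-85% ranges, which the benchmarks leave unclassified.

```python
def diligence_band(line_coverage_pct: float) -> str:
    """Map global line coverage (0-100) onto the diligence benchmark bands."""
    if not 0.0 <= line_coverage_pct <= 100.0:
        raise ValueError("coverage must be a percentage between 0 and 100")
    if line_coverage_pct < 40.0:
        return "red flag"            # heavy dependence on manual QA
    if 60.0 <= line_coverage_pct <= 75.0:
        return "sweet spot"          # pragmatic, risk-focused coverage
    if line_coverage_pct > 85.0:
        return "vanity danger zone"  # likely brittle, over-tested suite
    return "intermediate"            # 40-60% or 75-85%: not one of the named bands


for pct in (32.0, 68.0, 80.0, 94.0):
    print(f"{pct:.0f}% -> {diligence_band(pct)}")
# 32% -> red flag
# 68% -> sweet spot
# 80% -> intermediate
# 94% -> vanity danger zone
```

A single global number is only a first filter. It says nothing about which modules the tests actually protect.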

The 'Code Coverage Trend' Audit

Smart buyers have stopped looking at a single code coverage snapshot. Instead, we audit the "Code Coverage Trend" over the trailing 12 months. An application sitting at 65% coverage but declining a steady 1% month over month is a distressed asset in the making. The decline shows the engineering team is shipping new features without corresponding tests, a structural breakdown in DevOps discipline. According to Count.co's 2026 SaaS Coverage Trends, healthy enterprise platforms need to maintain a positive coverage trend of +0.5% to +2% per month to outpace code decay.

When we led a $150M tech-enabled services carve-out last year, we bypassed the top-line coverage score entirely and audited the test distribution map. The target's repository boasted 82% global line coverage. Yet the revenue-recognition engine, the exact component driving the investment thesis, had a terrifying 14% coverage. That single discovery changed the entire deal structure, converting an all-cash exit into a performance-weighted earnout. A global line-coverage figure reveals neither where tests are concentrated nor which decision paths they actually exercise. This is exactly why the complete technology due diligence checklist for software acquisitions demands rigorous branch coverage analysis over standard line coverage.
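The distribution-map audit amounts to recomputing coverage per module instead of trusting the repository-wide number. Here is a minimal sketch, assuming per-module covered and total line counts have already been extracted from a coverage report. The module names, line counts, and 70% floor for critical modules are hypothetical. They were chosen only to reproduce the 82% global and 14% revenue-engine split from the carve-out.

```python
from dataclasses import dataclass


@dataclass
class ModuleCoverage:
    name: str
    covered_lines: int
    total_lines: int
    critical: bool = False  # tagged by the reviewer as thesis-critical

    @property
    def pct(self) -> float:
        return 100.0 * self.covered_lines / self.total_lines if self.total_lines else 100.0


def global_coverage(modules: list[ModuleCoverage]) -> float:
    """Repository-wide line coverage, weighted by module size."""
    total = sum(m.total_lines for m in modules)
    covered = sum(m.covered_lines for m in modules)
    return 100.0 * covered / total if total else 100.0


def critical_gaps(modules: list[ModuleCoverage], floor: float = 70.0) -> list[ModuleCoverage]:
    """Critical modules whose own coverage falls below the (hypothetical) floor."""
    return [m for m in modules if m.critical and m.pct < floor]


# Hypothetical repository mirroring the proportions in the carve-out anecdote.
repo = [
    ModuleCoverage("web_ui", covered_lines=8_100, total_lines=9_000),
    ModuleCoverage("public_api", covered_lines=8_160, total_lines=10_000),
    ModuleCoverage("revenue_recognition", covered_lines=140, total_lines=1_000, critical=True),
]
print(f"global: {global_coverage(repo):.1f}%")  # global: 82.0%
for m in critical_gaps(repo):
    print(f"critical gap: {m.name} at {m.pct:.1f}%")  # critical gap: revenue_recognition at 14.0%
```

Because the global figure is weighted by module size, a small but critical module can sit at 14% while barely moving the headline number.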

To survive modern diligence, you must pivot from generic test metrics to strategic risk mitigation. Cyber vulnerabilities are closely correlated with untested edge cases in legacy codebases. As outlined in Bain & Company's 2026 Cybersecurity Outlook, organizations will need to double their current security and infrastructure spending to combat AI-enabled attacks that actively exploit these gaps. Do not let unquantified testing debt destroy your exit. Map your test coverage precisely to your revenue-generating features, enforce strict branch coverage on your critical transaction paths, and treat your automated test suite as a Tier-1 financial asset.
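Enforcing branch coverage on critical transaction paths can be sketched as a CI gate. The gate reads per-file line and branch counts and reports any critical path that falls below a branch-coverage floor. Tools such as coverage.py can measure branch coverage when run with `--branch`. How those counts are mapped into the `PathCoverage` records here is left as an assumption, and the file paths and 90% floor are hypothetical. The example also shows why branch coverage matters: a file can execute every line while exercising only half of its decision outcomes.

```python
from dataclasses import dataclass


@dataclass
class PathCoverage:
    path: str
    covered_lines: int
    total_lines: int
    covered_branches: int
    total_branches: int
    critical: bool = False  # e.g. billing, payments, authentication


def pct(covered: int, total: int) -> float:
    return 100.0 * covered / total if total else 100.0


def branch_gate(paths: list[PathCoverage], floor: float = 90.0) -> list[str]:
    """Return one message per critical path whose branch coverage is below the floor."""
    failures = []
    for p in paths:
        branch = pct(p.covered_branches, p.total_branches)
        if p.critical and branch < floor:
            line = pct(p.covered_lines, p.total_lines)
            failures.append(f"{p.path}: {line:.0f}% lines but only {branch:.0f}% branches")
    return failures


report = [
    # Every line runs, yet only half of the branch outcomes are exercised.
    PathCoverage("billing/charge.py", 120, 120, 10, 20, critical=True),
    PathCoverage("ui/theme.py", 30, 80, 2, 10),  # non-critical: not gated
]
for msg in branch_gate(report):
    print(msg)  # billing/charge.py: 100% lines but only 50% branches
```

In a CI job, a non-empty result from `branch_gate` would translate into a non-zero exit status that blocks the merge. Non-critical files stay out of the gate, so the suite does not drift back toward the vanity zone.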