The Invisible Balance Sheet

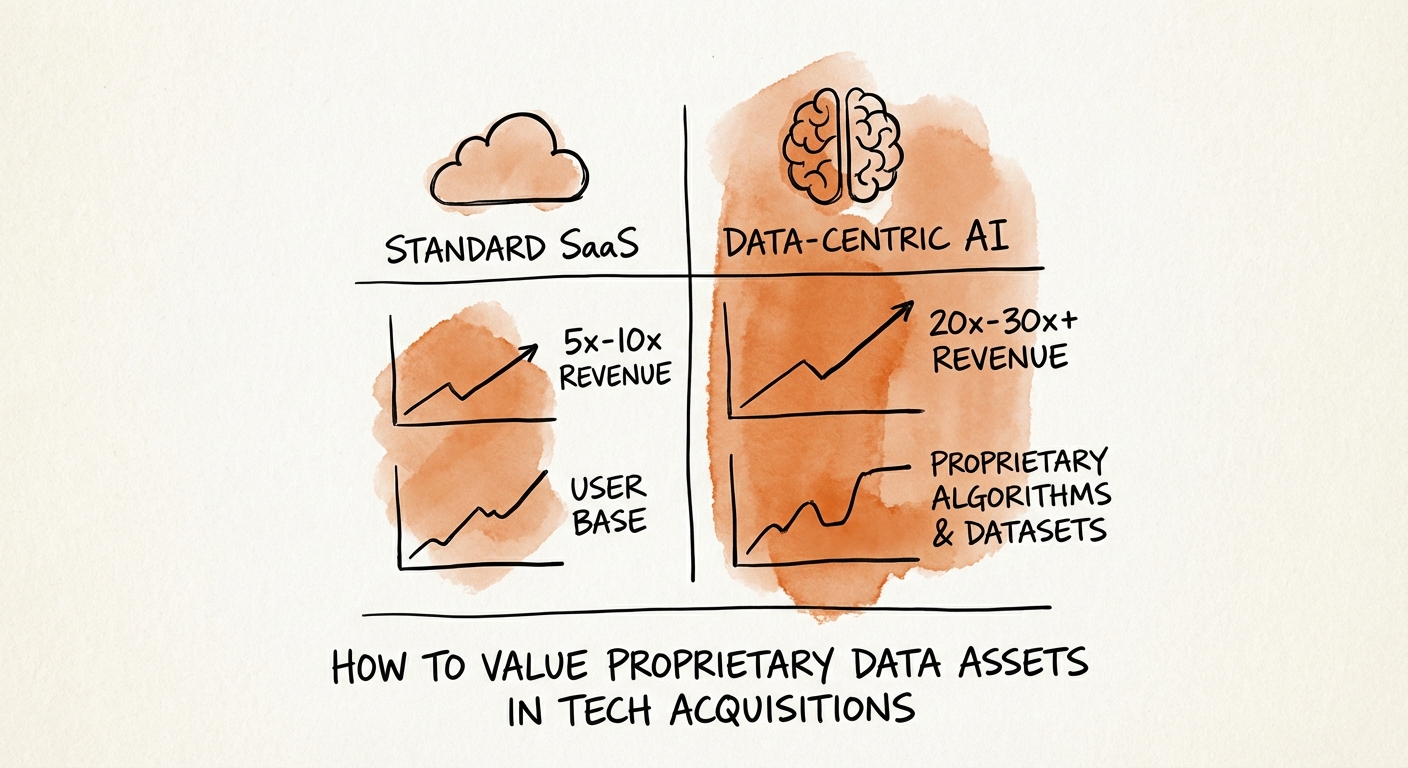

In 2026, the gap between a standard SaaS exit and a category-defining “data platform” exit is roughly 20 turns of revenue. According to 2025 M&A transaction data, standard horizontal SaaS companies traded at a median of 6.0x revenue, while companies with proprietary data assets and AI capabilities commanded an average multiple of 25.8x, a difference of 19.8 turns. This disparity reveals a fundamental shift in how private equity sponsors and strategic acquirers evaluate intellectual property.

For the last decade, “data” was treated as a byproduct of software workflow: digital exhaust parked in cold storage. Today, in the age of generative AI, that exhaust has become the fuel. Acquirers are no longer just buying your Annual Recurring Revenue (ARR); they are buying your training data. The valuation question has moved from “How much churn do you have?” to “How unique is your context window?”

However, not all data is an asset. Much of it is a liability in disguise (see: The Price of Compliance Gaps). To bridge the gap between a 6x and a 25x multiple, founders must prove their data is not just stored, but structured, scarce, and safe. The market is currently bifurcating into “workflow containers” (commoditized) and “intelligence systems” (premium).

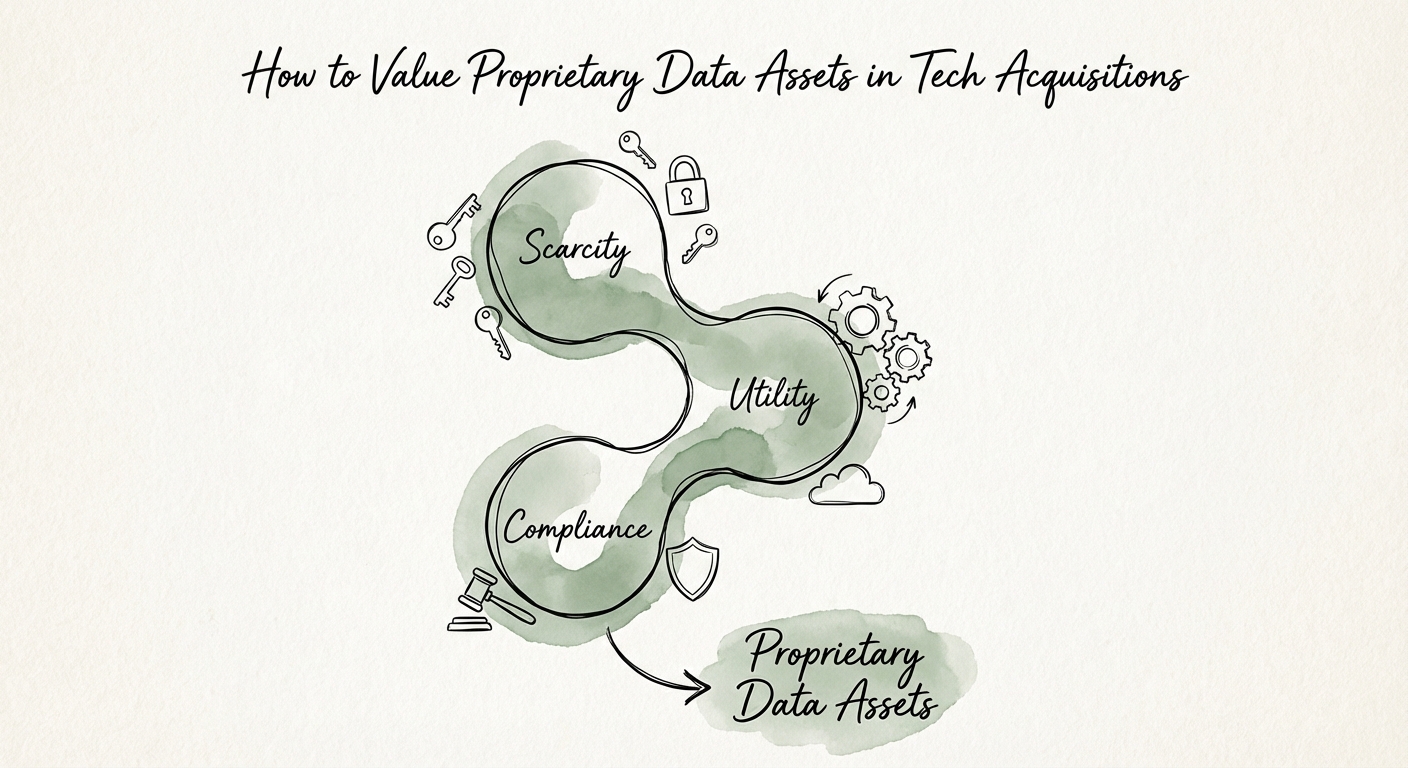

The 3-Pronged Data Valuation Framework

To defend a premium valuation, you must present your data asset through three specific lenses during due diligence. This is the framework sophisticated PE buyers use to determine if your data is a moat or a mirage.

1. Scarcity and Exclusivity (The “Alpha” Test)

Data that can be scraped from the public web is worth $0. Value accrues only to proprietary context that cannot be replicated by a generic Large Language Model (LLM). Metrics to track include:

- Unique Entity Records: The count of business objects (e.g., invoices, patient outcomes, supply chain nodes) that exist only in your system.

- Temporal Depth: AI models crave history. Five years of clean, longitudinal data on customer behavior is far more valuable than a static snapshot.

- Feedback Loops: Evidence that your data gets better as customers use the product (see: The ‘Lakehouse’ Multiplier).
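
As a rough illustration, the first two metrics above can be computed from an export of record identifiers and dates. The sketch below is a minimal example under stated assumptions: the function names, the comparison against a set of publicly available identifiers, and the sample values are all hypothetical, and are not drawn from any standard diligence toolkit.

```python
from datetime import date
from typing import Iterable


def unique_entity_count(own_ids: Iterable[str], public_ids: Iterable[str]) -> int:
    """Count distinct entities present in our system but absent from a public reference set."""
    return len(set(own_ids) - set(public_ids))


def temporal_depth_years(record_dates: Iterable[date]) -> float:
    """Span between the earliest and latest record, in 365.25-day years."""
    dates = list(record_dates)
    if not dates:
        return 0.0
    return (max(dates) - min(dates)).days / 365.25


# Hypothetical sample data, for illustration only.
own = ["inv-1", "inv-2", "inv-3", "inv-2"]
public = ["inv-3"]
print(unique_entity_count(own, public))
print(round(temporal_depth_years([date(2020, 1, 1), date(2025, 1, 1)]), 1))
```

In practice, deciding what counts as “publicly available” is the hard part; the set difference here only shows the shape of the calculation.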

2. Utility and AI-Readiness (The “Structure” Test)

Buyers are now using AI agents to conduct diligence. If your data is locked in unstructured PDFs or siloed legacy databases, it is effectively invisible. A “Data Readiness Score” is becoming a standard diligence artifact. You must demonstrate:

- Normalization: Are fields standardized across the customer base?

- API Accessibility: Can the data be programmatically ingested for model fine-tuning?

- Metadata Richness: Is the data tagged with context (who, what, when, why)?
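
There is no single agreed formula for a “Data Readiness Score.” One way to make the three criteria above concrete is to rate each from 0 to 1 and average them. The sketch below assumes equal weighting and a 0 to 100 scale; both choices, and the function name, are illustrative assumptions rather than an industry standard.

```python
def data_readiness_score(
    normalization: float,
    api_accessibility: float,
    metadata_richness: float,
) -> float:
    """Equal-weight average of three 0-to-1 ratings, scaled to 0-100 (assumed formula)."""
    ratings = (normalization, api_accessibility, metadata_richness)
    for rating in ratings:
        if not 0.0 <= rating <= 1.0:
            raise ValueError("each rating must be between 0 and 1")
    return round(100 * sum(ratings) / len(ratings), 1)


# Hypothetical ratings: well-normalized fields, partial API coverage, thin metadata.
print(data_readiness_score(0.9, 0.6, 0.3))
```

A buyer may weight the criteria differently; the point is to arrive at diligence with a number you can explain and defend.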

3. Provenance and Compliance (The “Poison Pill” Test)

The fastest way to kill a deal in 2026 is “commingling risk.” If your proprietary dataset includes scraped data, PII (Personally Identifiable Information) without consent, or third-party IP, it becomes a toxic asset. We break this down further in Intellectual Property Audit Checklist for AI/ML Acquisitions. You must provide a “Data Bill of Materials” proving the lineage of every record.
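
To show what one entry in a “Data Bill of Materials” might capture, here is a minimal sketch. The field names, the `Source` categories, and the `commingling_flags` helper are hypothetical; they simply mirror the three risks named above (scraped data, PII without consent, third-party IP) and do not represent a formal standard.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Source(Enum):
    FIRST_PARTY = "first_party"
    LICENSED = "licensed"
    SCRAPED = "scraped"


@dataclass(frozen=True)
class BomEntry:
    dataset: str
    source: Source
    contains_pii: bool
    consent_documented: bool
    third_party_ip: bool


def commingling_flags(entry: BomEntry) -> List[str]:
    """Return the commingling risks this entry raises (empty list if none)."""
    flags = []
    if entry.source is Source.SCRAPED:
        flags.append("scraped data")
    if entry.contains_pii and not entry.consent_documented:
        flags.append("PII without consent")
    if entry.third_party_ip:
        flags.append("third-party IP")
    return flags


# Hypothetical entry, for illustration only.
entry = BomEntry("crm_contacts", Source.SCRAPED, True, False, False)
print(commingling_flags(entry))
```

Running a check like this across every dataset before diligence begins gives you the list of issues to remediate or disclose, rather than letting the buyer find them.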

Calculating the “Data Premium”

How do you translate these qualitative factors into a quantitative valuation uplift? The “Income Approach” (specifically the Multi-Period Excess Earnings Method) is the gold standard for intangible assets, but for a pre-exit narrative, use the Revenue Quality Multiplier.

Start with the baseline multiple for your vertical (e.g., 6x for MarTech). Then, apply the following adjustments:

- +2 Turns for Structured Proprietary Data: If you have >1M unique, normalized records relevant to the industry.

- +4 Turns for “Model-Ready” Infrastructure: If you have a clean data lake (Snowflake/Databricks) with documented schemas (see: The AI/ML Expertise Premium).

- +6-10 Turns for “Predictive Revenue”: If you can prove your data creates a network effect where each new customer improves the product for everyone else.
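
The adjustments above can be sketched as simple arithmetic. This example assumes the uplifts are additive and can stack, which the framework implies but does not state outright; the `DataProfile` fields and function name are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DataProfile:
    structured_records: int            # unique, normalized, industry-relevant records
    model_ready_infrastructure: bool   # clean data lake with documented schemas
    network_effect_turns: float = 0.0  # 0 if unproven, otherwise between 6 and 10


def adjusted_multiple(baseline: float, profile: DataProfile) -> float:
    """Apply the additive turn adjustments to a baseline revenue multiple."""
    multiple = baseline
    if profile.structured_records > 1_000_000:
        multiple += 2  # structured proprietary data
    if profile.model_ready_infrastructure:
        multiple += 4  # model-ready infrastructure
    if profile.network_effect_turns:
        if not 6 <= profile.network_effect_turns <= 10:
            raise ValueError("network-effect uplift must be between 6 and 10 turns")
        multiple += profile.network_effect_turns
    return multiple


# Hypothetical MarTech company on a 6x baseline.
profile = DataProfile(structured_records=2_000_000,
                      model_ready_infrastructure=True,
                      network_effect_turns=8)
print(adjusted_multiple(6.0, profile))
```

With a 6x baseline, both structural adjustments, and an 8-turn network-effect uplift, the sketch lands at 20x. Treat the result as a narrative anchor for the management presentation, not as a formal valuation.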

The Trap: Many founders confuse “storage” with “value.” Storing terabytes of logs is a cost center. Curating terabytes of signals is a revenue generator. In your management presentation, move the “Data Asset” slide from the Appendix to the Executive Summary. Show the buyer that acquiring you is cheaper than spending 5 years trying to replicate your dataset.