The Silent Exfiltration of Your Intellectual Property

More than 40% of enterprises will suffer major security or compliance incidents linked to unauthorized shadow AI by 2030, according to Gartner's 2025 analysis of GenAI blind spots. That is a hidden liability that can kill your next M&A deal. Every single day, your engineering, marketing, and sales teams are enthusiastically feeding proprietary source code, internal financial forecasts, and sensitive customer data into unsanctioned Large Language Models (LLMs). We see this pattern consistently: middle-market executive teams think they are 'innovating' by giving their workforce unchecked access to generative AI assistants, but they are actually building massive, irreparable compliance debt.

In our last engagement with a scaling software firm, we discovered that engineers were using browser-based AI to debug proprietary algorithms, potentially handing core intellectual property to a provider that may train its models on it. I have rebuilt this entire security architecture three times in the last year alone after private equity buyers uncovered AI-contaminated IP during SaaS company due diligence. If you do not have a formal AI assistant governance framework in production today, your corporate data is bleeding out through browser extensions and open APIs right now.

It is no surprise that PwC's 2025 Global Digital Trust Insights Survey reveals 78% of organizations are increasing their investments in generative AI governance. The smart operators realize data privacy and model governance are the fundamental architecture of modern enterprise technology. The naive assumption that your current security stack will catch these leaks is bankrupting companies. Generative AI tools ingest unstructured context at massive scale. A single prompt can contain thousands of lines of sensitive internal dialogue. You cannot 'undo' a prompt once it hits the provider's servers.

The Catastrophic Cost of the Governance Void

Your employees are actively using unauthorized browser plugins, embedding unsanctioned API keys into Slack integrations, and running sophisticated data models in personal cloud environments. This shadow AI ecosystem bypasses your legacy Data Loss Prevention (DLP) tools, which were built to inspect email and file transfers, not prompts typed into a browser tab. We are not just talking about the risk of a simple data breach; we are looking at the systemic contamination of your business. When an employee pastes your unredacted customer list into an open model, the provider may retain that data and use it to train future models, and you have no practical way to pull it back. This is exactly why a simple acceptable use memo from HR is entirely inadequate.

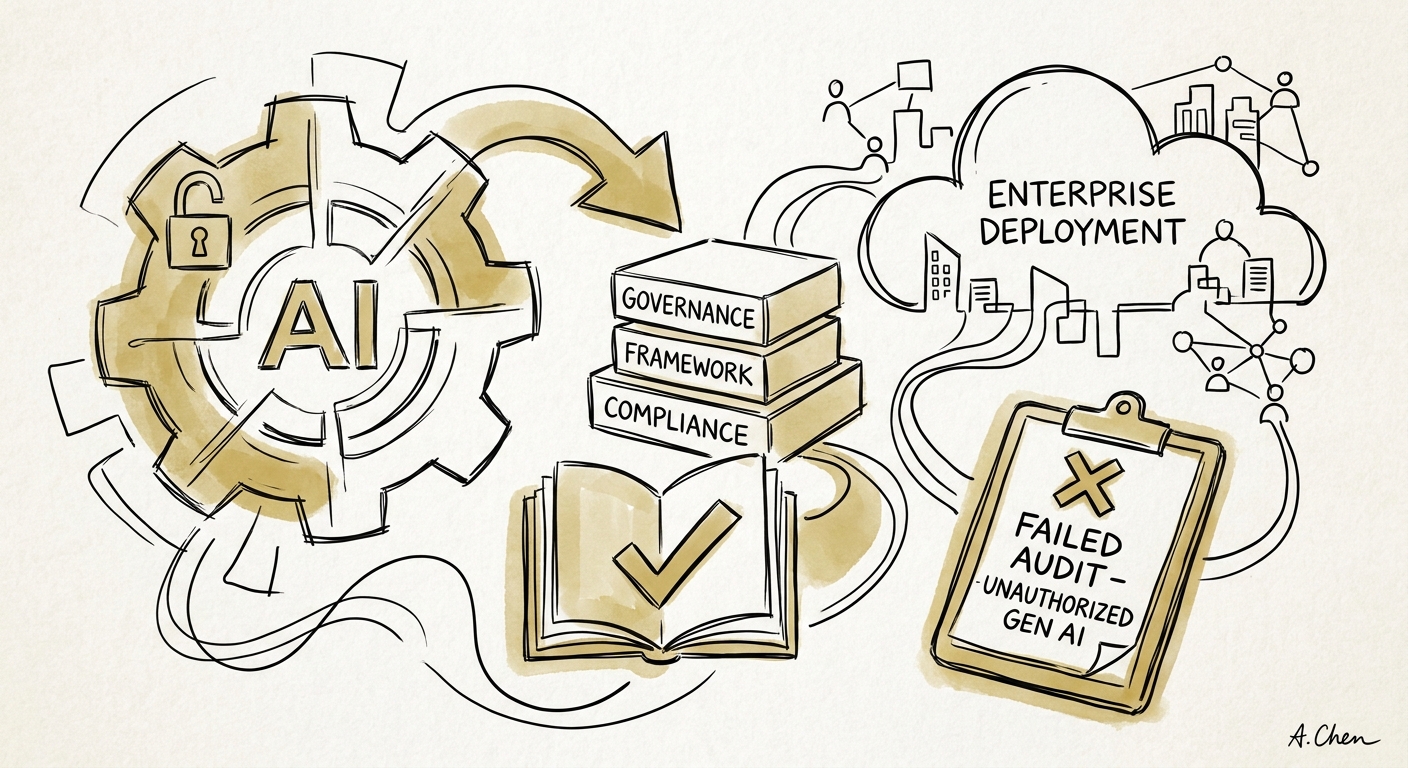

The financial cost of ignoring this paradigm shift is quantifiable and devastating. McKinsey's GenAI cybersecurity risk assessment indicates that while 53% of organizations recognize the severe cybersecurity threats posed by generative AI, only 38% are actively taking steps to mitigate those risks. This 15-point execution gap is where enterprise value goes to die. I have watched buyers walk away from nine-figure acquisitions because the target company could not prove the provenance of its codebase, or worse, because its custom machine learning models were hopelessly contaminated with GPL-licensed code generated by an unmanaged AI assistant.

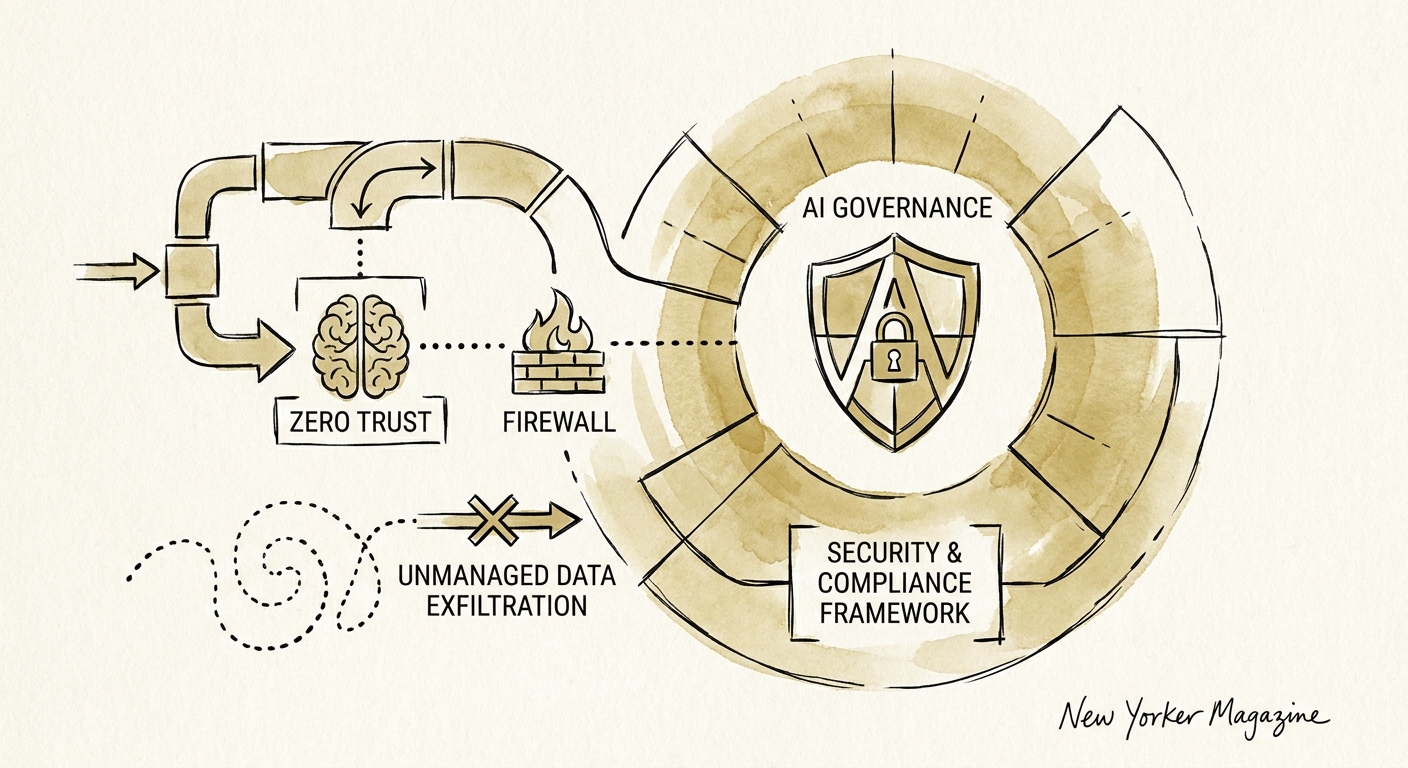

To stop this bleeding, you must implement a Zero Trust AI architecture. This means deploying enterprise-grade, sandboxed AI environments where data egress is strictly monitored. According to Forrester's 2025 enterprise AI adoption report, 67% of AI decision-makers plan to increase AI investments, but 29% identify lack of trust as the primary barrier. You need an AI gateway that sits between your employees and the LLM providers, acting as an intelligent proxy that redacts sensitive information in real time. Your governance model must enforce compliance rules dynamically, blocking prompts that contain proprietary keywords or financial figures above defined thresholds.
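To make the gateway idea concrete, here is a minimal sketch of the screening step such a proxy might run before forwarding a prompt. Everything in it is an illustrative assumption: the `screen_prompt` function, the regex patterns, the blocked keywords, and the $1,000,000 threshold are hypothetical placeholders, and a production gateway would rely on a vetted DLP classifier rather than a handful of regular expressions.

```python
import re
from dataclasses import dataclass, field

# Hypothetical redaction patterns (assumptions for illustration only).
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
}

# Hypothetical proprietary keywords that should never leave the company.
BLOCKED_KEYWORDS = {"project-atlas", "board forecast"}

# Dollar amounts at or above this (assumed) threshold block the prompt.
AMOUNT = re.compile(r"\$\s?(\d[\d,]*)(?:\.\d+)?")
AMOUNT_THRESHOLD = 1_000_000


@dataclass
class GatewayDecision:
    allowed: bool
    prompt: str  # redacted prompt if allowed, empty string if blocked
    reasons: list = field(default_factory=list)


def screen_prompt(prompt: str) -> GatewayDecision:
    """Block prompts that hit a policy rule; otherwise redact and allow."""
    reasons = []
    lowered = prompt.lower()

    # Rule 1: proprietary keywords.
    for keyword in sorted(BLOCKED_KEYWORDS):
        if keyword in lowered:
            reasons.append(f"blocked keyword: {keyword}")

    # Rule 2: financial figures above the threshold.
    for match in AMOUNT.finditer(prompt):
        value = int(match.group(1).replace(",", ""))
        if value >= AMOUNT_THRESHOLD:
            reasons.append(f"amount over threshold: {match.group(0)}")

    if reasons:
        return GatewayDecision(allowed=False, prompt="", reasons=reasons)

    # No blocking rule fired: redact sensitive tokens, then forward.
    redacted = prompt
    for label, pattern in REDACTION_PATTERNS.items():
        redacted = pattern.sub(f"[{label}_REDACTED]", redacted)
    return GatewayDecision(allowed=True, prompt=redacted)
```

In this sketch, blocking takes priority over redaction: a prompt that mentions a protected project name is rejected outright, while one that merely contains an email address is cleaned and passed through. Where to draw that line is a policy decision, not a technical one.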

Executing the Zero Trust AI Playbook

You cannot build trust without an ironclad governance policy that maps directly to your technology risk oversight strategy. Your board needs to see a live dashboard that tracks AI utilization, flags anomalous data transfers, and proves continuous compliance with frameworks like the EU AI Act. The execution of this governance framework strictly separates the scalable platforms from the unsellable liabilities.

We instituted a strict registry system in our portfolio companies, mandating that every AI tool pass a rigorous third-party risk assessment before provisioning. We implemented hard role-based access controls (RBAC) to ensure that only authorized personnel could access production-grade AI tools, and we deployed localized, private models for sensitive financial workloads. The results were immediate. Not only did we eliminate the data exfiltration vectors, but we also increased developer productivity by giving them a safe, sanctioned toolset.
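A registry with role-based access can be expressed in very little code. The sketch below is an assumption-laden illustration, not the system we deployed: the tool names, roles, and the `can_use` helper are all hypothetical, and a real deployment would back the registry with your identity provider and procurement records.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    ENGINEER = "engineer"
    FINANCE = "finance"
    CONTRACTOR = "contractor"


@dataclass(frozen=True)
class RegisteredTool:
    name: str
    risk_assessment_passed: bool  # third-party risk review outcome
    allowed_roles: frozenset      # roles provisioned for this tool
    private_deployment: bool      # True if the model runs in a private environment


# Hypothetical registry entries for illustration.
REGISTRY = {
    "code-assistant": RegisteredTool(
        "code-assistant", True, frozenset({Role.ENGINEER}), False
    ),
    "private-finance-llm": RegisteredTool(
        "private-finance-llm", True, frozenset({Role.FINANCE}), True
    ),
    "unvetted-plugin": RegisteredTool(
        "unvetted-plugin", False, frozenset({Role.ENGINEER}), False
    ),
}


def can_use(tool_name: str, role: Role, sensitive_workload: bool = False) -> bool:
    """Deny by default: unknown, unvetted, or unauthorized requests fail."""
    tool = REGISTRY.get(tool_name)
    if tool is None or not tool.risk_assessment_passed:
        return False
    if role not in tool.allowed_roles:
        return False
    # Sensitive workloads are only allowed on privately deployed models.
    if sensitive_workload and not tool.private_deployment:
        return False
    return True
```

The design choice that matters is the default: a tool that is not in the registry is denied, so shadow tools fail closed instead of slipping through.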

We must also confront the raw reality of data breach economics. IBM's 2024 Cost of a Data Breach Report states that the global average cost of a data breach has surged to $4.88 million. Introducing unmanaged AI agents drastically accelerates the speed at which a breach can occur. You are effectively handing automated keys to your data kingdom. To secure your enterprise, you must require continuous monitoring and automated auditing of all AI-generated outputs.
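One way to approach automated auditing is a tamper-evident log in which each record stores hashes of the prompt and output and links to the previous record. The following is a minimal sketch under that assumption; the `audit_record` and `verify_chain` names and the record fields are hypothetical, and it stores hashes only so the log itself does not become a second copy of sensitive data.

```python
import hashlib
import json
from datetime import datetime, timezone


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(user: str, tool: str, prompt: str, output: str,
                 prev_hash: str = "") -> dict:
    """Build one log entry chained to the previous entry's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
        "prompt_sha256": _sha256(prompt),
        "output_sha256": _sha256(output),
        "prev_hash": prev_hash,
    }
    # Hash the canonical JSON form so verification is deterministic.
    body["record_hash"] = _sha256(json.dumps(body, sort_keys=True))
    return body


def verify_chain(records: list) -> bool:
    """Return False if any record was altered or the chain was reordered."""
    prev = ""
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "record_hash"}
        expected = _sha256(json.dumps(body, sort_keys=True))
        if rec["prev_hash"] != prev or rec["record_hash"] != expected:
            return False
        prev = rec["record_hash"]
    return True
```

A chain like this does not prevent a breach, but it gives auditors and buyers a verifiable record of which tools touched which workloads, which is the evidence diligence teams ask for.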

When you formalize this structure, you transform AI from a massive liability into a defensible asset. Private equity buyers actively discount valuations for companies that cannot demonstrate control over their AI supply chain. Do not wait for a failed operational due diligence audit to force your hand. Secure your AI ecosystem right now, before the market punishes your negligence.