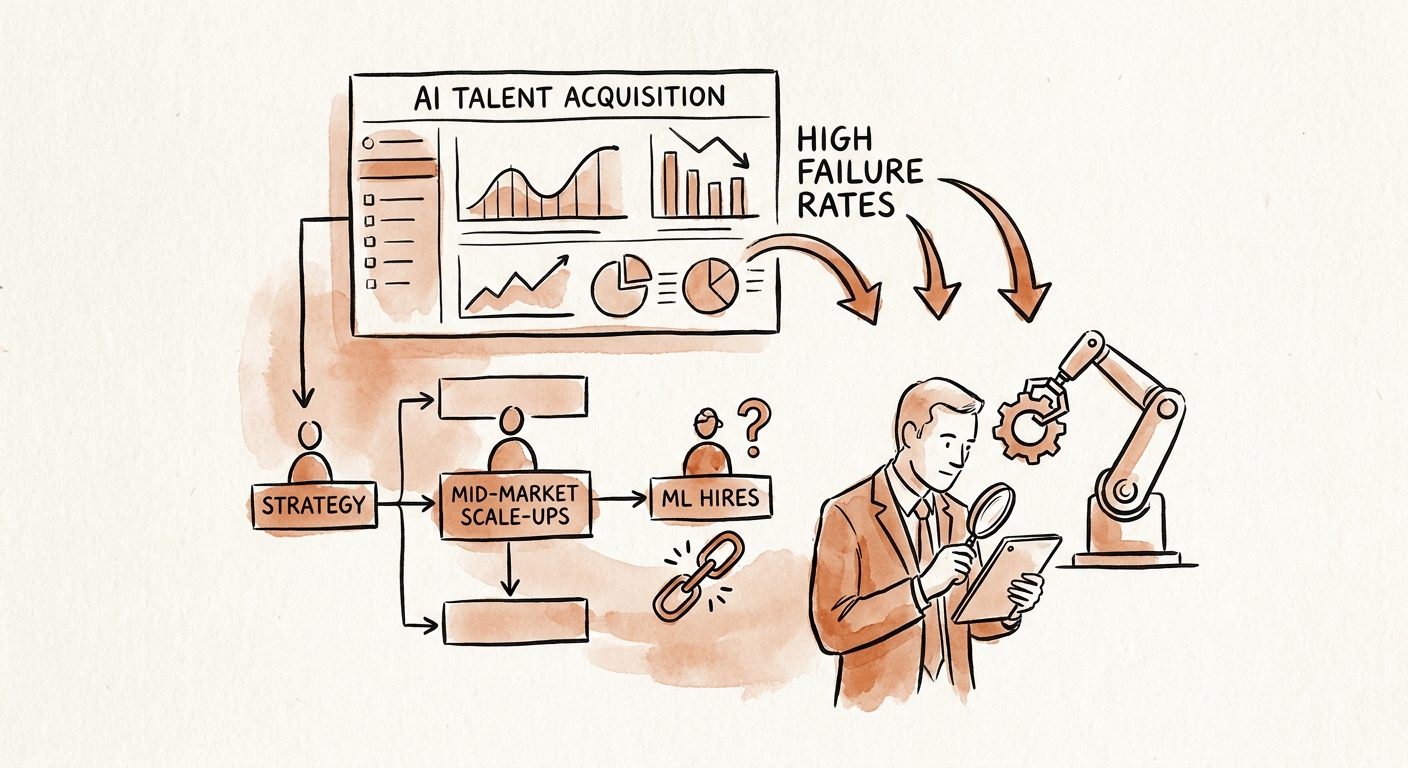

Founders are burning an average of $285,000 in sunk costs per failed Machine Learning engineer hire because they mistake theoretical algorithm knowledge for production-grade engineering competence. We are witnessing the most expensive misallocation of human capital in the history of enterprise software. CEOs and private equity operating partners are greenlighting massive compensation packages for candidates boasting advanced degrees and high Kaggle rankings, only to discover nine months later that these hires cannot deploy a single functional model into a live, scalable environment. I have watched product roadmaps stall completely because the technical leadership optimized their talent pipeline for mathematical theory rather than operational deployment capabilities.

In my last engagement, auditing a Series C generative AI scale-up, I had to dismantle their ML hiring pipeline and rebuild it from scratch. I discovered that their $2.4M engineering payroll was tied up in personnel who had never pushed a model outside of a local Jupyter notebook. This is what I call the notebook engineer trap, and it is destroying gross margins across the SaaS ecosystem. You are not hiring academics to publish papers; you are hiring engineers to ship commercial intelligence. The market data supports this. According to Stanford HAI's 2024 AI Index Report, 72% of mid-market enterprises report a fundamental gap between their ML team's theoretical knowledge and their actual deployment readiness. This translates directly to delayed time-to-market and bloated infrastructure costs.

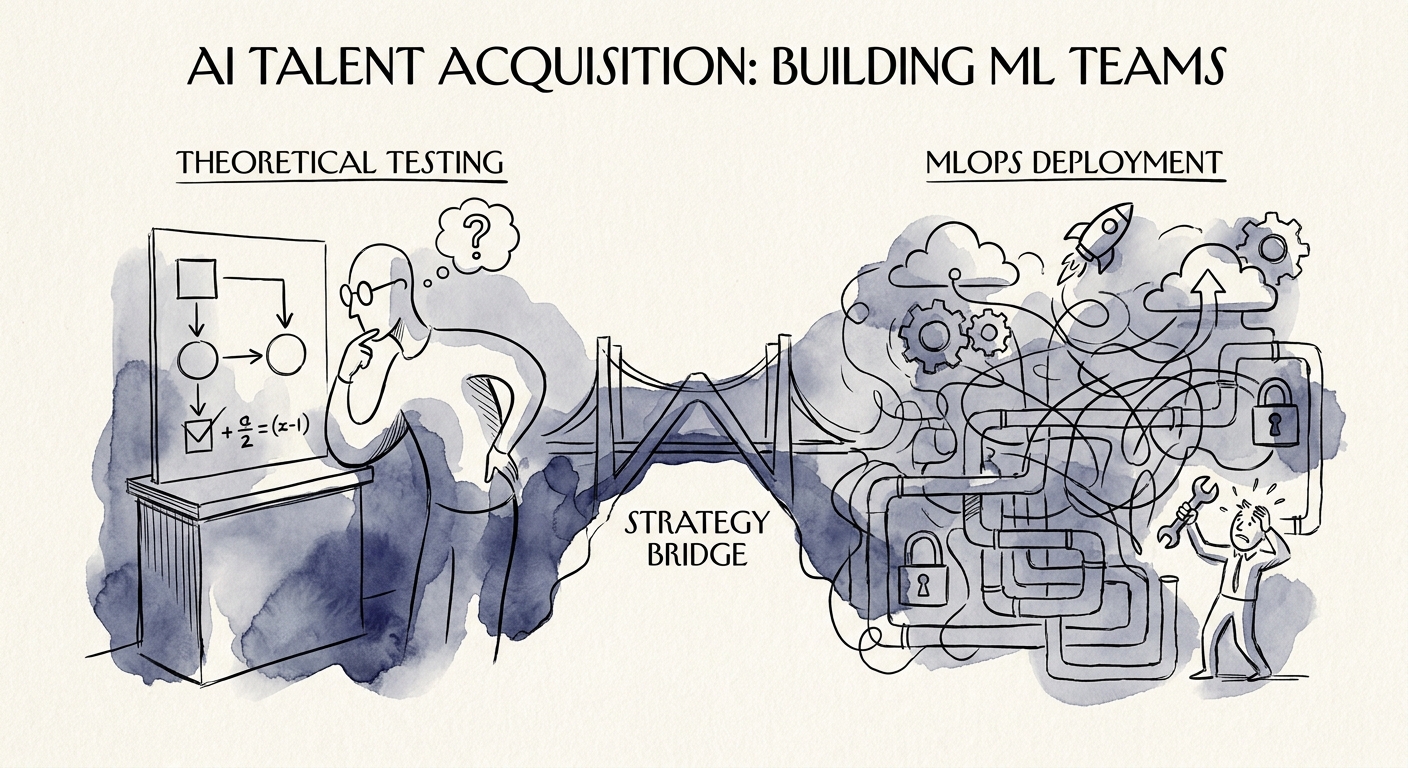

The root cause is a fundamental misunderstanding of what a modern AI practitioner actually does. Building the model is now the easiest part of the lifecycle; maintaining the data pipeline, optimizing inference compute, and establishing CI/CD for models are where the true value lies. You must stop screening for people who want to write novel neural networks from scratch. Instead, your hiring matrix must prioritize MLOps, containerization, and data engineering fundamentals. McKinsey's 2024 State of AI Report states that 65% of high-performing enterprise AI teams attribute their operational success to MLOps and data engineering infrastructure, not core algorithm development. If your current job descriptions do not reflect this reality, you are actively inviting the wrong talent into your pipeline. For a deeper breakdown of this exact failure pattern, review our guide on Databricks Partner Talent Strategy: The $250k 'Notebook Engineer' Trap.

The Agentic Pivot: Screening for Production Over Theory

The standard technical interview for AI talent is broken. Engineering leaders are still administering generic algorithm tests and whiteboarding neural network architectures to screen candidates. This process reliably identifies academics, not operators. You must deprecate theoretical screening in favor of deployment-centric evaluations. I mandate that every candidate interviewing for an ML engineering role at our portfolio companies containerize a pre-trained model and expose it via a secure API within a strict time limit. If a candidate holds a PhD in machine learning but cannot write a functional Dockerfile or articulate how to handle model drift in production, we terminate the interview process.
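To make the model drift question concrete, here is a minimal sketch of one answer a candidate might give: a Population Stability Index (PSI) check comparing a live feature sample against the training-time reference. The function name `psi`, the ten-bin default, and the 0.2 alert threshold are assumptions for illustration (0.2 is a common rule of thumb, not a standard), and PSI is only one of several drift signals a production team might use.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a reference sample and a live sample.

    Hypothetical helper for illustration; bins are equal-width over the
    reference range, which is one of several possible binning choices.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range live values into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


reference = [i / 100 for i in range(100)]      # training-time feature values
live = [0.5 + i / 200 for i in range(100)]     # shifted production values
DRIFT_THRESHOLD = 0.2                          # assumed rule-of-thumb cutoff
drifted = psi(reference, live) > DRIFT_THRESHOLD
```

A strong candidate will go beyond the metric itself and explain what happens after the alert fires: retraining triggers, rollback paths, and who gets paged.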

We are operating in a market where velocity dictates valuation, and the time it takes to hire an AI specialist is dragging your operational cadence to a halt. Gartner's 2025 Tech Talent Compensation Benchmark puts the average time-to-fill for senior ML roles at 105 days. You cannot afford to spend a fiscal quarter hunting for a unicorn data scientist who checks 40 different theoretical boxes. You must hire for engineering rigor first. In fact, 82% of hiring managers who successfully scale their AI practices prioritize cloud-native deployment skills over novel architecture design, according to Bain & Company's 2025 Technology Report. Stop testing candidates on whether they can derive the math behind backpropagation. Test them on how they handle corrupted JSON payloads hitting their inference endpoints at 10,000 requests per minute.
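As a rough illustration of what "handling corrupted JSON payloads" looks like in an interview exercise, the sketch below validates a request body before it ever reaches the model. The `features` field name, the fixed feature count, and the `parse_payload` function are hypothetical; a real endpoint would use whatever schema the service defines, and would pair this with rate limiting and logging.

```python
import json
import math

EXPECTED_FEATURES = 3  # hypothetical feature count for illustration


def parse_payload(raw: bytes):
    """Return (features, None) on success or (None, error_message) on failure."""
    try:
        data = json.loads(raw)
    except (json.JSONDecodeError, UnicodeDecodeError) as exc:
        # Truncated bodies and bad encodings are rejected, never raised upstream.
        return None, f"invalid JSON: {exc.__class__.__name__}"
    if not isinstance(data, dict) or "features" not in data:
        return None, "missing 'features' field"
    features = data["features"]
    if not isinstance(features, list) or len(features) != EXPECTED_FEATURES:
        return None, f"expected a list of {EXPECTED_FEATURES} features"
    cleaned = []
    for value in features:
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return None, "features must be numeric"
        try:
            number = float(value)
        except OverflowError:
            # Integers too large for a float are treated as non-finite.
            return None, "features must be finite"
        if not math.isfinite(number):
            # Python's json module accepts NaN and Infinity by default.
            return None, "features must be finite"
        cleaned.append(number)
    return cleaned, None
```

The point of the exercise is the failure path: every malformed request should produce a cheap, explicit rejection rather than an exception inside the model server.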

To fix this pipeline, you must restructure your assessment framework. A highly predictive technical interview mirrors the exact day-to-day operations of the role. Give the candidate a dirty dataset, a tight latency requirement, and a broken deployment pipeline. Evaluate their pragmatism. Do they reach for the newest, most complex LLM framework, or do they deploy a simpler, highly performant gradient boosting machine that solves the business problem at a fraction of the compute cost? This operational pragmatism separates the true engineers from the researchers. For a step-by-step methodology on building these assessments, see our diagnostic on The Technical Interview That Predicts 90-Day Performance. You must filter aggressively for the ability to ship.
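A tight latency requirement is only useful in an assessment if it is measured the same way for every candidate. The sketch below is one possible harness, assuming the candidate's solution is exposed as a plain Python callable; `p95_latency_ms`, `meets_budget`, and the budget value are hypothetical names and numbers, and a real evaluation would measure the deployed endpoint under concurrent load rather than a single in-process loop.

```python
import statistics
import time


def p95_latency_ms(predict, payloads):
    """Time each call to predict and return the 95th percentile in milliseconds."""
    samples = []
    for payload in payloads:
        start = time.perf_counter()
        predict(payload)
        samples.append((time.perf_counter() - start) * 1000.0)
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(samples, n=20)[18]


def meets_budget(predict, payloads, budget_ms):
    """True when the measured p95 latency is within the stated budget."""
    return p95_latency_ms(predict, payloads) <= budget_ms
```

Running the same harness against a large model and a simple gradient boosting baseline gives the interviewer a direct, comparable view of the pragmatism trade-off described above.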

Compensation Architecture and Retention Defense

You cannot win a base-salary bidding war against Microsoft, Google, or Meta. Mid-market scale-ups that attempt to match FAANG compensation bands routinely obliterate their gross margins and ruin their SaaS quick ratios. Base salaries in this sector are escalating at an unsustainable velocity. According to the Bureau of Labor Statistics' 2025 Data Scientist Occupational Outlook, base wage inflation for specialized AI developers has surged 35% in just three years. If you attempt to buy this talent strictly with cash, you will bleed your runway dry before your first AI feature reaches general availability. Instead, you must architect compensation packages that leverage aggressive equity upside tied directly to actual product deployment milestones, effectively aligning the engineer's wealth creation with the company's enterprise value expansion.

We continually enforce a strict 60-day probation metric for new ML hires. If they haven't shipped a minor model update, deployed a data pipeline, or reduced inference latency in a staging environment by day 60, we trigger an immediate performance review. You cannot allow high-priced AI talent to sit in a research silo. We counter the retention risk not by padding the base salary, but by offering technical autonomy, rapid deployment cycles, and equity refreshers tied to gross margin improvements generated by their models. When you hire an engineer and trap them in six months of compliance reviews and data governance committees, they will quit. The primary retention lever for top-tier ML talent is the velocity at which they can put code into production and see real-world impact.

Do not underestimate the financial devastation of getting this wrong. The collateral damage of a bad AI hire extends far beyond their salary. It encompasses recruiting fees, wasted compute clusters, disrupted agile sprints, and the massive opportunity cost of delayed roadmaps. We break down these exact downstream impacts in The $240,000 Mistake: Calculating the True Cost of a Bad Tech Hire. Your talent acquisition strategy for AI must evolve from passive sourcing to rigorous, production-first filtering. You are building an engineering organization, not a university laboratory. Treat every ML hire as an investment in operational leverage, measure their output exclusively by shipped products, and fire the ones who refuse to leave the notebook.