The 'Paper Tiger' Problem in Databricks Partner Ecosystems

For Scaling Sarah, the CEO of a growing data consultancy, the pressure to climb the Databricks partner tiers is immense. To move from 'Select' to 'Elite,' you need badges. You need a specific number of Databricks Certified Machine Learning Associates and Professionals to unlock the co-sell engine. But this compliance-driven hiring strategy is creating a dangerous operational liability: the 'Notebook Engineer.'

A 'Notebook Engineer' is a candidate who has mastered the art of passing the certification exam and can write functional Python code in a Jupyter notebook or Databricks Workspace. In a sandbox environment, they look like a $250,000 asset. In a production environment, they are a liability. They often lack the software engineering rigor—CI/CD, unit testing, modularization, and infrastructure-as-code—required to deploy robust ML systems.

According to 2025 data, while the average compensation for a Databricks-focused ML Engineer has stabilized around $233,000 for mid-level roles, the failure rate for these hires remains alarmingly high. Industry reports indicate that nearly 80% of ML hires fail to meet expectations within their first year. Why? Because you hired them to pass a partner audit, not to ship production code. The result is a 'Paper Tiger' team: impressive on your partner portal profile, but incapable of delivering the margin-rich managed services that drive profitable utilization.

The Economics of an ML Practice: Margin Killers

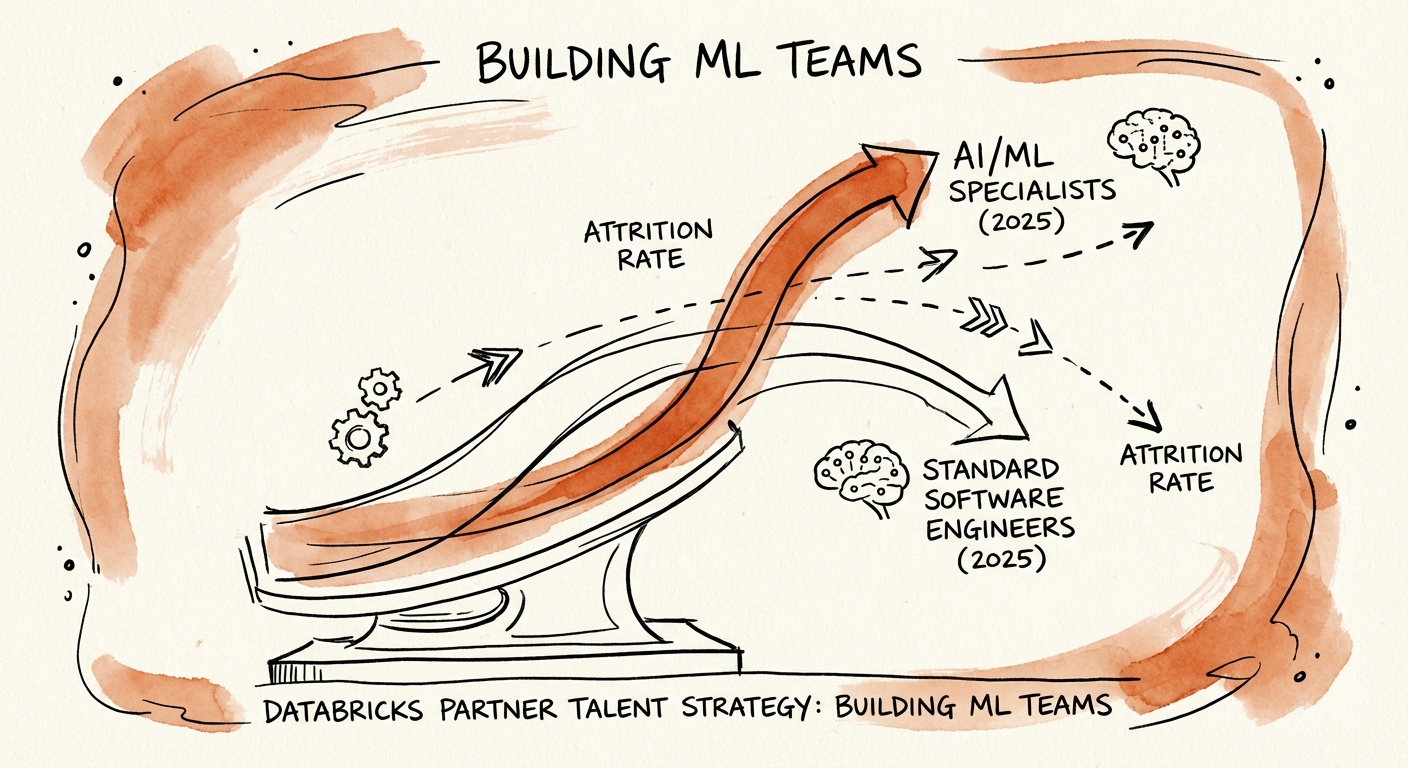

Building an ML practice is not like building a CRM implementation practice. In the Salesforce or HubSpot world, you can leverage a pyramid structure: one expensive Architect overseeing five cheaper Associates. In the Databricks ecosystem, specifically for ML and GenAI projects involving Mosaic AI (formerly MosaicML) and Unity Catalog, the technical floor is significantly higher.

If you attempt to staff a project with 'Associate' level talent to protect your margins, you risk project failure. Conversely, if you stack the team with 'Professional' level talent commanding $250k+, your blended rate becomes uncompetitive against Global Systems Integrators (GSIs). This is exacerbated by attrition. The 2025 attrition rate for AI/ML talent sits at 28%, significantly higher than the 17% average for standard software engineers. Every time an ML engineer walks out the door, it costs you roughly 2x their salary in replacement costs, lost billings, and recruiting fees.
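To see what that attrition math does to a P&L, here is a rough back-of-the-envelope sketch. The 28% and 17% attrition rates, the 2x replacement multiple, and the $233,000 salary come from the figures above; the ten-person team size is purely an illustrative assumption, and applying the same salary and 2x multiple to the 17% baseline is a simplification made only for comparison.

```python
def annual_attrition_cost(headcount: int, avg_salary: float,
                          attrition_rate: float,
                          replacement_multiple: float = 2.0) -> float:
    """Expected yearly cost of turnover: departures x salary x multiple."""
    expected_departures = headcount * attrition_rate
    return expected_departures * avg_salary * replacement_multiple


TEAM_SIZE = 10          # assumption: illustrative practice size
AVG_SALARY = 233_000    # mid-level figure cited in the article

ml_cost = annual_attrition_cost(TEAM_SIZE, AVG_SALARY, 0.28)
# Comparison case: same team and salary at the 17% software-engineer rate.
baseline_cost = annual_attrition_cost(TEAM_SIZE, AVG_SALARY, 0.17)

print(f"ML attrition cost:       ${ml_cost:,.0f}")        # $1,304,800
print(f"Baseline attrition cost: ${baseline_cost:,.0f}")  # $792,200
print(f"Extra cost of ML churn:  ${ml_cost - baseline_cost:,.0f}")  # $512,600
```

Under these assumptions, a ten-person ML team loses about $1.3 million a year to turnover, roughly half a million more than the same team would at the standard software-engineer attrition rate. Swap in your own headcount and salary bands to see where your margin actually goes.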

The Certification Premium vs. Value

Our analysis of partner certification economics shows a similar trend to the Workday ecosystem: the most 'certified' consultants often have the lowest utilization because they are too expensive for run-rate work but lack the architectural maturity for high-stakes advisory. To fix this, you must decouple your 'Partner Compliance' strategy from your 'Delivery' strategy. Hire a core team of true engineers to deliver, and use a separate, lower-cost tier of junior staff to grind out the certifications needed for partner status—but do not put those juniors on critical path delivery without heavy oversight.

The 'Production-First' Hiring Protocol

To stop bleeding cash on bad hires, you must overhaul your technical assessment. Standard coding challenges (like LeetCode) are useless for assessing Databricks talent. Instead, your interview process must simulate the specific pain points of modern Data & AI consulting.

- Test for Modularization: Give the candidate a messy, 500-line monolithic notebook and ask them to refactor it into deployable Python modules. If they can't do this, they aren't an engineer; they are an analyst.

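- Test for MLOps: Ask specifically about their experience with MLflow beyond just 'logging parameters.' Can they design a model registry workflow that handles promotion from Staging to Production, and do they know that models registered in Unity Catalog use aliases rather than the legacy stage labels?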

- Test for Unity Catalog: In 2026, governance is the product. A candidate who doesn't understand the security implications of the Lakehouse architecture is a risk to your enterprise clients.
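For the modularization test, it helps to know what a passing answer looks like. The sketch below is one possible shape, not a reference solution: the names `PipelineConfig`, `clean_rows`, `add_features`, and `run_pipeline` are hypothetical, and plain dictionaries stand in for whatever DataFrame logic the real notebook contains. The point is the structure: configuration separated from logic, small pure functions, and a test that runs without a cluster.

```python
"""Hypothetical module extracted from a monolithic notebook."""
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class PipelineConfig:
    """Settings that a notebook would typically hard-code in a cell."""
    min_amount: float = 0.0


def clean_rows(rows: list[dict], config: PipelineConfig) -> list[dict]:
    """Drop rows whose amount is missing or below the configured floor."""
    return [
        row for row in rows
        if row.get("amount") is not None and row["amount"] >= config.min_amount
    ]


def add_features(rows: list[dict]) -> list[dict]:
    """Add each row's deviation from the mean amount as a new field."""
    if not rows:
        return []
    average = mean(row["amount"] for row in rows)
    return [{**row, "amount_vs_mean": row["amount"] - average} for row in rows]


def run_pipeline(rows: list[dict],
                 config: PipelineConfig = PipelineConfig()) -> list[dict]:
    """Compose the steps; this is the only entry point a job needs to call."""
    return add_features(clean_rows(rows, config))


def test_run_pipeline_filters_and_adds_features():
    """Pytest-style unit test that needs no Spark session or workspace."""
    raw = [{"amount": 10.0}, {"amount": None}, {"amount": 30.0}, {"amount": -5.0}]
    result = run_pipeline(raw)
    assert [row["amount"] for row in result] == [10.0, 30.0]
    assert [row["amount_vs_mean"] for row in result] == [-10.0, 10.0]
```

A candidate who produces something in this spirit, with importable functions and at least one test, is showing you engineering habits. A candidate who splits the 500 lines into five smaller notebooks is not.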

Finally, stop hiring for the 'Generic ML' profile. The market has bifurcated. You either need Infrastructure Engineers who can build the platform (Terraform, Kubernetes, Databricks Asset Bundles) or GenAI Application Engineers who understand vector databases and RAG architectures. The 'middle ground' data scientist who just builds models is becoming obsolete. By implementing a performance-predictive interview process, you can filter out the 'Paper Tigers' and build a team that protects your reputation and your margins.