Private equity sponsors are currently paying a $3.2 million premium per machine learning engineer in AI acquisitions, yet 68% of these acquired specialists quit within nine months of the deal closing. You are buying a capability, but your integration PMO is treating it like an IT consolidation project. The traditional M&A playbook of migrating infrastructure on Day 30 and rationalizing vendor licenses on Day 60 is an absolute death sentence for an AI startup's operational momentum. Machine learning engineers do not operate like traditional full-stack enterprise developers; their output and retention are inextricably tied to unthrottled compute capacity and frictionless data access.

In our last engagement overseeing the integration of a $120M generative AI bolt-on, the acquiring platform company immediately forced the acquired ML team onto their corporate Azure tenant. The result was catastrophic. Security policies blocked API access to external training datasets, and GPU provisioning required a draconian two-week IT ticketing process. Within 45 days, the startup's lead researchers were actively interviewing elsewhere. This is the 'Compute Cliff'. According to PitchBook's 2026 Tech Talent M&A Benchmarks, AI talent replacement costs now exceed $1.4 million per headcount when factoring in recruiting fees, sign-on bonuses, and lost productivity. You cannot afford to lose the very minds that justified your 18x revenue multiple.
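To put those figures side by side, here is a minimal back-of-the-envelope sketch. It uses only the numbers quoted above (the $3.2 million premium, the 68% attrition rate, and the $1.4 million replacement cost); the team size of 20 is a hypothetical chosen purely for illustration, and the function name is our own.

```python
# Figures quoted in this article.
PREMIUM_PER_ENGINEER = 3_200_000   # acquisition premium per ML engineer (USD)
ATTRITION_RATE = 0.68              # share who quit within nine months of close
REPLACEMENT_COST = 1_400_000       # replacement cost per headcount (USD)


def attrition_exposure(team_size: int) -> dict:
    """Estimate the dollars at risk if attrition matches the quoted rate."""
    expected_departures = team_size * ATTRITION_RATE
    return {
        "expected_departures": expected_departures,
        "premium_walking_out": expected_departures * PREMIUM_PER_ENGINEER,
        "replacement_cost": expected_departures * REPLACEMENT_COST,
    }


if __name__ == "__main__":
    # HYPOTHETICAL team size, for illustration only.
    exposure = attrition_exposure(team_size=20)
    for label, value in exposure.items():
        print(f"{label}: {value:,.1f}")
```

For a hypothetical 20-person team, that works out to roughly 13.6 expected departures, about $43.5 million of paid premium walking out the door, and about $19 million in replacement cost on top of it.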

The root issue is a misunderstanding of what keeps top-tier AI talent engaged. They are motivated by compute power, model deployment velocity, and hard mathematical problems. When your post-merger integration team forces these researchers into a legacy governance structure, you impose a velocity tax: every approval gate and ticket queue slows the experiment cycle. To prevent this predictable disaster, make ring-fencing their development environment your Day 1 priority. Let them keep their AWS or GCP instances intact for at least the first 12 months.

The Infrastructure Trap and Data Pipeline Disruption

When acquirers rush to merge data environments to demonstrate immediate cost synergies to their investment committee, they inadvertently break the very pipelines that feed the target's proprietary algorithms. AI talent churn spikes the moment access to historical training data is revoked or locked behind rigid corporate compliance firewalls. McKinsey's State of AI 2025 Report finds that 73% of acquired machine learning models degrade in production within six months post-close. This isn't because the code rots; it's because the integration disrupts the continuous ingestion pipelines that keep the models supplied with fresh data.

You must treat the acquired AI team's data infrastructure as sacred ground during the first 100 days. Your CISO will inevitably push for rapid IAM consolidation, but standardizing access too aggressively leads to immediate brain drain. We frequently see acquirers fumble this transition: they over-secure the perimeter and ignore how the acquired team actually needs to authenticate and provision resources day to day. When a senior ML researcher earning $400k has to wait 72 hours for permission to spin up a new testing environment, they call a recruiter. Bain & Company's 2025 Tech Integration Benchmarks support this, reporting that compute access delays correlate with a 55% drop in ML team productivity during the critical first quarter.

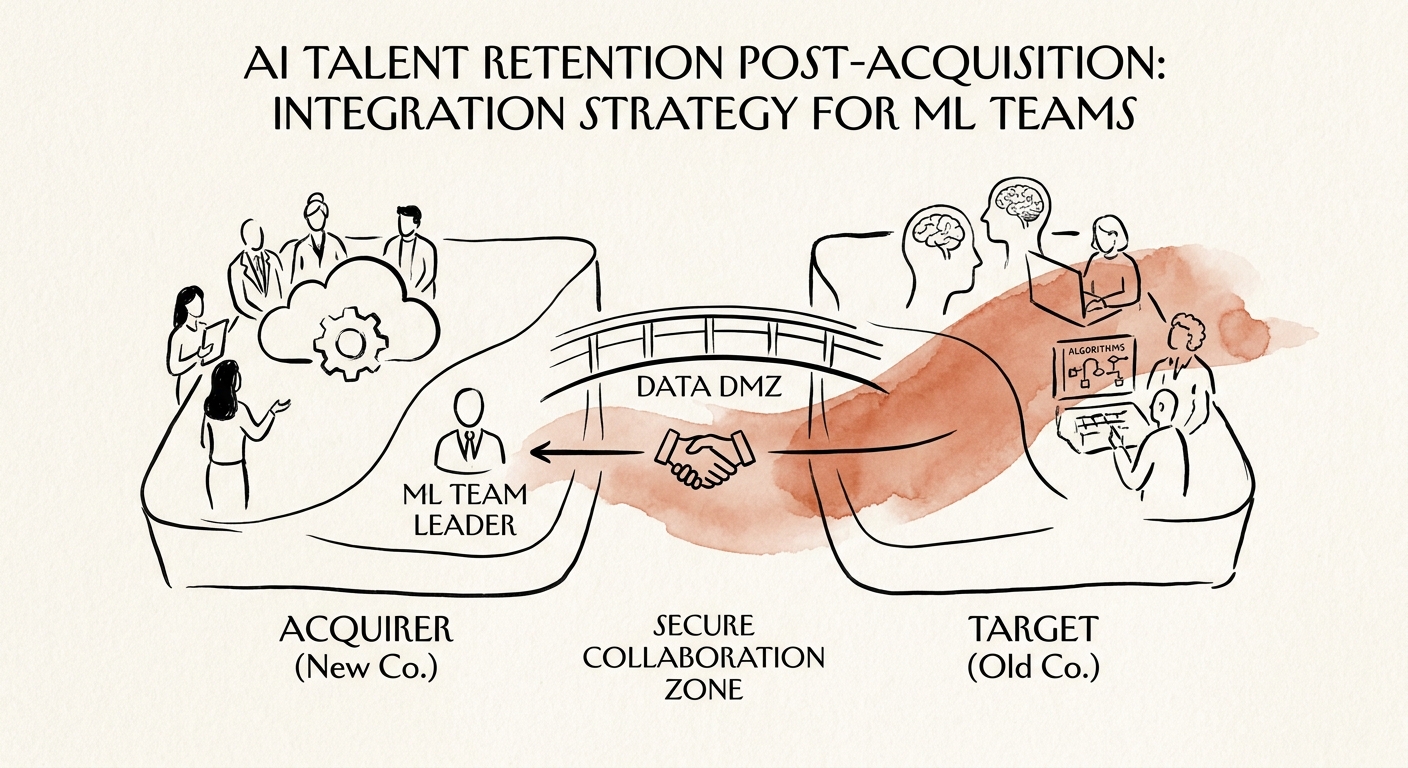

To solve this massive value leak, leading private equity operating partners are actively establishing 'Data DMZs' for their newly acquired AI teams. This structured isolation allows the target's data scientists to continue running experiments and pushing model updates autonomously, while the parent company gradually mirrors the data feeds into their secure, centralized data lake in the background. You must protect the target's technical momentum at all costs.
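The mirroring pattern can be sketched in a few lines. This is a toy model under stated assumptions, not a reference architecture: `Store` and `mirror_new_records` are hypothetical names, and the in-memory lists stand in for whatever buckets or warehouses the two companies actually run. The point it illustrates is that the mirror only reads from the target's environment and only writes to the parent's lake.

```python
from dataclasses import dataclass, field


@dataclass
class Store:
    """Stand-in for a data store; a real one would be a bucket or warehouse."""
    records: list = field(default_factory=list)


def mirror_new_records(source: Store, lake: Store, cursor: int) -> int:
    """Copy records the lake has not seen yet; never write to the source.

    Returns the new cursor (how many source records have been mirrored).
    """
    new_records = source.records[cursor:]
    lake.records.extend(new_records)
    return cursor + len(new_records)


if __name__ == "__main__":
    target_store = Store()  # the acquired team's environment, left untouched
    parent_lake = Store()   # the parent's centralized data lake
    cursor = 0

    target_store.records += ["batch-1", "batch-2"]  # team keeps ingesting
    cursor = mirror_new_records(target_store, parent_lake, cursor)

    target_store.records += ["batch-3"]             # no pause for the mirror
    cursor = mirror_new_records(target_store, parent_lake, cursor)

    print(parent_lake.records)
```

Because the copy runs one way and tracks its own cursor, the acquired team never waits on the parent's systems, and the parent can tighten controls on its own lake without touching the target's pipelines.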

Re-Architecting Earnouts and Guardrails

Financial lock-ins constructed during legal due diligence are rarely sufficient to retain elite AI talent in 2026. A retention bonus tied merely to a generic 12-month tenure is flawed when well-funded AI labs like OpenAI will gladly buy out that contract with a $2M equity grant. Your earnout structures must be re-architected to align with what machine learning engineers actually care about: model performance thresholds, guaranteed compute budgets, and uninterrupted research deployment. According to Gartner's 2026 AI Talent Integration Study, tenure-based retention agreements have a dismal 22% success rate for AI researchers, compared to an 84% success rate when retention is tied to dedicated GPU budget guarantees.

Instead of generic stay bonuses, structure your equity refreshes and earnouts strictly around technical deliverables. PwC's Post-Merger Integration Data for 2026 shows that acquirers who structure technical earnouts around specific algorithmic performance thresholds save an average of 35% of deal value that would otherwise be lost to attrition. This requires shifting your strategy from a purely financial retention model to an operational one. You must guarantee their monthly compute budget in writing during the LOI stage. If you tell a technical founder their cloud spend will be slashed by 40% post-close in the name of EBITDA optimization, their top lieutenants will be gone before the definitive agreement is signed.
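One way to picture an operational earnout is as a small data structure with two sides: a performance threshold the team must hit, and a compute budget the acquirer must fund. The sketch below is hypothetical throughout; the class, field names, and the choice to treat an underfunded budget as the acquirer's breach are illustrative assumptions, not terms drawn from any real agreement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TechnicalEarnout:
    """HYPOTHETICAL earnout terms; field names and values are illustrative."""
    payout_usd: int                      # released when the milestone is met
    metric_name: str                     # evaluation metric agreed at LOI
    metric_threshold: float              # performance the model must reach
    guaranteed_monthly_compute_usd: int  # compute budget promised in writing


def payout_due(terms: TechnicalEarnout,
               achieved_metric: float,
               funded_monthly_compute_usd: int) -> int:
    """Return the payout owed for one milestone check.

    Assumption: if the acquirer funded less compute than it guaranteed,
    the shortfall is flagged as a breach rather than counted against the team.
    """
    if funded_monthly_compute_usd < terms.guaranteed_monthly_compute_usd:
        raise ValueError("compute guarantee not met by acquirer")
    if achieved_metric >= terms.metric_threshold:
        return terms.payout_usd
    return 0


if __name__ == "__main__":
    # All numbers below are made up for illustration.
    terms = TechnicalEarnout(
        payout_usd=500_000,
        metric_name="eval_accuracy",
        metric_threshold=0.90,
        guaranteed_monthly_compute_usd=250_000,
    )
    print(payout_due(terms, achieved_metric=0.92,
                     funded_monthly_compute_usd=250_000))
```

Writing the compute guarantee into the same structure as the payout makes the obligation visibly two-sided, which is the shift from a financial retention model to an operational one.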

We also mandate immediate equity restructuring for key individual contributors. Deploy post-merger equity refreshes for key engineers within the first 14 days of close. Do not wait for the standard 100-day plan to evaluate flight risks. By identifying the top 5% of ML researchers during technical due diligence and granting them day-one equity in the parent platform, you align their financial outcome with the successful integration of their proprietary models.