Acquirers are currently discounting "proprietary" AI intellectual property by up to 60% in due diligence, because what founders pitch as a differentiated model is almost always just an API wrapper around a commercial LLM, fed by an unstructured, poorly governed database.

We are officially past the peak of the artificial intelligence hype cycle in private equity M&A. Buyers are no longer paying 15x to 20x revenue multiples sight unseen for companies simply because they have ".ai" in their domain or mention machine learning in the CIM. According to Gartner's 2026 AI Asset Valuation Guide, 75% of generative AI application companies are seeing their valuations compress back to standard SaaS metrics (6x to 8x EBITDA) during the quality of earnings and technical diligence phases.

In our last sell-side engagement for a Series C artificial intelligence platform, I had to completely restructure the data room after the initial buyer meetings. We ended up stripping out a combined $85 million in projected enterprise value because the target's "proprietary training data" was heavily reliant on improperly licensed, web-scraped content. The buyer's counsel flagged the copyright exposure, and the model's intangible value went to zero overnight.

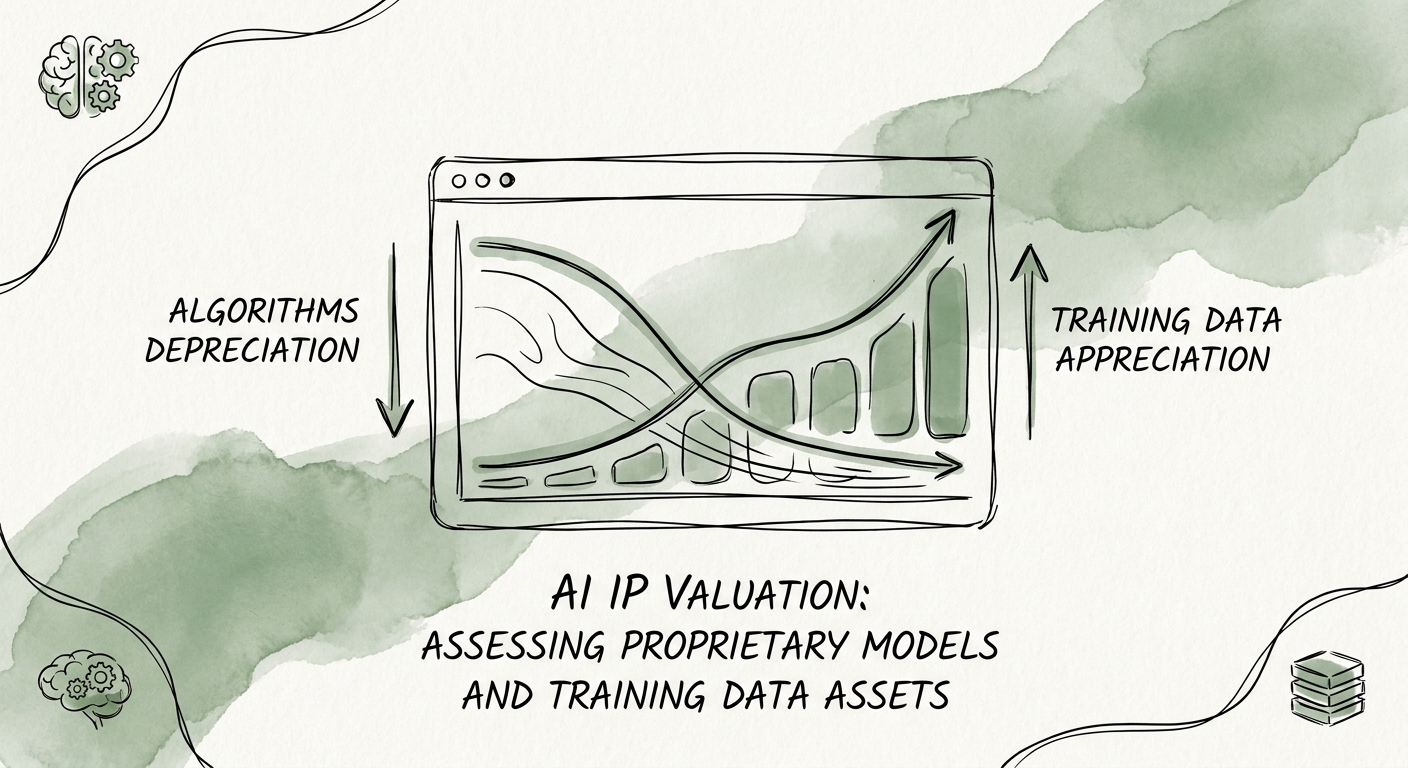

To command a true premium in 2026, founders and operating partners must understand that algorithms are depreciating assets. The models themselves are being commoditized by massive open-source competition. The defensible moat, the asset that actually drives premium exit multiples, lies exclusively in proprietary, legally clean, domain-specific training data and in the deep workflow integration that captures continuous human-in-the-loop feedback.

If you are preparing for a transaction, you must clearly separate the commoditized and proprietary layers of your technology stack. For more on structuring this narrative, review our playbook on how to value proprietary data assets in tech acquisitions.

Training Data Provenance: The New Quality of Earnings

In traditional SaaS M&A, financial sponsors obsess over the Quality of Earnings report. In AI M&A, the technical diligence team obsesses over the Quality of Data. If you cannot prove the provenance, lineage, and licensing rights of every terabyte of data used to fine-tune your proprietary models, the buyer will assign that intellectual property a value of exactly zero.
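To make "prove the provenance" concrete, a data room can pair every training dataset with a manifest entry recording its source, its license or contract, and a content hash taken at acquisition. The sketch below assumes such a manifest exists; `DatasetRecord`, `provenance_gaps`, and the field names are hypothetical, and SHA-256 via Python's `hashlib` is just one reasonable choice of fingerprint.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetRecord:
    """One entry in a hypothetical training-data provenance manifest."""
    name: str
    source: str       # where the data came from (vendor, customer contract, crawl, ...)
    license_id: str   # license identifier or contract reference; "" if unknown
    sha256: str       # content hash recorded when the data was acquired


def sha256_of(content: bytes) -> str:
    """Fingerprint raw dataset bytes so a later copy can be matched to the manifest."""
    return hashlib.sha256(content).hexdigest()


def provenance_gaps(record: DatasetRecord, content: bytes) -> list[str]:
    """Return human-readable problems; an empty list means the record checks out."""
    problems = []
    if not record.source:
        problems.append(f"{record.name}: no documented source")
    if not record.license_id:
        problems.append(f"{record.name}: no license or contract on file")
    if sha256_of(content) != record.sha256:
        problems.append(f"{record.name}: content does not match recorded hash")
    return problems


if __name__ == "__main__":
    data = b"example support-ticket corpus"
    good = DatasetRecord("tickets-2024", "customer contracts", "MSA-0042", sha256_of(data))
    bad = DatasetRecord("web-crawl", "", "", sha256_of(b"something else"))
    print(provenance_gaps(good, data))  # []
    print(provenance_gaps(bad, data))   # three problems: source, license, hash
```

A mismatched hash is the signal a technical auditor cares about most: it means the data you trained on may not be the data you documented.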

This is not a theoretical legal risk meant to scare founders. PwC's 2026 Tech Due Diligence Benchmark reveals that a staggering 40% of AI-focused M&A deals face significant valuation haircuts specifically due to data provenance and model drift issues uncovered during technical diligence. Institutional buyers are terrified of acquiring a platform only to face a catastrophic copyright infringement lawsuit or discovering that the foundational model is irrevocably poisoned by toxic training sets.

Conversely, completely clean data commands a massive premium. According to McKinsey's 2025 M&A Data Asset Analysis, software companies possessing legally ring-fenced, proprietary datasets drive an average 2.5x multiple expansion compared to peers relying on synthetic or open-source data. This premium exists because unique data creates a self-reinforcing flywheel effect that competitors cannot replicate simply by spinning up new GPU instances.

You must rigorously audit your models for this exposure. If GPL-licensed code or copyrighted content has contaminated your training pipelines, the remediation cost is not just a software patch; it often requires burning the entire model to the ground and retraining it from scratch. I strongly recommend working through a full intellectual property audit checklist for AI/ML acquisitions at least twelve months before going to market.
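A first pass at the contamination audit can be automated by bucketing every dataset's license identifier. This is a minimal sketch, assuming licenses are recorded as SPDX-style identifiers; the `ALLOWED` set, the copyleft prefixes, and the `PROPRIETARY-OWNED` label are illustrative assumptions, and deciding what is actually acceptable for training remains a job for counsel.

```python
from __future__ import annotations

# Hypothetical policy sets; adjust to whatever your counsel actually approves.
COPYLEFT_PREFIXES = ("GPL-", "AGPL-", "LGPL-")
ALLOWED = {"MIT", "Apache-2.0", "BSD-3-Clause", "PROPRIETARY-OWNED"}


def classify_license(license_id: str) -> str:
    """Bucket a license identifier as 'copyleft', 'allowed', or 'unknown'."""
    lid = license_id.strip()
    if lid.startswith(COPYLEFT_PREFIXES):
        return "copyleft"
    if lid in ALLOWED:
        return "allowed"
    return "unknown"  # missing or unrecognized licenses are treated as risk, not as clean


def contamination_report(manifest: dict[str, str]) -> dict[str, list[str]]:
    """Group dataset names (sorted) by license bucket so flagged items surface first."""
    report: dict[str, list[str]] = {"copyleft": [], "unknown": [], "allowed": []}
    for dataset, license_id in sorted(manifest.items()):
        report[classify_license(license_id)].append(dataset)
    return report


if __name__ == "__main__":
    manifest = {
        "scraped-forums": "",
        "vendored-parser": "GPL-3.0-only",
        "tickets-2024": "PROPRIETARY-OWNED",
    }
    print(contamination_report(manifest))
    # {'copyleft': ['vendored-parser'], 'unknown': ['scraped-forums'], 'allowed': ['tickets-2024']}
```

Defaulting unrecognized licenses to "unknown" rather than "allowed" is deliberate: in diligence, an undocumented dataset is treated as a liability until proven otherwise.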

Furthermore, you must account for the rapid depreciation of the algorithms themselves. MIT Sloan's 2025 Generative AI Asset Depreciation Study found that the competitive advantage of a purely algorithmic foundational model now has a half-life of just 14 months. If your entire exit narrative relies on having "the best algorithm," your valuation will decay before the transaction even closes.
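The half-life figure can be turned into a rough decay curve. This sketch assumes the edge decays exponentially, which is what a fixed half-life implies; the 14-month default comes from the study cited above, and the 6-month deal timeline in the example is hypothetical.

```python
def remaining_advantage(months_elapsed: float, half_life_months: float = 14.0) -> float:
    """Fraction of an algorithmic edge left after `months_elapsed`, assuming exponential decay."""
    if half_life_months <= 0:
        raise ValueError("half-life must be positive")
    return 0.5 ** (months_elapsed / half_life_months)


if __name__ == "__main__":
    # A hypothetical 6-month process from first buyer meeting to close.
    print(round(remaining_advantage(6), 2))  # 0.74
    print(remaining_advantage(14))           # 0.5
    print(remaining_advantage(28))           # 0.25
```

Under this assumption, roughly a quarter of a purely algorithmic edge evaporates during a typical sale process, before the buyer has operated the asset for a single day.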

Structuring the AI IP Data Room to Defend the Multiple

To survive the intense scrutiny of a private equity deal team in 2026, your virtual data room must treat AI assets with the exact same rigor as financial liabilities. You cannot simply upload a high-level cloud architecture diagram and a list of API endpoints. Buyers demand empirical proof of the model's operational efficacy, unit cost-to-serve, and structural stickiness within the customer base.
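Unit cost-to-serve for an LLM-backed feature is mostly arithmetic on token volumes. The sketch below assumes per-million-token pricing for input and output tokens, a common pricing structure for commercial LLM APIs; the usage figures, prices, and the `monthly_cost_to_serve` helper are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageProfile:
    """Monthly inference usage for one customer (all figures hypothetical)."""
    requests: int
    avg_input_tokens: float
    avg_output_tokens: float


def monthly_cost_to_serve(usage: UsageProfile, usd_per_m_input: float,
                          usd_per_m_output: float) -> float:
    """Inference spend in USD for one month, given per-million-token prices."""
    per_request = (usage.avg_input_tokens * usd_per_m_input
                   + usage.avg_output_tokens * usd_per_m_output) / 1_000_000
    return usage.requests * per_request


if __name__ == "__main__":
    usage = UsageProfile(requests=100_000, avg_input_tokens=2_000, avg_output_tokens=500)
    cost = monthly_cost_to_serve(usage, usd_per_m_input=3.0, usd_per_m_output=15.0)
    monthly_revenue = 5_000.0  # hypothetical subscription revenue from this customer
    print(round(cost, 2))                    # 1350.0
    print(round(cost / monthly_revenue, 2))  # 0.27 of revenue consumed by inference
```

Computing this per customer, rather than as a blended average, shows buyers whether a handful of heavy users are quietly eroding margin.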

The most sophisticated acquirers know that the term "AI" does not automatically equate to "high margin." In fact, unmanaged inference costs routinely destroy unit economics. You must provide clear dashboards demonstrating that your AI compute costs scale sub-linearly with revenue growth. If your gross margins hover around 60% because of massive cloud GPU expenditures, you are not a high-value software company; you are a low-margin compute reseller.
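One way to test the "sub-linear" claim is to estimate the elasticity of compute cost with respect to revenue: the slope of a least-squares fit of log(cost) against log(revenue). A slope below 1.0 means costs grow more slowly than revenue. This is a sketch under that assumption, using only the standard library; the quarterly figures are invented for illustration.

```python
from __future__ import annotations

import math


def cost_elasticity(revenue: list[float], compute_cost: list[float]) -> float:
    """Least-squares slope of log(cost) vs log(revenue); below 1.0 means sub-linear scaling."""
    if len(revenue) != len(compute_cost) or len(revenue) < 2:
        raise ValueError("need two or more paired observations")
    xs = [math.log(r) for r in revenue]
    ys = [math.log(c) for c in compute_cost]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


def gross_margin(revenue: float, cost_of_revenue: float) -> float:
    """Gross margin as a fraction of revenue."""
    return (revenue - cost_of_revenue) / revenue


if __name__ == "__main__":
    quarterly_revenue = [10.0, 40.0, 160.0]  # hypothetical, in $M
    quarterly_compute = [4.0, 8.0, 16.0]     # hypothetical, in $M
    print(round(cost_elasticity(quarterly_revenue, quarterly_compute), 3))  # 0.5
    print(gross_margin(160.0, 64.0))  # 0.6, the compute-reseller zone described above
```

A log-log slope is used rather than a simple cost-to-revenue ratio because it describes the trend across periods, which is what the "scales sub-linearly" claim is really about.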

Additionally, you must empirically prove that the AI actually embeds your product deeper into the customer's daily operations. EY's 2026 Intellectual Property Valuation Index highlights that 80% of perceived AI enterprise value derives from workflow integration lock-in rather than from any underlying algorithmic superiority. The platforms that command 14x multiples are the ones that live in the critical path of the user's daily tasks, passively capturing proprietary telemetry and user corrections that feed back into the model.

When presenting your platform architecture, be aggressively transparent about what is custom and what is purely commoditized. Identify the exact technical boundaries between foundational open-source models, your proprietary fine-tuning layers, and your workflow integration logic. Do not fall into the trap of over-engineering the data stack just to appear artificially sophisticated, a phenomenon we actively dismantle in our analysis of the modern data stack trap and Snowflake technical liabilities.
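Those boundaries can be recorded as a machine-readable component inventory, so that every asset in the data room is tagged with its layer and ownership. The three layers mirror the paragraph above; `Component`, `Layer`, `segment_stack`, and the example component names are hypothetical.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    FOUNDATION = "open-source or commercial foundation model"
    FINE_TUNE = "proprietary fine-tuning layer"
    WORKFLOW = "workflow integration logic"


@dataclass(frozen=True)
class Component:
    name: str
    layer: Layer
    owned: bool  # True only if the company holds clear title to this IP


def segment_stack(components: list[Component]) -> dict[Layer, list[str]]:
    """Group components by layer, labeling anything the company does not own."""
    grouped: dict[Layer, list[str]] = defaultdict(list)
    for c in components:
        label = c.name if c.owned else f"{c.name} (third-party)"
        grouped[c.layer].append(label)
    return dict(grouped)


if __name__ == "__main__":
    stack = [
        Component("base-llm", Layer.FOUNDATION, owned=False),
        Component("claims-adapter", Layer.FINE_TUNE, owned=True),
        Component("crm-sync", Layer.WORKFLOW, owned=True),
    ]
    for layer, names in segment_stack(stack).items():
        print(f"{layer.value}: {', '.join(names)}")
```

Labeling third-party components explicitly, instead of omitting them, is what "aggressively transparent" looks like in practice: the buyer sees exactly where your ownership starts.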

Ultimately, valuing AI intellectual property in 2026 requires aggressively separating the marketing from the math. Build your defense around the unique data assets you unconditionally own, the human-in-the-loop workflows your application controls, and the provable, clean lineage of your repositories. That is the only viable path to protect your exit multiple when the technical auditors arrive.